Scoring in Cody

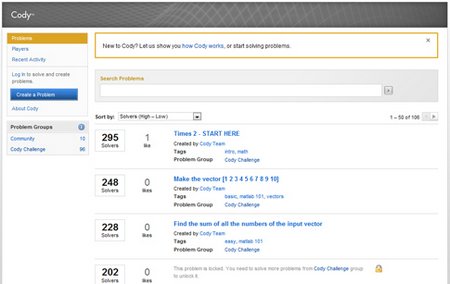

As Helen wrote last week, Cody is a new MATLAB-based game that’s available on MATLAB Central.

We’ve been happily surprised at the amount of activity on Cody. As of Monday morning, 700 people have created 130 problems and provided more than 17,000 correct answers (not to mention a good many incorrect ones).

We’ve gotten a lot of enthusiastic feedback about Cody, which we’re using to build the next set of features. Naturally, some of that feedback came in the form of complaints, and the loudest of these complaints went something like this (I’m paraphrasing here):

“Your scoring system is terrible. Cody promotes bad programming practice by encouraging people to write short, cryptic programs.”

What’s this person talking about? Does he have a point?

Let’s consider how scoring works on Cody. Imagine that you are the official Cody Scorer. People are bringing you dozens of solutions every minute, and they’re all crowding around you asking “Is this one good? How is this one?” It doesn’t take you very long to tell which answers are correct. But you’d like to provide more information than that. You’d like to rank the correct answers somehow. How should you quickly and consistently rank all these answers? Subjective measures like elegance and readability, while appealing, are clearly off the table. If we had an Elegantometer, we would use it. But we don’t. Should we use cyclomatic complexity? Or the number of Code Analyzer messages? What metric would you use?

We decided on code size as the least bad option. It has a lot going for it. It’s simple, objective, consistent, and granular. By “granular,” we mean that we get a reasonably smooth distribution of code sizes for any given problem, as opposed to two or three giant, uniform clusters.

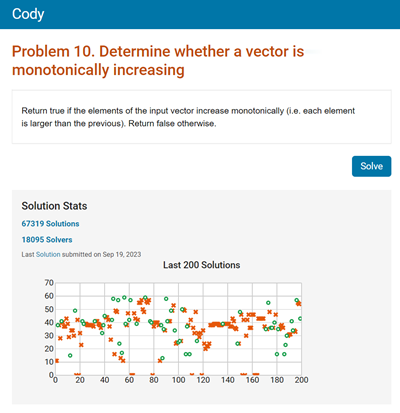

Now we have another complication. If we agree that code size is worth measuring, how shall we measure it? Lines of code? Bad idea. People will cram everything onto one line. Character count? No, because we don’t want to punish people for adding comments and giving their variables nice descriptive names. Again, the least bad option we came up with is to measure the size of the code after it’s been pre-chewed and made ready for digestion by the MATLAB interpreter. We count the number of nodes in the parse tree. How? There’s already a Cody problem on this topic. And if you want to try out the “official” scoring code yourself, you can find it on the File Exchange.
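To make the node-counting idea concrete, here is a minimal sketch in Python rather than MATLAB. It is an analogy only, not Cody's scoring code: it uses Python's standard `ast` module, and the helper `node_count` and the three sample snippets are made up for illustration. The point it shows is that comments and longer variable names leave the count unchanged, while extra statements increase it.

```python
import ast


def node_count(source: str) -> int:
    """Count every node in the Python parse tree of `source`.

    Hypothetical helper for illustration; Cody measures MATLAB parse trees.
    """
    tree = ast.parse(source)
    return sum(1 for _ in ast.walk(tree))


# Terse version: short names, no comments.
terse = "def f(x):\n    return x * 2\n"

# Same logic with a comment and descriptive names.
verbose = (
    "# Double the input value.\n"
    "def double_value(input_value):\n"
    "    return input_value * 2\n"
)

# Same result, but with an extra assignment statement.
longer = "def f(x):\n    y = x * 2\n    return y\n"

if __name__ == "__main__":
    for label, src in [("terse", terse), ("verbose", verbose), ("longer", longer)]:
        print(label, node_count(src))
```

In this sketch the terse and verbose versions get the same count, because comments never reach the parse tree and a name is one node regardless of its length. The third version scores higher because it adds an assignment.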

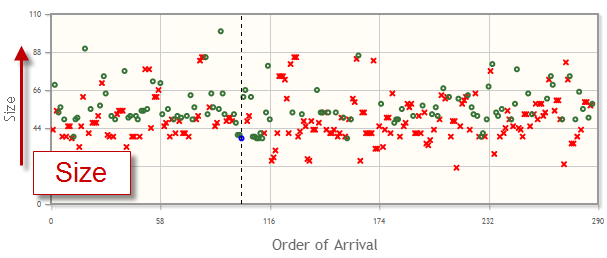

Any metric can be gamed. And we know that short code isn’t always better code, as you can see by looking at any obfuscated programming contest. But code is like medicine. If you can use less of it and still be healthy, you should. Which is to say, other things being equal (say, readability), shorter code generally is better. And size is certainly a useful dimension to refer to when reasoning about large numbers of programs. Here is a picture of 287 solutions to the Nearest Numbers problem.

The crucial point here is that Cody is just a game.

Over the last week we have learned that this game is good at motivating people to solve real problems, and that even as they scramble to come up with “winning” solutions in Cody, they are learning valuable programming practices. And most of all, people seem to be enjoying themselves.

So while some people are telling us that the scoring is bad, others tell us that they are learning a lot. What do you think?

Category: Community, MATLAB Central, Programming
