Code Generation: Run MATLAB Code and Simulink Models Anywhere!

I would like to start by wishing all of you a Happy New Year! In the spirit of the New Year and of trying out new things, we want to introduce you to our latest series of student competition tutorials on code generation. Connell, our regular guest blogger (… we should call him a co-author of this blog …), wrote this article.

What is Code Generation?

Code generation is the process of generating low-level code directly from a high-level programming language or modelling environment. But why is this important? MATLAB is a multi-paradigm, high-level programming language used by more than 2 million engineers and scientists worldwide, and it is taught at more than 5000 universities around the world to students in science, technology, engineering, and math disciplines. In my many conversations with MATLAB users, the most common theme is always “MATLAB and Simulink are my go-to to prototype and simulate algorithms”. But what comes next?

If you are on a student competition team, there is a good chance that you are working with embedded systems: tiny computers that are the brains of your racecars, airplanes, boats, robots, or the various other thingamajigs that are trying to make this world a better place. So how do you take your algorithms from the MATLAB desktop to these embedded systems? The answer is code generation. Through these video tutorials, we hope to teach you how to convert your MATLAB and Simulink algorithms into low-level C/C++ source code that can either be integrated into other, independent code bases or built into executables that can be deployed directly to an embedded system.

How can it help you?

This tutorial series will teach you how to generate low-level code from MATLAB and Simulink through the lens of a simple autonomous system. We created a ball-tracking system in which a camera mounted on a servo motor tracks a green ball. The embedded computer takes in a data stream from the sensor (the camera), identifies the ball's position, and computes actuator commands that keep the ball in the center of the camera image. This is the principle behind many autonomous systems: use sensor information to compute control commands. Common examples include lane-keeping assist in self-driving cars, where a camera detects lane markings and steering commands are computed, and an aircraft autopilot in cruise mode, where an IMU is used to estimate orientation and control-surface commands are computed to maintain a desired orientation.
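To make the sense, compute, actuate idea concrete, here is a minimal base-MATLAB sketch of such a loop for a pan camera. Everything in it is an assumption for illustration: the ball sits at a fixed bearing, the camera scale `pixPerDeg` and the proportional gain `Kp` are made-up values, and the "sensor reading" is computed from the pan angle instead of being read from a real camera.

```matlab
% Hypothetical sense -> compute -> actuate loop for a pan camera (illustrative values only)
ballAngle = 20;    % true bearing of the ball in degrees (unknown to the controller)
pixPerDeg = 8;     % assumed camera scale: pixels of image offset per degree of bearing error
Kp        = 0.5;   % assumed proportional gain: (deg/s) of pan rate per pixel of error
Ts        = 0.05;  % loop period in seconds
panAngle  = 0;     % current camera pan angle in degrees

for k = 1:100
    % Sense: the camera "sees" the ball offset from the image center, in pixels
    pixelError = (ballAngle - panAngle) * pixPerDeg;
    % Compute: turn the pixel error into a pan-rate command
    rateCmd = Kp * pixelError;
    % Actuate: the motor integrates the rate command into a new pan angle
    panAngle = panAngle + rateCmd * Ts;
end
fprintf('Pan angle after 100 steps: %.4f deg\n', panAngle);
```

With these numbers the bearing error shrinks by a factor of 0.8 every step, so the camera ends up pointing at the ball; the real system replaces the simulated sensor with image processing and the simple rate integration with a motor and its PID controller.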

Simulating the System

Simulation is the cheapest way to test algorithms. MATLAB and Simulink provide many different tools for designing simulations, so you can test different scenarios and edge cases and build robust code.

These tutorials follow the same approach. We started by trying to identify the ball. Once you have downloaded the free MATLAB Support Package for USB Webcams, sampling data from a camera in MATLAB is as easy as:

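```matlab
vidObj = imaq.VideoDevice('winvideo', 1);   % connect to the first camera on the 'winvideo' adaptor
vidObj.ReturnedColorSpace = 'YCbCr';        % return frames in the YCbCr color space
vidObj.ReturnedDataType = 'uint8';          % return 8-bit pixel values
preview(vidObj);                            % open a live preview window
```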

Now that we have a data stream from the sensor, we tried a few different algorithms to achieve our objective – locate the ball in the image. After a few simulations we settled on a simple color thresholding algorithm that is efficient and serves our purpose.
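Here is a minimal sketch of color thresholding on a YCbCr frame, written in base MATLAB so it runs without a camera. The synthetic frame, the 10x10 "ball" patch, and the threshold values `cbMax` and `crMax` are hypothetical and are not the thresholds used in the tutorial. Green pixels have low Cb and low Cr values, so the sketch keeps pixels below both thresholds and takes the centroid of the resulting mask as the ball position.

```matlab
% Synthetic 120x160 YCbCr frame with a neutral gray background (Cb = Cr = 128)
frame = zeros(120, 160, 3, 'uint8');
frame(:, :, 1) = 128;   % Y (luma)
frame(:, :, 2) = 128;   % Cb
frame(:, :, 3) = 128;   % Cr
% Paint a 10x10 "green ball" patch: green has low Cb and low Cr
frame(51:60, 101:110, 2) = 54;
frame(51:60, 101:110, 3) = 34;

% Hypothetical chroma thresholds for "green" (tune these on real frames)
cbMax = 100;
crMax = 100;
mask = frame(:, :, 2) < cbMax & frame(:, :, 3) < crMax;

% Ball position = centroid of the pixels that passed the threshold
[rows, cols] = find(mask);
if isempty(rows)
    ballFound = false; ballRow = NaN; ballCol = NaN;
else
    ballFound = true;
    ballRow = mean(rows);
    ballCol = mean(cols);
end

% Horizontal offset of the ball from the image center, in pixels
errorX = ballCol - size(frame, 2) / 2;
fprintf('Ball found: %d, centroid (row, col) = (%.1f, %.1f), errorX = %.1f\n', ...
    ballFound, ballRow, ballCol, errorX);
```

Thresholding only the chroma channels makes the detection somewhat less sensitive to brightness changes than thresholding RGB values directly; the horizontal offset `errorX` is the kind of signal the control loop tries to drive to zero.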

Next, we moved on to the control aspect of our problem. We decided that a PID controller was the best control scheme for the low-level control of the motor. To simulate this system, we created a mathematical model of the motor in Simulink and, using a chirp input signal and the auto-tune functionality of the PID block, arrived at a set of P, I, and D gains for this model. Watch this tech talk series to learn about the basics of PID control.
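The sketch below shows a discrete PID loop on a first-order motor model in plain MATLAB, as a stand-in for the Simulink model. The model structure (gain `K`, time constant `tau`), the sample time `Ts`, and the gains `Kp`, `Ki`, `Kd` are all assumptions chosen for illustration. They are not the auto-tuned values from the tutorial, and the Simulink PID block offers more options (such as a derivative filter) than this hand-written version.

```matlab
% Hypothetical first-order motor model: tau * dy/dt = -y + K * u
K   = 1.0;    % steady-state gain (assumed)
tau = 0.1;    % time constant in seconds (assumed)
Ts  = 0.01;   % controller sample time in seconds (assumed)

% Hand-picked gains for this sketch (NOT the auto-tuned gains from the tutorial)
Kp = 2; Ki = 10; Kd = 0.01;

N = 500;                 % number of samples (5 s of simulated time)
r = ones(1, N);          % unit step setpoint
y = zeros(1, N + 1);     % plant output, y(1) is the initial condition
u = zeros(1, N);         % controller output
integ = 0;               % integral of the error
ePrev = 0;               % previous error, for the derivative term

for k = 1:N
    e = r(k) - y(k);                 % tracking error
    integ = integ + Ts * e;          % backward-Euler integral (includes the current error)
    deriv = (e - ePrev) / Ts;        % backward-difference derivative
    u(k) = Kp * e + Ki * integ + Kd * deriv;
    ePrev = e;
    % Forward-Euler update of the motor model
    y(k + 1) = y(k) + Ts / tau * (-y(k) + K * u(k));
end
fprintf('Final output: %.4f (setpoint %.1f)\n', y(end), r(end));
```

With these values the closed loop is stable and the integral term removes the steady-state error, so the output settles at the unit setpoint. Swapping in the gains from the PID block's auto-tuner and a model identified from your own motor is where the Simulink workflow earns its keep.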

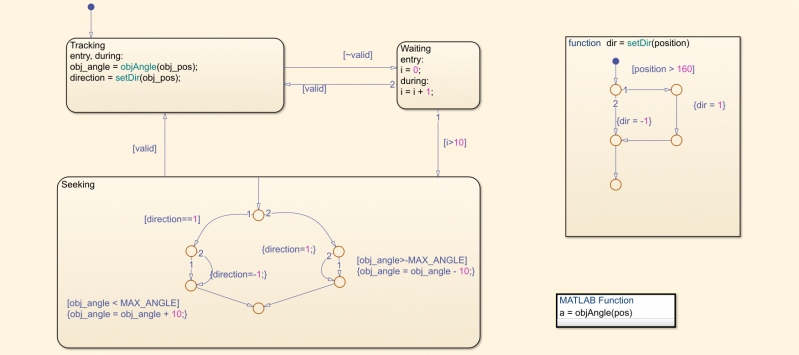

To define the input to our PID controller, or what I like to call the high-level control, we used Stateflow to define the different modes of operation: a seeking mode, in which there is no object in the camera frame and the motor turns the camera to find the object; a tracking mode, in which the object is detected and the motor tries to keep it in the center of the camera frame; and a default waiting mode that enables a smooth transition between the other two modes.
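Stateflow charts are drawn graphically, but the same kind of mode logic can be sketched as a `switch`-based state machine in plain MATLAB. The transition rules below are an assumption about how such a chart might behave, not the tutorial's actual chart: the system starts in Waiting, moves to Tracking when the ball is detected, falls back to Waiting when the ball is lost, switches to Seeking after `waitLimit` consecutive missed frames (a hypothetical parameter), and returns to Waiting once the ball reappears.

```matlab
% Hypothetical per-frame detection results (true = ball found in the frame)
detected  = [false false false false true true false true true true];
waitLimit = 3;          % missed frames allowed in Waiting before Seeking (assumed)

mode      = 'Waiting';  % default mode
missCount = 0;          % consecutive frames without the ball
modeLog   = cell(1, numel(detected));

for k = 1:numel(detected)
    switch mode
        case 'Waiting'
            if detected(k)
                mode = 'Tracking';
                missCount = 0;
            else
                missCount = missCount + 1;
                if missCount >= waitLimit
                    mode = 'Seeking';      % ball has been gone too long: start searching
                end
            end
        case 'Tracking'
            if ~detected(k)
                mode = 'Waiting';          % ball lost: pause before searching
                missCount = 1;             % this frame already counts as a miss
            end
        case 'Seeking'
            if detected(k)
                mode = 'Waiting';          % ball reappeared: settle before tracking
                missCount = 0;
            end
    end
    modeLog{k} = mode;
end
disp(modeLog);
```

Routing every transition through Waiting mirrors the role described above: it keeps the controller from jumping straight between the seeking motion and the tracking setpoint.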

Implementing on Hardware

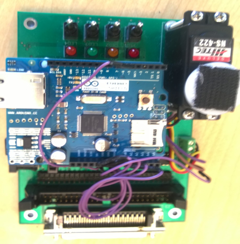

Now that we have three independently developed and tested modules, we need to implement the system on hardware. This is where code generation comes in. Working with hardware is usually the most challenging task for student competition teams, and selecting the right hardware is key. We chose a Raspberry Pi 3 to process the sensor data and select the operating mode, and an Arduino Uno for the low-level motor control. The Raspberry Pi and the Arduino communicate over a serial connection through the USB port. We understand that for most teams, MATLAB and Simulink are just two tools in the toolbox, and you may be using other programming languages to program your hardware. But bear with me: these tutorials will teach you not only how to deploy code directly onto target hardware, but also how to generate code for individual modules that can be taken into a different programming environment and integrated independently of MATLAB or Simulink.

We will use Simulink, Simulink Coder, and board-specific driver libraries for the Arduino and Raspberry Pi, called Hardware Support Packages, to deploy the code to the hardware with the click of a button. Hardware Support Packages are available for several hardware boards and can be found here (if you are looking for support for a board that is not currently supported, follow the steps on this page to get in touch with our development team). Download the library for free, follow the set-up steps, and use it for your application! Once you have installed the library, you will find a set of blocks that help you interface with the different input and output ports on the board.

MATLAB code can be used in Simulink through a MATLAB Function block, so we created two Simulink models. The first contains the two modules to be deployed to the Raspberry Pi. Its input is connected to the From Video Capture block, and its outputs are connected to the SDL Video Display block, to visualize the camera feed, and to the Serial Write block, to communicate with the Arduino. If you followed the set-up steps correctly, clicking the “Deploy to Hardware” button will build this model and deploy it directly to the Raspberry Pi. The Arduino serial bus can only transmit data of the “uint8” data type, so make sure you convert your signals to that data type before sending them. This is where knowledge of the hardware you are working with is important.
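One way to fit a control command into the uint8 constraint is to scale it into the 0 to 255 range before writing it, and to undo the scaling on the Arduino side. The encoding below (a normalized command in [-1, 1] mapped to a single byte) is a hypothetical scheme for illustration, not necessarily the one used in the tutorial. MATLAB's `uint8` conversion already rounds to the nearest integer and saturates out-of-range values, so the explicit clamp mainly documents intent.

```matlab
% Hypothetical encoding: normalized command in [-1, 1] -> one byte in [0, 255]
encode = @(cmd) uint8(round((min(max(cmd, -1), 1) + 1) * 127.5));
% Inverse mapping, as the receiving side would apply it
decode = @(b) double(b) / 127.5 - 1;

cmds  = [-1.5 -1 -0.5 0 0.5 1 2];   % includes out-of-range values to show clamping
bytes = encode(cmds);               % uint8 values ready for a serial write
back  = decode(bytes);              % values recovered after transmission

disp(bytes);
disp(back);
```

For in-range commands, the round trip costs at most half a quantization step (1/255 in command units), which is likely acceptable for a servo speed command. If you need more resolution, you could split a wider integer across several bytes instead.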

The second model contains the low-level control module, with its input coming from the Serial Receive block and its outputs going to the Continuous Servo Write and Digital Output blocks from the Arduino Hardware Support Package. Plug the Arduino into your computer and then build the code. Simple, right?

Conclusion

This tutorial series will also teach you how to optimize generated code for speed, memory usage, number of generated files, and more, so that the code you generate fits your application. Once the code has been built onto the hardware, you can use a powerful Simulink feature called external mode. External mode, as shown in the video below, allows you to run your algorithm on the hardware target while still interacting with it from Simulink. You can visualize outputs and the behavior of your algorithm to make sure it is doing what you want it to do.

We hope that this series will help you shorten your development time and enable you to use algorithms developed in MATLAB or Simulink without having to rewrite them in a low-level language.

So, what are you waiting for? Download the files, watch the videos, and tell us your thoughts! Also, do not forget to check out the other complementary video tutorial series created for student competition teams!
