You Would Not Trust a Human Without Evidence. Why Trust an AI Agent?

Verification, standards, and the role of executable models in digital engineering

Trust Is Proven, Not Assumed

In the industries our customers operate in, including aerospace and defense, automotive, industrial automation and machinery, medical devices, and rail, trust is never assumed. It is proven.

These industries are built on an uncomfortable truth: humans make mistakes. Systems behave in unexpected ways. Interactions compound risk. That is why certification frameworks and standards such as DO‑178C for airborne software in aerospace and defense, ISO 26262 for automotive functional safety, and IEC 61508 for industrial functional safety, along with the railway standards derived from it, exist. They are not bureaucratic overhead. They are how organizations enforce discipline, reduce risk, and deliver systems they can stand behind.

For background on these standards and how they shape engineering practice, see MathWorks resources for: Aerospace and Defense certification, Automotive ISO 26262, Medical Devices standards, and Railway systems standards.

These standards share common expectations. Engineering artifacts must be inspectable, reproducible, traceable, and verifiable. Behavior must be demonstrated, not asserted. Evidence must be regenerable on demand. Decisions must remain defensible years after they were made.

This discipline exists because complexity makes intuition unreliable.

AI agents do not change this reality. They reinforce it.

Why Agentic AI Makes Evidence More Important, Not Less

Agentic systems are inherently probabilistic. They can generate models, configurations, or architectures that look reasonable and run successfully. But plausibility is not proof.

AI can help create verification assets, including test cases, review checks, and automation logic. That is useful, and it will become an important part of engineering workflows. But those outputs are not self-validating. They still need to be traced to requirements, executable system behavior, and independently repeatable checks.

An AI agent can produce an artifact that executes while still violating assumptions, breaking traceability, or embedding subtle errors that only surface later during integration or certification review. It can also produce a test that appears reasonable but fails to exercise the right behavior, misses a boundary condition, or reflects the same flawed assumption as the generated implementation.
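One of these checks is simple enough to sketch: confirming that every generated test traces to a real requirement, and that every requirement has at least one test. The following is a minimal Python illustration, not a description of any particular tool. The `VerificationCase` type, the `trace_gaps` function, and all IDs are hypothetical names invented for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VerificationCase:
    """Hypothetical record for one test, whoever (or whatever) wrote it."""
    test_id: str
    verifies: tuple[str, ...]  # requirement IDs this test claims to cover
    author: str                # "human" or "agent"; illustrative only


def trace_gaps(requirement_ids, tests):
    """Return (uncovered requirements, tests citing unknown requirements,
    tests that trace to nothing)."""
    known = set(requirement_ids)
    covered = {req for t in tests for req in t.verifies}
    uncovered = sorted(known - covered)
    dangling = sorted(
        t.test_id for t in tests if any(req not in known for req in t.verifies)
    )
    untraced = sorted(t.test_id for t in tests if not t.verifies)
    return uncovered, dangling, untraced


requirements = ["REQ-1", "REQ-2", "REQ-3"]
tests = [
    VerificationCase("T-1", ("REQ-1",), "human"),
    VerificationCase("T-2", ("REQ-1", "REQ-9"), "agent"),  # REQ-9 does not exist
    VerificationCase("T-3", (), "agent"),                  # traces to nothing
]

uncovered, dangling, untraced = trace_gaps(requirements, tests)
print("Requirements without a test:", uncovered)
print("Tests citing unknown requirements:", dangling)
print("Tests with no trace at all:", untraced)
```

A check like this only establishes that links exist. It says nothing about whether a test exercises the right behavior or boundary condition, which still requires review against the executable system.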

If we already do not trust humans to work without verification, it would make little sense to trust AI agents more.

The real question facing engineering teams is not whether to use agentic AI. Customers already are. The critical question is how to adopt agentic workflows without bypassing the safeguards their industries rely on.

What matters is not whether an AI agent can produce an output. What matters is whether that output is grounded in system context, constrained by engineering intent, and checked against evidence the organization can defend.

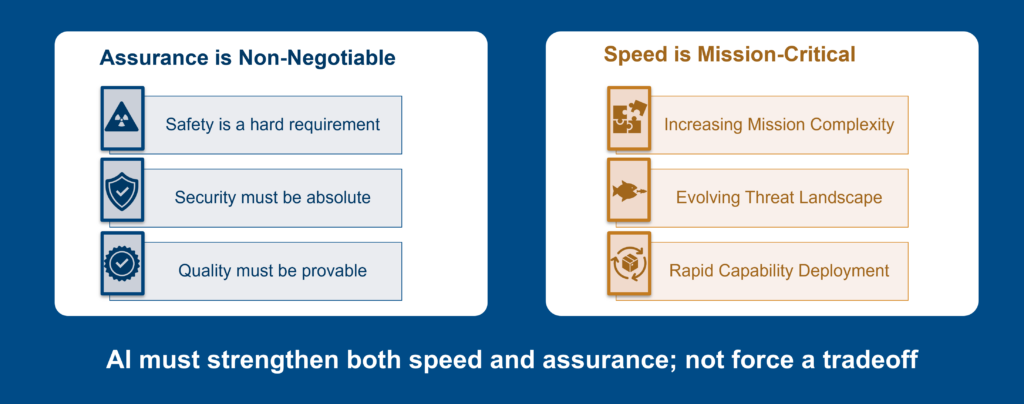

This is the same false tradeoff we see repeatedly between AI speed and engineering rigor. In reality, models and AI are force multipliers when used together, not substitutes.

Figure 1: Agentic AI should not force a tradeoff between speed and assurance. In engineering workflows, faster output only matters when it remains traceable, verifiable, and supported by evidence.

AI can increase engineering throughput. But more output does not automatically mean more clarity. Without traceability, configuration control, and repeatable verification, teams may simply create more artifacts that are harder to trust.

Digital Threads Need Executable Evidence

This is where the digital engineering concepts explored in the Digital Engineering De‑coded blog series become essential rather than aspirational.

The digital thread exists to preserve intent, evidence, and accountability as work moves from requirements to architecture, design, implementation, verification, and validation. It helps engineering teams understand not just what changed, but why it changed, what it affects, and what evidence must be regenerated.

A digital thread that only connects static digital artifacts across tools can improve navigation, but it does not automatically create trust. Many organizations already have requirements databases, architecture views, models, PLM records, test results, documents, and review artifacts in digital form. That is progress. But if those artifacts remain disconnected from executable behavior, teams still need to manually interpret impact, rerun checks, and reconstruct the evidence needed to support a decision.

When change occurs, static links are not enough. Engineering teams need a way to evaluate impact, rerun analyses, regenerate evidence, and confirm that the system still behaves as intended.
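The first step, evaluating impact, can be pictured as a walk over the links in the thread. The sketch below is a minimal Python illustration under simplifying assumptions: the artifact IDs and the `LINKS` table are hypothetical, and an edge from A to B is taken to mean that B is derived from or depends on A.

```python
from collections import deque

# Hypothetical digital thread. An edge A -> B means
# "B is derived from or depends on A".
LINKS = {
    "REQ-12": ["ARCH-3", "TEST-40"],
    "ARCH-3": ["MODEL-7"],
    "MODEL-7": ["CODE-7", "SIM-RESULT-7"],
    "TEST-40": ["SIM-RESULT-7"],
    "CODE-7": ["TEST-REPORT-7"],
}


def impacted(changed, links):
    """Breadth-first walk returning every artifact downstream of `changed`."""
    seen = {changed}
    order = []
    queue = deque([changed])
    while queue:
        node = queue.popleft()
        for downstream in links.get(node, []):
            if downstream not in seen:
                seen.add(downstream)
                order.append(downstream)
                queue.append(downstream)
    return order


# Everything listed here is stale after MODEL-7 changes and needs to be
# regenerated or rechecked before it can serve as evidence again.
stale = impacted("MODEL-7", LINKS)
print(stale)
```

Knowing what is stale is only half of the problem. The list becomes useful when each stale item can actually be regenerated by rerunning a model, analysis, or test, rather than reconstructed by hand.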

That is where executable models become central.

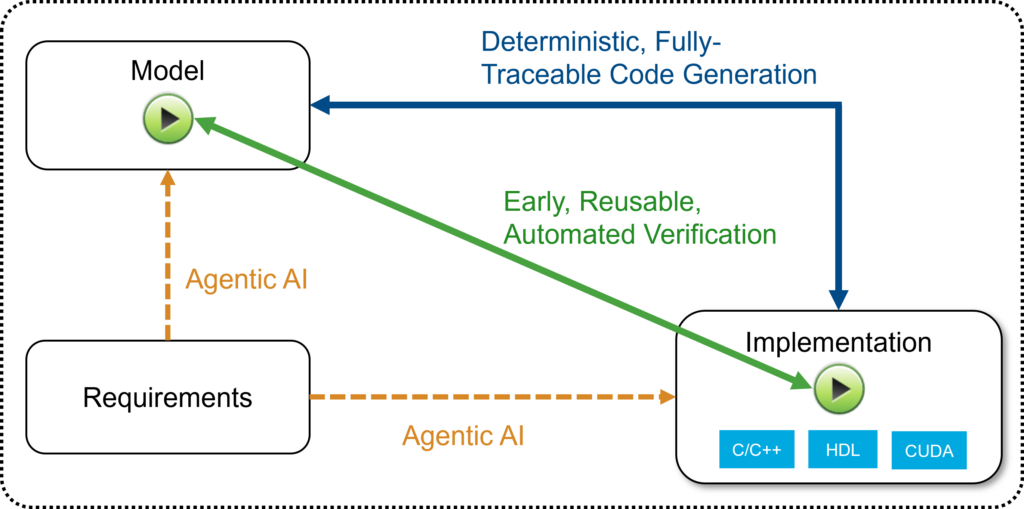

Executable models are not just documentation. They are living system representations that can be simulated, stressed, and verified continuously. When an AI agent modifies a model or generates an artifact, its contribution can be evaluated against system-level expectations. The model, not the agent’s output, becomes the arbiter of engineering intent.

In this context, simulation is not merely a productivity tool. It provides an executable basis for checking whether AI-generated outputs are consistent with system intent, constraints, and expected behavior.

That distinction matters. In regulated and safety-critical engineering, a successful run is not the same as evidence. A generated artifact is not automatically acceptable because it compiles, simulates, or passes a narrow test. Teams need to understand the assumptions behind the result, the requirements it traces to, the scenarios it was checked against, and the evidence that can be regenerated when questions arise later.
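To make the difference between a successful run and evidence concrete, here is a minimal Python sketch. It assumes a deliberately simple plant (a first-order system under proportional control, integrated with forward Euler) and two illustrative requirements: a steady-state error limit and a peak limit. The function names, parameter values, and thresholds are all assumptions made for this example and do not come from any standard or product.

```python
import hashlib
import json


def simulate(kp, a=1.0, setpoint=1.0, dt=0.01, steps=1000):
    """Forward-Euler simulation of dx/dt = -a*x + kp*(setpoint - x)."""
    x, trace = 0.0, []
    for _ in range(steps):
        u = kp * (setpoint - x)
        x += dt * (-a * x + u)
        trace.append(x)
    return trace


def evidence(kp, setpoint=1.0, max_error=0.1, limit=1.2):
    """Run the model and return a record that can be regenerated on demand."""
    trace = simulate(kp, setpoint=setpoint)
    record = {
        "inputs": {"kp": kp, "setpoint": setpoint,
                   "max_error": max_error, "limit": limit},
        "final_error": round(abs(setpoint - trace[-1]), 6),
        "peak": round(max(trace), 6),
    }
    record["passed"] = (record["final_error"] <= max_error
                        and record["peak"] <= limit)
    # The digest ties the verdict to the exact inputs and results, so a
    # later rerun can be compared against the stored record.
    payload = json.dumps(record, sort_keys=True).encode()
    record["digest"] = hashlib.sha256(payload).hexdigest()
    return record


# Both candidate gains "run successfully"; only one meets the requirement.
for candidate_kp in (4.0, 19.0):
    rec = evidence(candidate_kp)
    print(candidate_kp, rec["final_error"], rec["passed"], rec["digest"][:12])
```

Both gains produce a simulation that completes without error, which is exactly why completion alone is not evidence. The record captures the assumptions, the thresholds, and a digest that a rerun on the same platform should reproduce.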

An agent working outside the digital thread may be fast, but its output can become another disconnected artifact. An agent working inside a governed, executable workflow can help accelerate engineering while preserving the evidence needed to trust the result.

Figure 2: Executable models help ground AI-generated artifacts in system intent, behavior, and verification evidence.

Where Agentic AI Fits

This perspective aligns closely with Sarah Dagen’s recent post on combining Model‑Based Systems Engineering with agentic AI. In that workflow, agents orchestrate execution, while engineers retain responsibility for system intent, validation criteria, and certification readiness.

Agentic AI delivers value when it operates within executable, traceable workflows, not when it bypasses them.

A useful framing for agentic workflows is not simply keeping “humans in the loop,” but ensuring “humans are in the lead.” Engineers define intent, constraints, validation criteria, and acceptance thresholds. Agents help execute work within those boundaries.
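One way to picture "humans in the lead" is a review gate in which the acceptance criteria are fixed by an engineer and an agent can only submit candidates against them. The Python sketch below is illustrative only. The `Criterion` type, the metric names, the thresholds, and the owner label are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)  # frozen: criteria cannot be edited after definition
class Criterion:
    metric: str
    lower: float
    upper: float
    owner: str  # the engineer accountable for this threshold


# Defined by engineers, not by the agent. Values are illustrative.
CRITERIA = (
    Criterion("settling_time_s", 0.0, 2.0, "engineer_a"),
    Criterion("overshoot_pct", 0.0, 5.0, "engineer_a"),
)


def review(candidate_metrics, criteria=CRITERIA):
    """Accept a candidate only if every criterion has in-range evidence."""
    findings = []
    for c in criteria:
        value = candidate_metrics.get(c.metric)
        if value is None:
            findings.append(f"{c.metric}: no evidence supplied")
        elif not (c.lower <= value <= c.upper):
            findings.append(f"{c.metric}: {value} outside [{c.lower}, {c.upper}]")
    decision = "accept" if not findings else "escalate"
    return decision, findings


print(review({"settling_time_s": 1.4, "overshoot_pct": 3.0}))
print(review({"settling_time_s": 1.4}))                        # missing metric
print(review({"settling_time_s": 2.6, "overshoot_pct": 3.0}))  # out of range
```

The design choice worth noting is that missing evidence is treated the same as failing evidence: the candidate is escalated to a person rather than accepted by default.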

This is where agentic AI can be powerful. It can help engineers find relevant information, generate candidate solutions, create tests, update models, automate repetitive steps, and explore design alternatives. But its value depends on the engineering workflow around it.

Emerging standards such as SysML v2 strengthen this foundation further by making system models more machine-readable and API-accessible. That matters because AI agents need context. They need to understand system structure, relationships, constraints, interfaces, requirements, and verification intent. Without that context, they are working from fragments. With that context, they can operate within a more meaningful engineering framework.

Grounded AI is not just AI with access to more data. It is AI connected to the right context, constrained by the right workflows, and checked against the right evidence.

Closing Thought

In regulated and safety‑critical domains, AI‑generated artifacts must be treated like any other engineering output. They must be reviewed, tested, verified, reproduced, and traced through the digital thread.

AI can help create models, scripts, tests, and other engineering artifacts. It can reduce repetitive work and help engineers move faster. But it cannot replace the need for evidence. It cannot remove the need for system context. It cannot make an unverifiable workflow trustworthy.

If your organization is exploring agentic AI, do not start by asking how autonomous the agent can be. Ask how grounded it is. Ask where it fits in the digital thread. Ask how its outputs are verified. Ask what evidence you will rely on when questions arise later.

The promise of agentic AI in engineering is not simply to do the same work faster. It is to reduce the hidden friction between idea, model, verification evidence, and engineering decision.

In engineering, trust is never declared. It is demonstrated.
