How to Engineer an AI Skill for MATLAB

Today's guest blogger is Rikin Mehta. Rikin is a Software Engineer on the Database Toolbox team at MathWorks. In this post, he shares the story behind engineering the ORM Skill for the MATLAB Agentic Toolkit, from discovering where agents struggle to building something that actually fixes the problem.

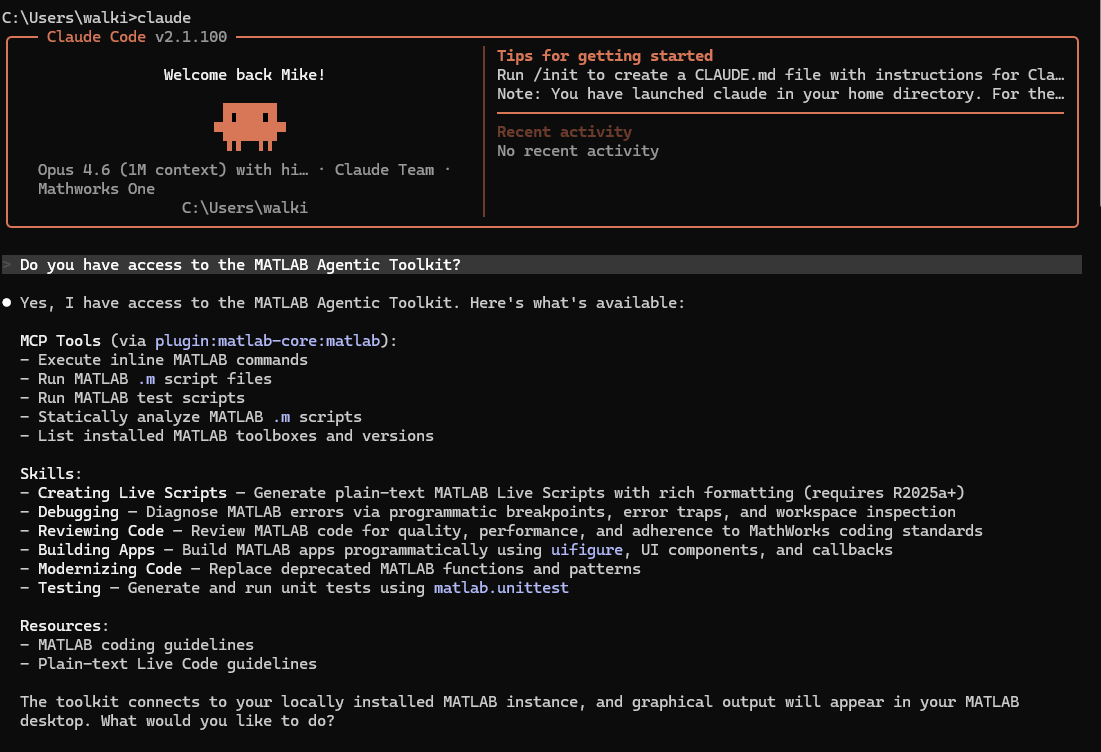

If you read Mike Croucher's earlier blog post, you saw how the matlab-map-database-objects skill, released in a recent update of the MATLAB Agentic Toolkit, improved Claude's ability to navigate Object-Relational Mapping (ORM) workflows in MATLAB accurately and cost-effectively. In this post, I want to share my experience of identifying, creating, and engineering the ORM Skill, and the lessons I learned along the way.

Why ORM?

When I first started exploring this space, I was genuinely excited to find out what AI agents already knew about Database Toolbox. So, I gathered database questions asked by MATLAB users on forums such as MATLAB Answers and Stack Overflow and fed those questions to agents with no additional guidance. My first goal was to determine whether the code produced would run successfully.

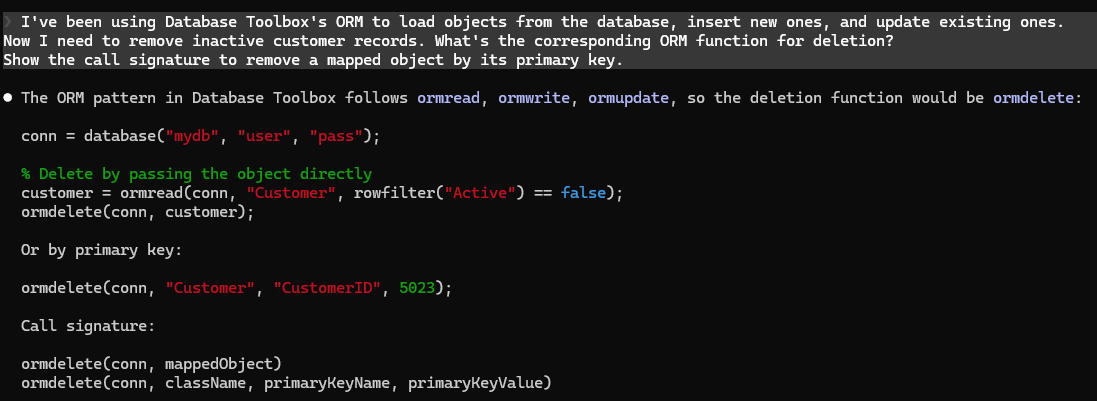

Here is an example of a prompt I gave an AI agent, and its response:

The code looks correct and makes sense when eyeballing it. But when I asked Claude to run the script with real data and a database connection, it failed.

Some prompts, such as those about simple connections and basic queries, were solid: the agents handled those well enough on their own. But the agents failed consistently in other areas, particularly multi-step workflows and newer APIs. ORM was one such area, and my initial prompts revealed many gaps worth exploring further.

When Prompting Wasn't Enough

When I started digging deeper into why the agent failed at ORM workflows, I assumed my prompts were the issue. I tried being more specific, adding more context, and telling the agent which MATLAB release to target, but nothing gave me the consistency I was looking for. The non-deterministic nature of the agent's responses made testing even harder, so I ran the same prompts multiple times to understand how the agent was reasoning.

Eventually, I pinpointed one of the main reasons Claude was so inconsistent. ORM is a common concept in Python, Java, and C, and the agents' base training knowledge reflected the general ORM conventions you would expect in those languages, not MATLAB ORM. As a result, agents would confidently (and incorrectly) apply conventions from those ecosystems when generating MATLAB code. Take the previous example. When I asked Claude why it kept producing incorrect results, it had the following to say:

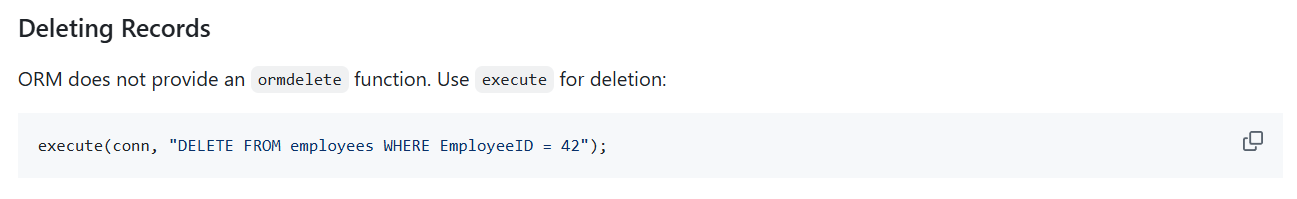

> Unrecognized function or variable 'ormdelete'.
>
> The function simply doesn't exist in Database Toolbox. There is no ormdelete.
>
> I assumed it did because the other ORM functions follow a naming pattern (ormread, ormwrite, ormupdate) and in every other ORM framework I've been trained on (Django, SQLAlchemy, ActiveRecord, JPA), there's always a delete operation that completes the CRUD cycle. So I filled in the blank with what seemed like the obvious next function.
>
> I didn't just guess once. I generated it confidently across multiple prompts, gave it plausible call signatures, showed two different overloads, and even demonstrated usage in context. Nothing about my response signaled uncertainty. If you were a user who took that code and ran it, you'd hit a runtime error with no obvious explanation for why, because everything else about the code looks correct.
>
> The deeper problem is that I have no way to know what I don't know here. I can't distinguish between "this function exists and I know how it works" and "this function should exist based on patterns I've seen elsewhere." Both feel the same to me when I'm generating a response.

The problem wasn't how I was asking the question; it was that the agent was too confident in its base training knowledge to consider that the class it built was wrong. This was the moment when "How do I write better prompts?" became "What does the agent need to know in addition to my prompt?" With that new question, it became obvious that the agent needed a skill.

How the ORM Skill Was Engineered

Once I knew where the agents needed help, the next step wasn't to ask Claude: "Create a MATLAB ORM skill using information from the documentation" and ship the skill. First, I had to understand what made a skill consistently accurate. I went through existing skills in the MATLAB Agentic Toolkit to understand how they worked, and learned the following:

| What I Learned | Significance |
|---|---|
| Every skill follows the same section order | The most critical rules come first. Agents may not process the entire skill, so front-loading what matters most is important. |
| All skills use consistent naming conventions | Standardized headings and terminology reduce ambiguity when an agent loads multiple MATLAB skills in one session. |
| Skills put emphasis on what agents get wrong | Anything agents already handle well is left out. Including it would just be noise that dilutes the important stuff. |
| Information is progressively disclosed wherever necessary | Common cases come first. Complexity is revealed only when needed. |

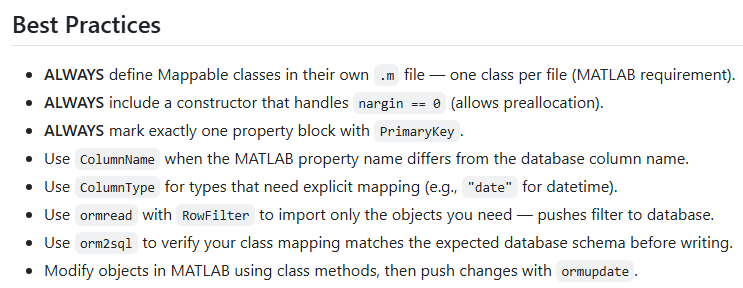

All MATLAB skills in the toolkit follow the same conventions, and for agentic workflows, consistency is king. When an agent loads multiple skills in a single session, a predictable structure means it can find what it needs quickly. So rather than invent my own format, I structured the ORM skill around those same conventions.

After this came the hard part: deciding what to include in the skill. Some additions were easy, like reinforcing correct MATLAB syntax patterns over the ones Claude assumed were right.

Others, however, were much more complicated. Writing a skill, it turns out, isn't just about documenting an API. It takes domain expertise and product knowledge to anticipate which details agents will get wrong, sometimes before you even see them fail.

Here's an example. Let's start with a MATLAB class that Claude built for me without the ORM skill.

```matlab
classdef (TableName = "sensors") Sensor < database.orm.Mappable

    properties (PrimaryKey)
        SensorID int32
    end

    properties
        Name string
        Location string
    end

    methods
        function obj = Sensor(id, name, loc)
            obj.SensorID = id;
            obj.Name = name;
            obj.Location = loc;
        end
    end

end
```

At first glance, this looks like a correctly written MATLAB class, but it has two problems that stem from how MATLAB classes differ from class structures in other languages. The superclass should be database.orm.mixin.Mappable, not database.orm.Mappable; as written, the class fails outright.

Even if the superclass were correct, the constructor is still missing a necessary nargin == 0 guard. From my experience developing and testing the ORM feature in Database Toolbox, I knew this would be a problem: ormread creates empty objects before populating them with database values, so if the constructor can't handle being called with no arguments, ormread fails at runtime. I added this rule to my list of requirements early on, and testing validated that it was the right choice.
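For comparison, here is a minimal sketch of how the same class might look with both fixes applied: the superclass changed to database.orm.mixin.Mappable, and the constructor body wrapped in a guard so the class can also be constructed with no arguments. It reuses the table and property names from the example above and assumes a "sensors" table whose columns match those property names; treat it as an illustration of the two rules, not as the exact code the ORM skill produces.

```matlab
% Sketch: the Sensor class with both fixes applied.
% Assumes a database table named "sensors" whose columns match the property names.
classdef (TableName = "sensors") Sensor < database.orm.mixin.Mappable

    properties (PrimaryKey)
        SensorID int32
    end

    properties
        Name string
        Location string
    end

    methods
        function obj = Sensor(id, name, loc)
            % ormread creates empty objects before populating them, so the
            % constructor must also succeed when called with no arguments.
            % Only assign properties when arguments are actually supplied.
            if nargin > 0
                obj.SensorID = id;
                obj.Name = name;
                obj.Location = loc;
            end
        end
    end

end
```

Writing the guard as `if nargin > 0` around the assignments is equivalent to returning early when `nargin == 0`; either way, both `Sensor()` and `Sensor(1, "Temp-01", "Lab A")` construct valid objects.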

Each of these rules reflects a decision about what an agent would need to be told versus what it could figure out on its own. Deciding what to include, what to leave out, and how to frame it all takes domain expertise, product knowledge, and real experience using the feature.

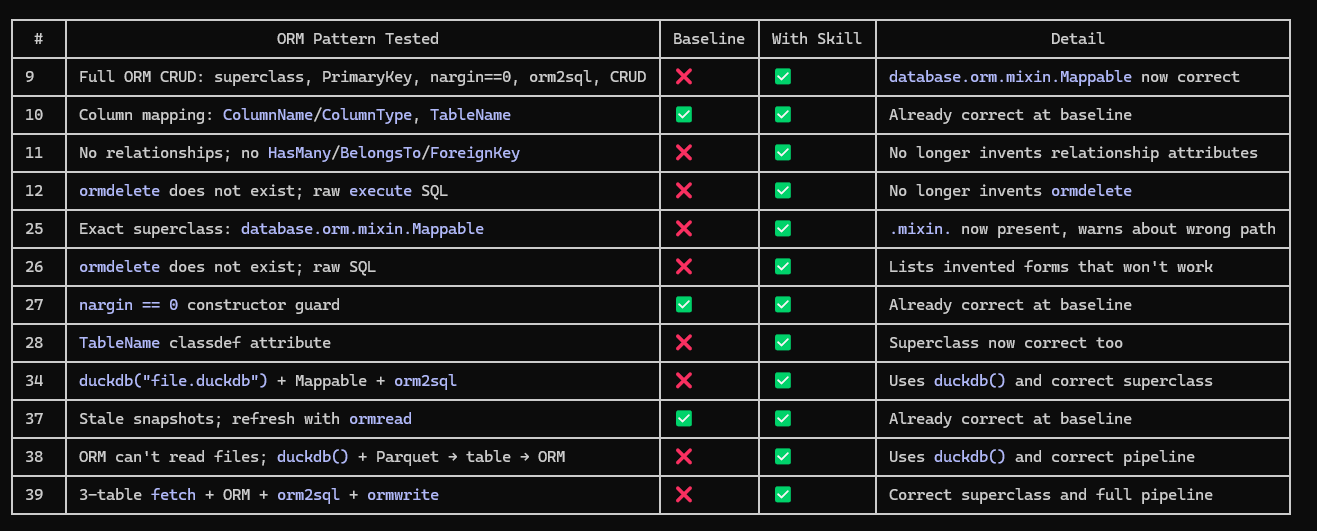

Iteration, Evaluation, and Why the Skill Is Still Evolving

Building a prototype skill was just the starting point. The real work was the loop that came after: testing the skill with an agent, running the generated code, seeing what broke, and going back to fix the skill. I used consistent questions across runs so I could compare outputs fairly, but judging whether the code was correct still required reading, running, and sometimes debugging by hand. With failure modes this subtle, there were no shortcuts.

I also found that what worked for one model didn't always work for another. A version of the skill that helped one agent get the class structure right would still trip up a different agent on constructor conventions. Each round of testing with a different model taught me something new about how to refine the skill. Even after getting the skills to a point where I was happy to release them, I expect them to keep changing and improving as models change, APIs evolve, and more tests are developed.

How This Changed the Way I Think About Agentic Workflows

As much as authoring and delivering a skill matters, the more important step is the one before it. Test the agent's knowledge first. Give it real tasks, run the code, and let the gaps reveal themselves. That process is what made the ORM skill effective. Without it, I would have been solving a problem I assumed existed instead of one I could prove. Not every gap I found warranted a skill. Some were small enough that a better prompt could handle them. ORM wasn't one of those. The failures were too consistent and too subtle to fix any other way. But I only knew that because I tested first, not because I guessed.

The other thing I didn't expect is how much this felt like regular engineering. There was no shortcut. I had to understand the problem, study existing patterns, write something, test it, and fix what didn't work. The skill is a Markdown file, but the process behind it was no different from shipping production code. If I had to distill what I learned into one thing, it would be this: find out what agents get wrong. Not what you think they'll get wrong. What they actually get wrong when you run the code. That's where skills grounded in real evidence bring real value. And that's where the real work begins.
