The Road to AI Certification: The importance of Verification and Validation in AI

The following post is from Lucas García, Product Manager for Deep Learning Toolbox.

Artificial Intelligence (AI) is rapidly transforming our daily lives, from personal assistants on our smartphones to chatbots on customer service websites. As AI technology advances, it is increasingly being used in industries such as healthcare, aerospace, and automotive, where it has the potential to revolutionize the way we work and live. However, as AI use rises in production environments, there is a growing need to explain, verify, and validate model behavior, especially in safety-critical situations.

Safety-critical industries such as aerospace, automotive, and healthcare require AI models to be highly reliable and trustworthy because incorrect or biased decisions can have severe consequences.

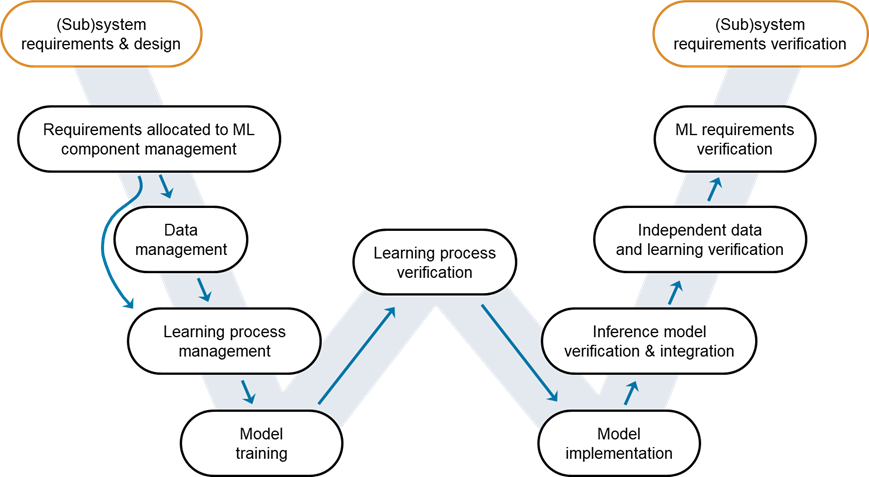

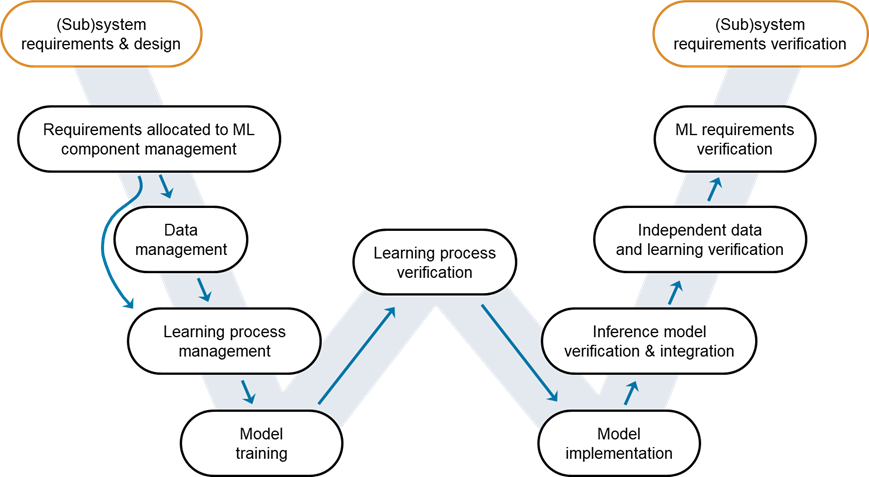

- In aerospace, incorrect AI decisions can lead to accidents or fatalities, compromising the safety of passengers and crew.

- In the automotive industry, faulty AI-enabled systems can lead to accidents or injuries, endangering the lives of drivers, passengers, and pedestrians.

- In healthcare, incorrect diagnoses or treatment plans made by AI systems can lead to patient harm or even death, jeopardizing the health and wellbeing of individuals.

Progress in AI Certification

To ensure the accuracy, reliability, and trustworthiness of AI-enabled systems in safety-critical industries, there has been significant progress in verifying AI through whitepapers, standards, and planning across industries. In the context of AI certification, verification and validation (V&V) techniques will play a vital role in demonstrating that the AI model meets the necessary standards for safety and reliability. By applying V&V techniques, organizations can systematically verify the behavior of the AI model, identify any potential errors or biases, and validate its performance against predefined criteria. V&V techniques for AI may include various approaches, such as testing the AI model against representative datasets, conducting simulations or experiments to assess its performance, analyzing the model's decision-making process, and ensuring that it operates within acceptable bounds. The ultimate goal is to provide evidence that the AI-enabled system has been thoroughly tested and meets the identified requirements. This helps build confidence in the system’s accuracy, reliability, and trustworthiness, especially in safety-critical applications. You can learn more about progress made in AI certification by industry in the last section of this blog post.

W-Shaped Development Workflow

Verification and validation of AI models are crucial in safety-critical industries to ensure the accuracy, reliability, and trustworthiness of AI-enabled systems. The progress made in developing standards and regulatory frameworks for AI in these industries is a significant step towards ensuring the safe and effective use of AI in various applications. It is important to note that traditional V&V workflows, such as the V-cycle, might not be sufficient for ensuring the accuracy and reliability of AI models. In response to this, adaptations of these workflows emerged to better suit AI applications, such as the W-shaped development process. One example of this adaptation (see Figure 1) is the work done by EASA and Daedalean [3]. This work identified the need for learning assurance: the planned and systematic actions taken to substantiate, with an adequate level of confidence, that errors in a data-driven learning process have been identified and corrected. The ultimate goal of learning assurance is to ensure that the system satisfies the applicable requirements and provides sufficient generalization guarantees. This involves looking at the learning algorithms and data used for training, rather than just the lines of code being written in traditional software development practices. Therefore, the treatment of data is key to ensuring that the performance measured during development holds when the system is deployed to the field.

Figure 1: W-shaped development process. Credit: EASA, Daedalean

It is important to recognize that the W-shaped development process can coexist with the V-cycle, which is frequently used for development assurance of non-AI components. Additionally, although it may look like a linear workflow, it is iterative in nature. Training-triggered actions may take us from model training or learning process verification back to requirements allocated to ML component management, data management, or learning process management. Similarly, implementation-triggered actions may take us from ML requirements verification or independent data and learning verification back to requirements allocated to ML component management.
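As a rough illustration of these feedback loops, the sketch below encodes them as a small lookup table. It uses only the stage names mentioned above; the dictionary layout and the function name `stages_to_revisit` are assumptions made for this example and are not part of the EASA and Daedalean material.

```python
# Feedback loops of the W-shaped process, using only the stage names from the
# text. The data layout and function name are illustrative assumptions.
FEEDBACK_LOOPS = {
    "training-triggered": {
        "from": ["model training", "learning process verification"],
        "back_to": [
            "requirements allocated to ML component management",
            "data management",
            "learning process management",
        ],
    },
    "implementation-triggered": {
        "from": [
            "ML requirements verification",
            "independent data and learning verification",
        ],
        "back_to": ["requirements allocated to ML component management"],
    },
}


def stages_to_revisit(current_stage):
    """Return (trigger, earlier stages) pairs that can be reached from current_stage."""
    return [
        (trigger, loop["back_to"])
        for trigger, loop in FEEDBACK_LOOPS.items()
        if current_stage in loop["from"]
    ]


if __name__ == "__main__":
    for trigger, targets in stages_to_revisit("model training"):
        print(f"{trigger}: may return to {', '.join(targets)}")
```

A stage that is not the source of any feedback loop, such as data management, simply yields an empty list.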

Although other variations of the W-shaped development process may be used and may include a higher level of detail, we will be using this version throughout the blog series for its simplicity and effectiveness in illustrating the adaptation of traditional workflows to AI applications.

Verify an Image Classification Network

Image classification networks are types of deep learning models that use convolutional neural networks (CNNs) to identify and categorize images based on their content. These networks have become increasingly popular in the field of AI due to their ability to accurately classify images and their potential to be used in a variety of real-world applications.

Figure 2: Verify an Image Classification Network

- In the automotive industry, image classification networks can be used to classify objects on the road, such as other vehicles, pedestrians, and animals. These networks can help self-driving cars make informed decisions, avoid collisions, and improve safety.

- In the aerospace industry, image classification networks can be trained to determine whether an image shows an airport and to identify features such as runways and taxiways, allowing for the automatic detection and mapping of airports. This technology can be used for a variety of applications, such as air traffic control, emergency response planning, and airport security.

- In the medical industry, image classification networks can be used to classify X-ray images to assist medical diagnosis. CNNs can detect patterns and anomalies in the images, helping doctors diagnose diseases and conditions, such as pneumonia, lung cancer, and bone fractures.
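One property that can be checked when verifying a classifier is robustness: whether the predicted class stays the same for every input within a small perturbation of a given image. The sketch below shows the idea for a toy linear classifier, where the worst case can be computed exactly. It is an illustration under stated assumptions: the function name `is_certified_robust`, the L-infinity perturbation model, and the linear model are all chosen for this example and do not represent the network or tooling used in this blog series. Certifying a real CNN requires dedicated verification methods.

```python
import numpy as np


def is_certified_robust(weights, bias, x, epsilon):
    """Check whether a linear classifier keeps its prediction for every input
    within an L-infinity ball of radius epsilon around x.

    Assumed shapes (hypothetical toy setup):
      weights: (num_classes, num_features)
      bias:    (num_classes,)
      x:       (num_features,), e.g. a flattened image
    """
    scores = weights @ x + bias
    predicted = int(np.argmax(scores))
    for other in range(weights.shape[0]):
        if other == predicted:
            continue
        diff = weights[predicted] - weights[other]
        margin = scores[predicted] - scores[other]
        # Worst case over the ball: every feature moves by epsilon in the
        # direction that shrinks the margin, costing epsilon * ||diff||_1.
        worst_case_margin = margin - epsilon * np.sum(np.abs(diff))
        if worst_case_margin <= 0:
            return False  # some perturbation could change the prediction
    return True


if __name__ == "__main__":
    weights = np.array([[1.0, 0.0], [0.0, 1.0]])
    bias = np.zeros(2)
    x = np.array([1.0, 0.2])
    print(is_certified_robust(weights, bias, x, epsilon=0.1))
    print(is_certified_robust(weights, bias, x, epsilon=0.5))
```

In this toy case the margin between the two classes is 0.8 and each unit of perturbation can reduce it by at most 2, so the prediction is provably stable for `epsilon=0.1` but not for `epsilon=0.5`.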

What’s next?

In coming blog posts, I’ll be going through the entire W-shaped Development Workflow for a deep learning model that identifies whether a patient is suffering from pneumonia or not by examining chest X-ray images. The model needs to be not only accurate, but also extremely robust since people’s lives are at stake. However, it’s worth noting that the techniques, workflows, and best practices we will be discussing for this example are also applicable to the other examples we’ve highlighted here. Stay tuned.

Learn more about AI Certification progress made by each Industry

- In the aerospace industry, the EUROCAE WG-114 / SAE G-34 joint international committee is expected to release the new Process Standard in late 2024, which will set the standard for the development and certification/approval of aeronautical safety-related products implementing AI (ARP6983) [1]. In April 2021, this group published the Aerospace Information Report Artificial Intelligence in Aeronautical Systems: Statement of Concerns (AIR6988) [2]. Additionally, EASA has published a concept paper titled “First usable guidance for Level 1 & 2 machine learning applications” [3] and the EASA Artificial Intelligence Roadmap 2.0 [4].

- In the automotive industry, the ISO Publicly Available Specification 8800 on Road Vehicles – Safety and Artificial Intelligence [5] is a work-in-progress standard that defines safety-related properties and risk factors impacting the insufficient performance and malfunctioning behavior of Artificial Intelligence (AI) within a road vehicle context. It describes a framework that addresses all phases of the development and deployment lifecycle. It will complement the existing ISO 26262:2018 and SOTIF (ISO 21448:2022) standards for the development of AI-based systems and components, and will provide guidance for the AI-based software lifecycle.

- In the healthcare industry, the FDA has released its first AI/ML-based software as a medical device action plan [6], which outlines a regulatory framework for AI-enabled medical devices. Moreover, the FDA recently issued a draft guidance to further develop a regulatory approach tailored to artificial intelligence/machine learning (AI/ML)-enabled devices to increase patients’ access to safe and effective AI/ML-enabled devices, in order to protect and promote public health. The draft guidance describes a least burdensome approach to support the iterative improvement of ML-enabled device software functions while ensuring their safety and effectiveness.

References

[1] Process Standard for Development and Certification/Approval of Aeronautical Safety-Related Products Implementing AI. ARP6983. (https://www.sae.org/standards/content/arp6983/)

[2] Artificial Intelligence in Aeronautical Systems: Statement of Concerns. AIR6988. (https://www.sae.org/standards/content/air6988/)

[3] EASA Concept Paper: First usable guidance for Level 1 & 2 machine learning applications. February 2023. (https://www.easa.europa.eu/en/downloads/137631/en)

[4] EASA Artificial Intelligence Roadmap 2.0. May 2023. (https://www.easa.europa.eu/en/downloads/137919/en)

[5] ISO/AWI PAS 8800 Road Vehicles – Safety and artificial intelligence. (https://www.iso.org/standard/83303.html)

[6] Artificial Intelligence and Machine Learning (AI/ML) Software as a Medical Device Action Plan. FDA. January 2021. (https://www.fda.gov/media/145022/download)
