Guest Writer: Shweta Pujari. Shweta Pujari is the Product Manager for AI and GenAI. In this blog post, she joins me to demonstrate how to use the new MATLAB MCP Client to build agentic... read more >>

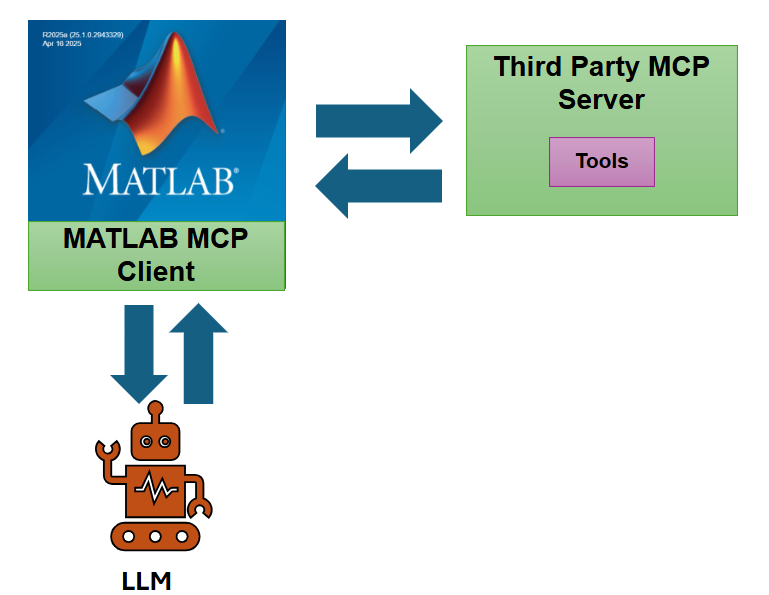

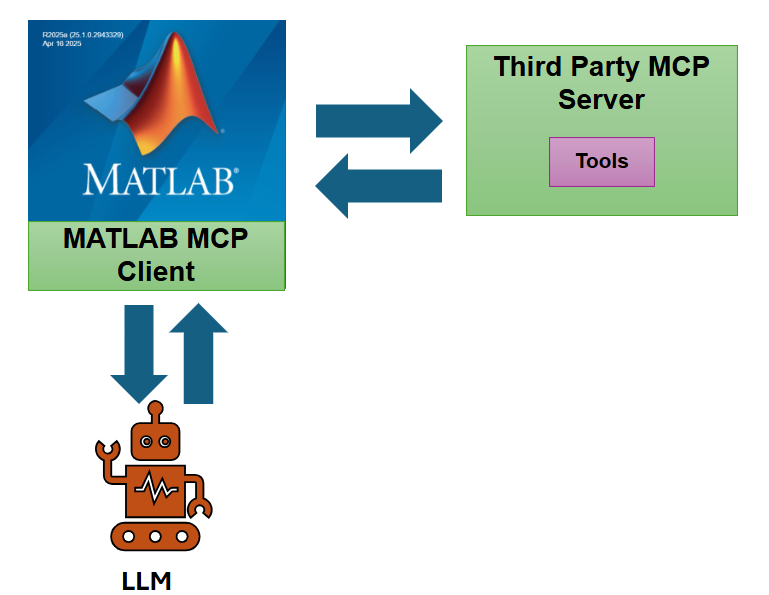

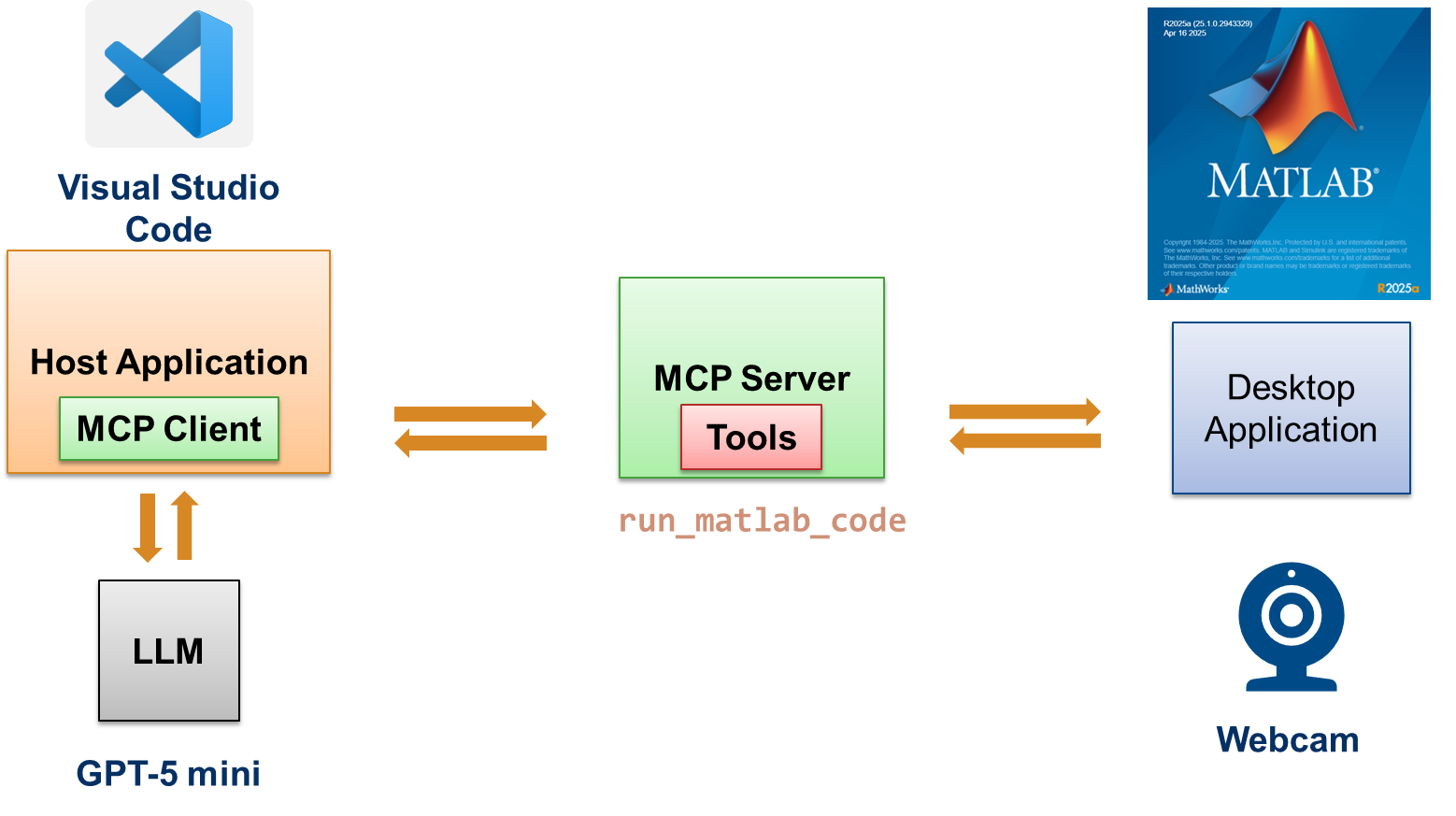

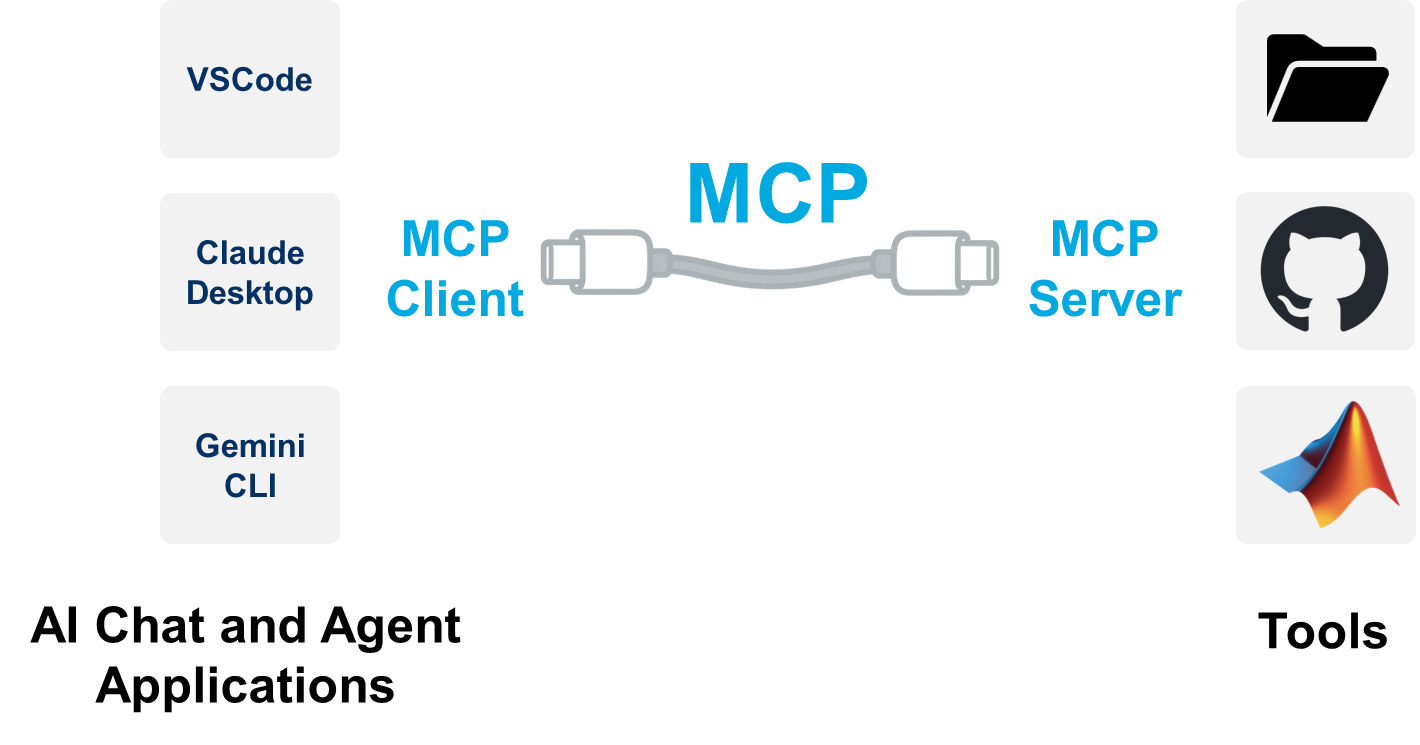

Guest Writer: Jacob George. Jacob George is the Product Manager for Data Acquisition Toolbox, Image Acquisition Toolbox, and ThingSpeak. In this blog post, he discusses MATLAB MCP Core... read more >>

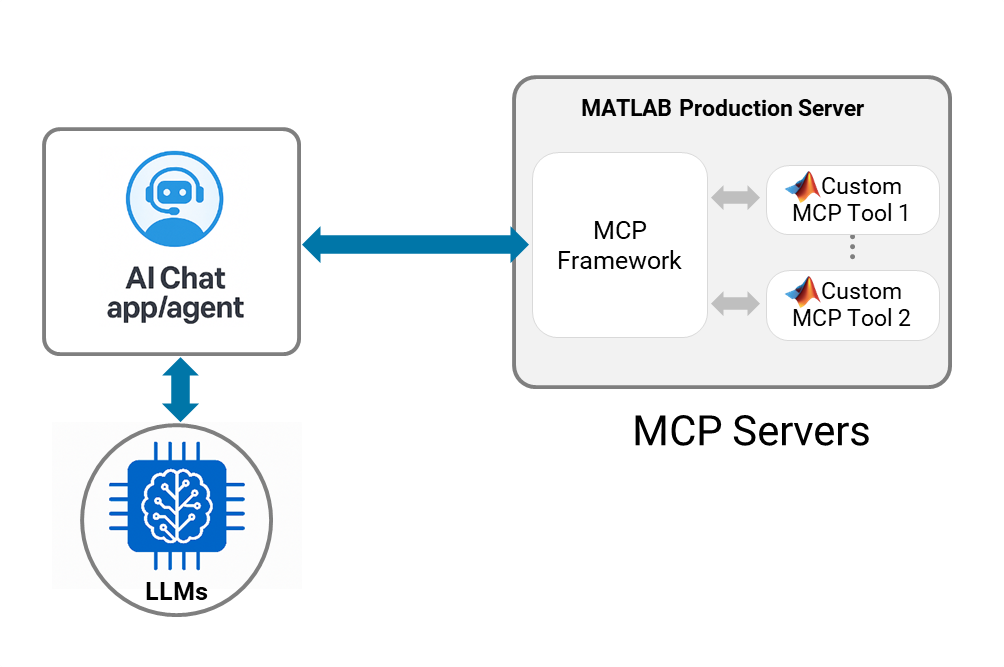

This Monday, we are releasing the new MCP framework for MATLAB Production Server on GitHub: https://github.com/matlab/mcp-framework-matlab-production-server Guest Writer: Lawrence... read more >>

This blog post is co-authored with Akshay Paul, the product manager for the new MATLAB MCP Core Server, which was released on Friday, October 31, on... read more >>

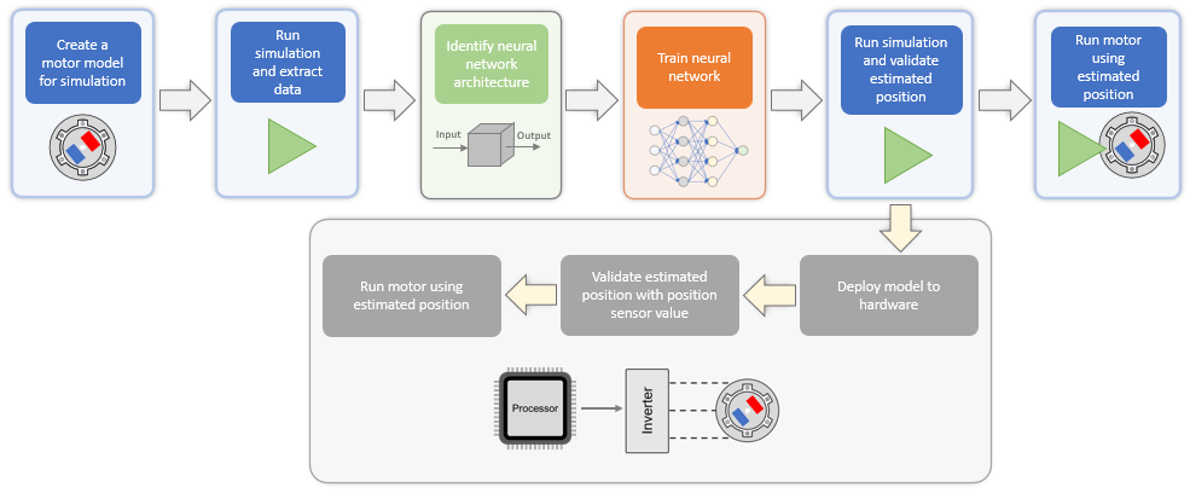

I am a MATLAB user. Even though I am familiar with the idea of Control Systems, I do need some help from my colleagues to work on projects that involve embedding AI into hardware. This example from... read more >>

This blog post is from Cory Hoi, an engineer in the MathWorks Engineering Development Group. In our previous blog post, we used the Video Labeler app to segment both the tennis ball and the... read more >>

This blog post is from Cory Hoi, an engineer in the MathWorks Engineering Development Group. With the rapid advancement of artificial intelligence (AI), harnessing its power is now more... read more >>

MATLAB Online is a very convenient platform for working with MATLAB and Python® together. And I am not only saying this because I was part of the MATLAB Online product team until fairly recently (ok,... read more >>

As you might have read from my dear colleague Mike on the MATLAB blog, MATLAB now slots neatly into Google® Colab. Google Colab is a great sandbox for demos, workshops, or quick experiments.... read more >>

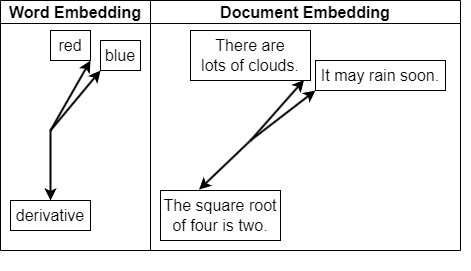

Natural language is the foundation of human communication, but it’s unstructured and full of nuance. Synonyms convey similar ideas, a single word can carry multiple meanings, and language... read more >>

These postings are the author's and don't necessarily represent the opinions of MathWorks.