FFT Stories

My last meeting of the day ended just a little while ago, and I was starting to think seriously about figuring out a blog post for this week. What to write about? That's when I happened to see that Cleve posted just yesterday about the FFT. Ah ha! I'll write about that, too (in honor of National Blog About the FFT Week).

Contents

To Recurse or Not?

I have three little sort-of stories to tell, the first of which was inspired directly by Cleve's post. Cleve described a very compact, elegant implementation of the divide-and-conquer idea that's central to fast Fourier transform algorithms. Here's the key code fragment of Cleve's textbook function ffttx:

% Recursive divide and conquer

k = (0:n/2-1)';

w = omega .^ k;

u = ffttx(x(1:2:n-1));

v = w.*ffttx(x(2:2:n));

y = [u+v; u-v];Function ffttx calls itself recursively, twice. Each recursive call is on half of the original input values. Then ffttx does a litte extra computation at the end to produce the final result.

Like Cleve, I really love this little bit of code. But it makes me think back to all the FFT code that I saw back when I was first learning digital signal processing. (That would be the mid- to late-1980s.) Here's a typical textbook sample from the 1989 edition of Discrete-Time Signal Processing by Oppenheim and Schafer):

This code is typical for the time. The focus is on doing the computation in-place and using as few multiplies as possible. One thing you don't see here: explicit recursion! The function DITFFT does not call itself, either directly or indirectly. That was typical for the time. No one wanted to use the memory to make copies of the two half-length arrays, and no one wanted to incur the speed penalty of many recursive function calls. Another typical thing you see in this code is something charmingly called bit-reversed sorting, but that's a topic for another day (maybe).

Anyway, my point is that modern FFT implementations built for today's computer architectures often do make use of explicit recursion. The additional cost of dividing the input array into two or more smaller copies is more than offset by the gains of more cache-friendly execution as the divide-and-conquer problems get smaller and smaller.

Butterflies and Laws

My second little story was inspired by one line of code that Cleve posted:

y = [u+v; u-v];

In the case where u is x(1) and v is x(2), then the computation above is just the 2-point DFT of x.

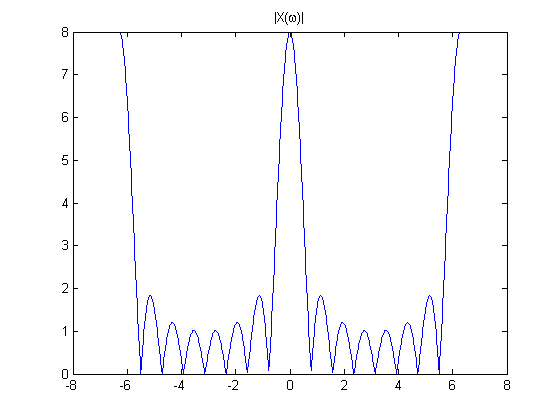

A signal flow diagram for it looks like this (also from Discrete-Time Signal Processing):

Digital signal processing experts have for decades referred to this little graph as a "butterfly diagram" because of its appearance.

Well, to make a little extra money for myself in grad school, I wrote a couple of chapters of the solutions manual for Discrete-Time Signal Processing. One of the chapters covered FFTs. This effort left me completely fed up with drawing butterfly diagrams and counting multiplies. One day I posted the following chart outside my cubicle:

Yes, digital signal processing people even call that mess above a "butterfly diagram." We're a weird bunch.

That butterfly diagram indirectly leads me to my third little FFT story.

The Slowest Way (Ever?) to Fill a Vector with NaNs

About ten years ago, a tech support case was forwarded to me. A customer complained that MATLAB was very slow in computing the FFT of one specific vector. Other vectors of the same length would go really fast, but not this one.

So I loaded the customer's MAT-file containing the vector and started running FFTs on it. To my surprise, it really was slow! I scratched my head. Then I looked at the output values, and I was even more surprised to see that they were all NaNs! Strange! What was the point? And was this related to the speed issue?

I looked at the long input vector provided by the customer, and I found that just one of the input values was NaN.

Light bulb!

Take a look again at that complicated butterfly diagram above. Notice that every output value depends on every input value. That's true in general for FFT computations of any length. With the way NaNs propagate through arithmetic operations, that implies that just one NaN in the input vector means that the output of fft will always be completely filled with NaNs.

That was related to the speed issue, as it turned out. NaN calculations often are executed in a less-well optimized part of the CPU, or even in software. Lots and lots of NaN calculations bogged down this particular customer's problem.

FFTs are a never-ending source of fun and mystery!

I have written quite a few blog posts about Fourier transform theory and algorithms. You can see them all in the Fourier transforms category.

PS. If you have ideas for even more fun ways to create a vector of NaNs, be sure to add them to the comments on this post.

댓글

댓글을 남기려면 링크 를 클릭하여 MathWorks 계정에 로그인하거나 계정을 새로 만드십시오.