About that dress …

This afternoon my wife looked at me with an expression indicating she was convinced that I had finally gone completely and irrevocably crazy.

I think it was because I was carrying from room to room a flashlight, a candle, a lighter, and a chessboard scattered with colored craft sticks and puff balls.

"It's about that dress," I said.

Yes, that dress. #TheDress, as it is known on Twitter.

Three nights ago I idly opened Twitter to see what was up. The Internet was melting down over two things: (a) llamas and (b) a picture of a dress. (I have nothing more to say here about llamas.)

This is the picture:

Some people see this dress as blue and black. Others see it as white and gold. Neither group can understand why the other sees it differently.

By Friday afternoon, a myriad of explanations had popped up online and in various news outlets. I found most of these initial attempts unsatisfying, although some better explanations have been published online since then.

Initially I didn't want to write a blog post about this, because (as I often proclaim) color science makes my brain hurt. But I do know a little about how color scientists think, having worked with several of them, read their papers and books, and implemented their methods in software. So here is my interpretation of this unusual visual phenomenon. It's in three parts:

- The influence of illumination

- The phenomenon of color constancy

- How two different people could arrive at dramatically different conclusions about the color of that dress

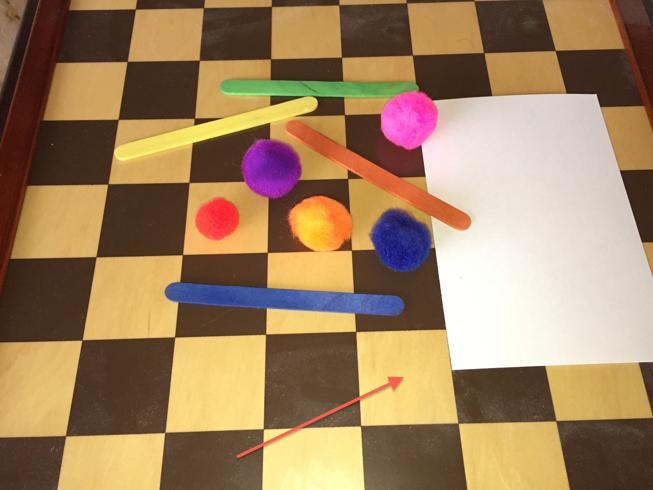

Let's start with the influence of illumination. Here is a small portion of a picture that I took today.

"Sage green," my wife said.

And here's a portion of a different picture.

"That's yellow," came the answer.

The truth: these two colors come from the same location on the same object. Here are the two original images with the locations marked.

Image A

Image B

The chessboard and other objects in these pictures are the same. The difference between the two images is caused entirely by the different light source used for each one. Just for fun, here are the colors of the puff ball in the upper right from two different pictures. (Remember, these are pixels from the exact same spot on the same object!)

The color of the light arriving at the camera depends not only on the color of the object, but also on the nature of the illumination. As you can see in the colored patches above, changing the illumination can make a big difference. So you cannot definitively determine the dress color solely from close examination of the digital image pixels.
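To make this concrete, here is a deliberately simplified model, written in Python. It assumes that each color channel the camera records is just the surface's reflectance in that channel multiplied by the light's intensity in that channel. Real cameras integrate over the full spectrum, so treat this as a sketch, not a colorimetric model. The surface and light values are made up for illustration; they are not measured from my pictures.

```python
# Simplified per-channel image formation (an assumption for illustration):
#   observed = reflectance * illuminant, channel by channel, values in [0, 1].

def observe(reflectance, illuminant):
    """Return the RGB triple reaching the camera for one surface under one light."""
    return tuple(round(r * l, 3) for r, l in zip(reflectance, illuminant))

# Hypothetical surface: a light, slightly yellowish-green material.
surface = (0.8, 0.9, 0.6)

# Hypothetical illuminants as (R, G, B) intensities, not measured values.
warm_light = (1.0, 0.8, 0.4)   # candle-like: strong red, weak blue
cool_light = (0.6, 0.8, 1.0)   # shade-like: weak red, strong blue

print(observe(surface, warm_light))  # → (0.8, 0.72, 0.24)
print(observe(surface, cool_light))  # → (0.48, 0.72, 0.6)
```

Same surface, two lights, two quite different pixel colors: the first leans yellow, the second leans toward a cool green.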

Look at Image A again. What is the color of the index card?

Most people would call it white. If you look at just a chunk of pixels from the center of the card, though, it looks like a shade of green.

People have an amazing ability to compensate automatically and unconsciously for different light sources in a scene that they are viewing. If you looked at the same banana under a bright fluorescent light, in candlelight, and in the shade outdoors under a cloudy sky, you would see it as the same color, yellow, each time. That is true even though the spectrum of the light coming from the banana is significantly different in these three scenarios. This ability is called color constancy.

Our ability to compensate accurately for illumination depends on having familiar things in the scene we are viewing: the sky, the pavement, the walls, the grass, the skin tones of a face. Almost always, something in the scene anchors the brain's mechanism for compensating for the illumination.
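Software has a much cruder analogue of this anchoring: von Kries-style white balancing. If something in the scene is known to be white (like the index card in Image A), its observed color is an estimate of the illuminant, and dividing every pixel by it, channel by channel, cancels the light. The Python sketch below assumes the same simplified per-channel model (observed = reflectance times illuminant); the banana, card, and light values are all hypothetical, and this is not a claim about how the brain actually does it.

```python
# Simplified per-channel model (assumption): observed = reflectance * illuminant.
def observe(reflectance, illuminant):
    return tuple(r * l for r, l in zip(reflectance, illuminant))

def white_balance(pixel, white_ref):
    """Von Kries-style correction: divide each channel by the observed
    color of a surface assumed to be white."""
    return tuple(round(p / w, 3) for p, w in zip(pixel, white_ref))

white_card = (1.0, 1.0, 1.0)   # hypothetical ideal white reflectance
banana = (0.9, 0.8, 0.2)       # hypothetical yellow reflectance

# Hypothetical lights: candle-like, shade-like, neutral.
lights = [(1.0, 0.8, 0.4), (0.6, 0.8, 1.0), (0.9, 0.9, 0.9)]

for light in lights:
    card_pixel = observe(white_card, light)    # the "white" card as the camera sees it
    banana_pixel = observe(banana, light)      # the banana as the camera sees it
    print(white_balance(banana_pixel, card_pixel))  # → (0.9, 0.8, 0.2) each time
```

The raw banana pixels differ under each light, but once the card anchors the estimate of the illuminant, the corrected banana comes out the same yellow every time.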

Now we come back to the photo of the dress. The photo completely lacks recognizable cues to help us compensate for illumination. Our brain tries to compensate anyway, in spite of the missing cues. The different reactions of people around the world suggest that there are two dramatically different solutions to the problem.

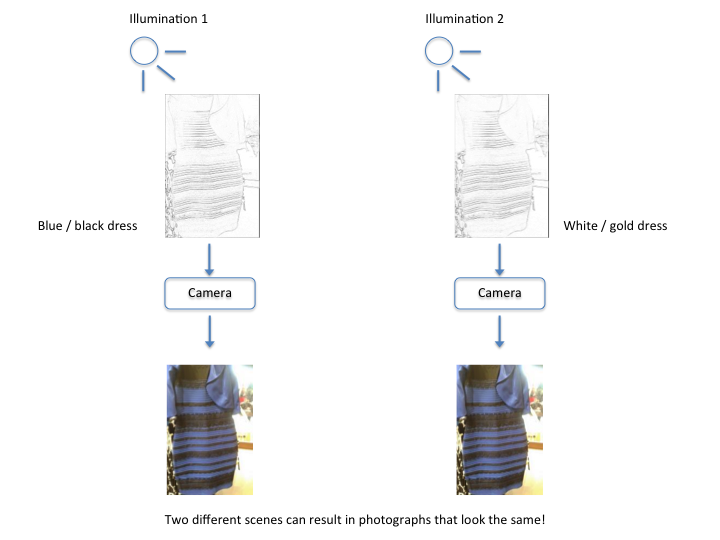

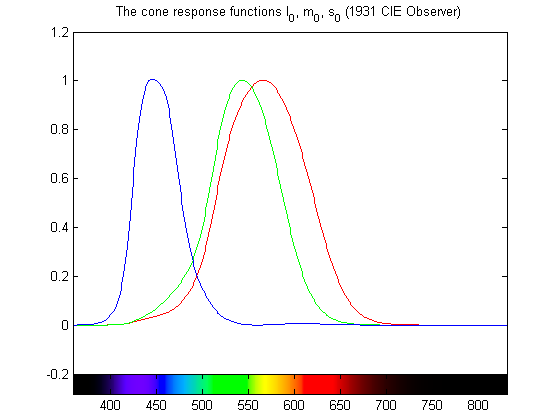

Consider the diagram below. It illustrates two scenes. In the first scene, on the left, a hypothetical blue and black dress is illuminated by one source. In the second scene, on the right, a hypothetical white and gold dress is illuminated by a second source. By coincidence, both scenes produce the same light at the camera, and therefore the two photographs look the same.

Note: I tweaked the diagram above at 02-Mar-2015 14:50 UTC to clarify its interpretation.

For some people, the visual system jumps to illumination 1, leading them to see a blue and black dress. For others, it jumps to illumination 2, leading them to see a white and gold dress.
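Here is that ambiguity in miniature, again under a simplified per-channel model in which each recorded channel is reflectance times illuminant (an assumption). Given one observed pixel, you can only recover the surface color after guessing the illuminant, and two different guesses give two different answers. The pixel and both illuminant values are hypothetical; I did not sample them from the actual dress photo.

```python
def infer_reflectance(pixel, assumed_illuminant):
    """Invert observed = reflectance * illuminant for a guessed illuminant,
    clipping at 1.0 because a surface cannot reflect more than 100% of the light."""
    return tuple(round(min(p / l, 1.0), 3) for p, l in zip(pixel, assumed_illuminant))

dress_pixel = (0.45, 0.5, 0.7)      # hypothetical bluish pixel

illumination_1 = (1.0, 0.95, 0.85)  # hypothetical bright, warm light
illumination_2 = (0.5, 0.55, 0.75)  # hypothetical dim, bluish shaded daylight

print(infer_reflectance(dress_pixel, illumination_1))  # → (0.45, 0.526, 0.824): blue
print(infer_reflectance(dress_pixel, illumination_2))  # → (0.9, 0.909, 0.933): near white
```

Under the warm guess, the inferred surface stays clearly blue; under the dim, bluish guess, all three channels come out around 0.9, which reads as white. The pixel never changed, only the assumption about the light.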

On Friday afternoon, I heard a report that many people in the white and gold camp, when they see a version of the photograph that includes a woman's face, immediately change their perception of the dress to blue and black. This perceptual shift persists even when they view the original photograph again. This demonstrates that the presence of an object with a familiar color can significantly alter our perception of colors throughout the scene.

If you zoom way in and examine the pixels on the dress in the original image, you'll see that they are blue.

So what kind of illumination scenario could cause people to perceive this as white? I asked Toshia McCabe, a MathWorks writer who knows more than I do about color and color systems. She thinks the dress picture was edited from another one in which the dress was underexposed. As a result, the "blue coincidentally looks like a white that is in shadow (but daylight balanced)." In other words, light from a white object in shadowed daylight can arrive at the camera as blue light. So if your eye sees blue pixels but your brain concludes that the picture was taken in shaded daylight, your brain might decide that you are looking at a white dress.

For the record, my wife sees a white and gold dress on a computer monitor, but she sees blue and brown when it is printed. I see blue and brown.

Enjoy!
