The bucket list

While we are talking about buckets, it makes sense for me to introduce you to another type of bucket. This one contains objects and allows retention of massive amounts of unstructured data.

It is 2019, and in this age of the cloud there are many object storage systems available to users. In this post, I will focus on one of them: Amazon S3™ (Simple Storage Service), which uses the same scalable storage infrastructure that Amazon uses to run its own platform.

MATLAB and Simulink developers can already use these services with our shipping R2018b/19a products, as documented, by leveraging the datastore function, which allows easy read/write access to data stored on S3 (among other forms of remote data).

Specialized forms of this datastore function allow users to work directly with images (ImageDatastore), files (FileDatastore), spreadsheets (SpreadsheetDatastore) or tabular text (TabularTextDatastore). Typically, once a user has configured MATLAB with the access key ID, secret access key and region, the datastore will provide users an abstraction to the data pointed at by the internationalized resource identifier (IRI).
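As a minimal sketch of that high-level route, the snippet below points an ImageDatastore at an S3 location. The bucket and folder names are hypothetical placeholders. It assumes the access key ID and secret access key are supplied through the AWS_ACCESS_KEY_ID and AWS_SECRET_ACCESS_KEY environment variables; the region is also read from an environment variable, whose name has varied between releases, so check the documentation for your release. The remote read is skipped when no credentials are set.

```matlab
% Placeholder location: the bucket and folder names are hypothetical
location = 's3://com-example-mybucket/images';

% Credentials are expected in environment variables, set outside this
% script or with setenv before this point
hasCredentials = ~isempty(getenv('AWS_ACCESS_KEY_ID')) && ...
                 ~isempty(getenv('AWS_SECRET_ACCESS_KEY'));

if hasCredentials
    % The datastore hides the transfer; read returns the first image
    imds = imageDatastore(location);
    img = read(imds);
    disp(size(img));
else
    disp('AWS credentials not set; skipping the remote read.');
end
```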

Abstraction is nice, but developers require finer control over what their code does with data, and for good reason. They need to declare and attach permissions, control the encryption of the data, define who can and cannot access the data, and exercise their rights to create, read, update and delete (CRUD) content. Many of these actions are required to satisfy a variety of business, legal and technical requirements that go far beyond mere analysis. Additionally, developers can extend the tooling and use it to debug their data access workflows.

At this point, with no further ado, permit me to introduce you to an open source *prototype* MATLAB client released on github.com to allow MATLAB developers to use Amazon S3. The easiest way to get started is to clone this repository and all required dependencies using:

git clone --recursive https://github.com/mathworks-ref-arch/mathworks-aws-support.git

The repository contains MATLAB code that leverages the AWS SDK for Java. This package uses certain third-party content which is licensed under separate license agreements. See the pom.xml file for third-party software downloaded at build time.

Build the underlying Java artifacts

You can build the underlying Java SDK using Maven and the process is straightforward:

$ cd matlab-aws-s3/Software/Java/

$ mvn clean package

On a successful build, a JAR archive is packaged and made available to MATLAB by running the startup.m file:

>> cd matlab-aws-s3/Software/MATLAB

>> startup

Authenticate against the S3 service

You can do this in a number of ways including the use of the Amazon CLI (which you can call directly from MATLAB), token service (STS for time-limited or multi-factor based authentication), environment variables, etc. For the purposes of demonstration, and to keep it simple I will use a static file on the MATLAB path, with credentials from the Amazon Identity and Access Management (IAM) service:

{

"aws_access_key_id": "REDACTED",

"secret_access_key" : "REDACTED",

"region" : "us-west-1"

}
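Before handing a file like this to the client, it can help to check that it parses and carries the three fields shown above. The following is a rough sketch using MATLAB's built-in jsonencode and jsondecode; the file name and its location in the temporary folder are hypothetical, chosen only so the example runs on its own, and are not what the client necessarily expects.

```matlab
% Hypothetical file name and location, used only for this illustration
credFile = fullfile(tempdir, 'credentials_example.json');

% Write a credentials file with the same three fields as above
creds = struct('aws_access_key_id', 'REDACTED', ...
               'secret_access_key', 'REDACTED', ...
               'region', 'us-west-1');
fid = fopen(credFile, 'w');
fprintf(fid, '%s', jsonencode(creds));
fclose(fid);

% Read it back and confirm that every expected field is present
loaded = jsondecode(fileread(credFile));
requiredFields = {'aws_access_key_id', 'secret_access_key', 'region'};
missing = requiredFields(~isfield(loaded, requiredFields));
if ~isempty(missing)
    error('Credentials file is missing: %s', strjoin(missing, ', '));
end

% Remove the temporary file
delete(credFile);
```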

Create a bucket

To create a bucket, you can use the API interface:

% Create the client

s3 = aws.s3.Client()

s3.initialize();

% Create a bucket, note AWS provides naming guidelines

bucketName = 'com-example-mybucket';

s3.createBucket(bucketName);
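The naming guidelines mentioned in the comment can be partly checked before calling the service. The sketch below covers only a subset of the rules: a length of 3 to 63 characters, only lowercase letters, digits, dots and hyphens, and a letter or digit at each end. The helper name isValidBucketName is my own and is not part of the client.

```matlab
% Hypothetical helper, not part of the client: checks a subset of the
% AWS bucket naming rules (length and permitted characters only)
isValidBucketName = @(name) ~isempty(regexp(name, ...
    '^[a-z0-9][a-z0-9.-]{1,61}[a-z0-9]$', 'once'));

candidates = {'com-example-mybucket', 'My_Bucket', 'ab'};
for k = 1:numel(candidates)
    fprintf('%-22s valid: %d\n', candidates{k}, isValidBucketName(candidates{k}));
end
```

A name that passes this check can still be rejected by the service, for example because it is already taken, since bucket names are shared across all AWS accounts.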

List existing buckets

You can list all existing buckets:

% Get a list of the buckets

bucketList = s3.listBuckets();

bucketList =

CreationDate Name Owner OwnerId

______________________________ __________________________ _______________ _____________

'Thu Mar 02 02:13:19 GMT 2018' 'com-example-mybucket' 'aws_test_dept' '[REDACTED]'

'Thu Jun 08 18:46:37 BST 2018' 'com-example-my-test-bucket' 'aws_test_dept' '[REDACTED]'

I did warn you that this post was about bucket lists!

Storing data

Storing data from MATLAB into cloud-based buckets on Amazon S3 becomes simple.

% Upload a file

% Create some random data

x = rand(100,100);

% Save the data to a file

uploadfile = [tempname,'.mat'];

save(uploadfile, 'x');

% Put the .MAT file into an S3 object called 'myobjectkey' in the bucket

s3.putObject(bucketName, uploadfile, 'myobjectkey');

Fetching data

Similarly, you can pull objects down into MATLAB from S3 buckets as follows.

% Download a file

s3.getObject(bucketName,'myobjectkey','download.mat');

Clean up

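You get the idea. The MATLAB interface lets you control how data is persisted in cloud object storage on Amazon S3. Your data can be deleted in a similar way, and the bucket can then be cleaned up. S3 only deletes empty buckets, so remove the object first (the deleteObject call here is assumed to mirror the putObject and getObject calls above).

s3.deleteObject(bucketName, 'myobjectkey');

s3.deleteBucket(bucketName);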

Control, control, control the access

With the interface, you can now exert finer control over everything from who gets to access the data to what rights they have to read and write it.

% Create a canned ACL object, in this case AuthenticatedRead, and apply it

% CAUTION: granting permissions to AuthenticatedUsers applies the permission

% to anyone with an AWS account, so this bucket will become readable to the wider Internet

myCannedACL = aws.s3.CannedAccessControlList('AuthenticatedRead');

s3.setBucketAcl(bucketName,myCannedACL);

Implications

- The MATLAB tooling builds on top of the SDK for Java released by Amazon. The SDK itself is supported by many teams of active developers and is updated frequently with new features and fixes. To give you a sense of how quickly these SDKs evolve, in a single month a total of 1,508 files changed, with 215,128 additions and 79,774 deletions. The implication of releasing the MATLAB tooling on GitHub is that MATLAB users can now keep pace with these rapid developments by rebuilding the SDK and using the latest versions with MATLAB.

- Object storage systems offer great value: cheap storage coupled with high durability (99.999999999%, i.e. 11 9's). As a result, the storage costs associated with a project begin to plummet, and users have stopped asking what to capture and store. The conversation now centers on how to analyze all this data, given that it is practical to capture everything and store it easily. That, in turn, leads us to Big Data (an oft-overused and hyped buzzword), a topic for another day.

- The API presented to a MATLAB user is as close as possible to the underlying API offered to Java developers. By maintaining this fidelity, MATLAB users can leverage the vast resources offered by Amazon, along with the distilled knowledge in places like StackOverflow, and apply those techniques directly to their MATLAB workflows.
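To put the 11 9's durability figure from the list above in perspective, here is a back-of-the-envelope calculation. It treats the figure as an annual per-object survival probability, and the object count of ten million is a hypothetical number picked for illustration.

```matlab
% 11 nines of durability leaves an annual loss probability of 1e-11 per
% object; written directly rather than as 1 - 0.99999999999 to avoid
% floating-point rounding error
annualLossProbability = 1e-11;

% Hypothetical number of stored objects
numObjects = 1e7;

expectedLossesPerYear = numObjects * annualLossProbability;
yearsPerLostObject = 1 / expectedLossesPerYear;

fprintf('Expected losses per year: %.1e\n', expectedLossesPerYear);
fprintf('Roughly one object lost every %.0f years\n', yearsPerLostObject);
```

Under these assumptions, the expected loss works out to about one object every 10,000 years.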

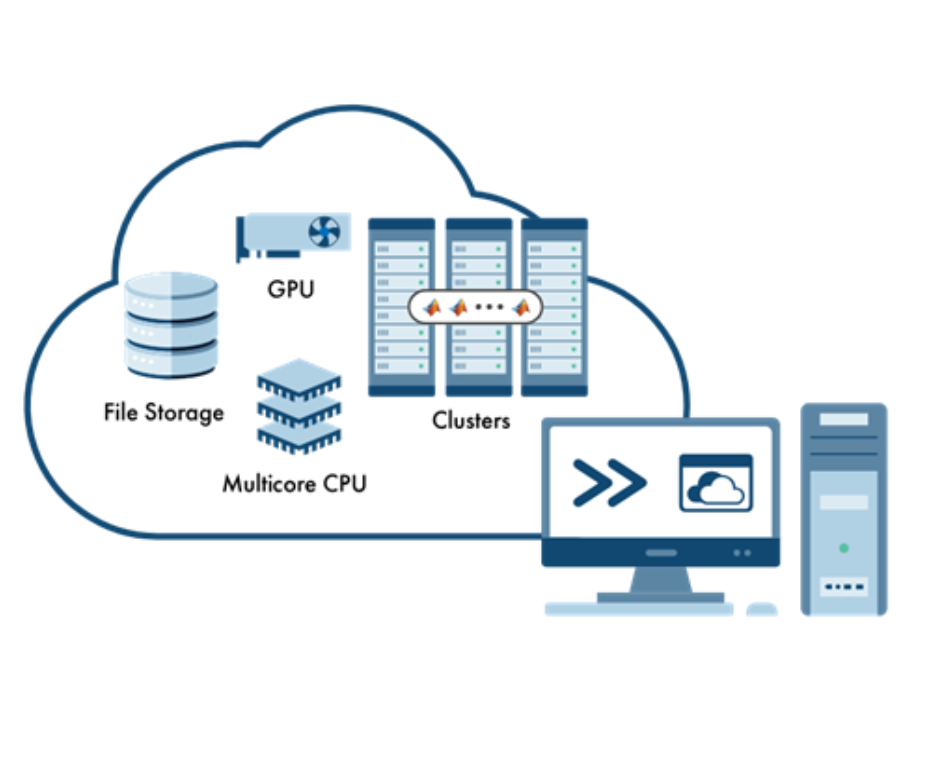

In closing, interfaces such as the one discussed in this post make it easy for you, the developer, to leverage the promise of practically unlimited cloud-based storage to supercharge your workflows within your existing and familiar MATLAB and Simulink tools. Most importantly, none of this prevents you from using the high-level interfaces in the same code where they are more appropriate, giving you a wider choice of approaches.

So, do you use cloud object storage? As always, we value your thoughts, feedback and comments.

