Performance Review Criteria 2: Sticks to Solid Principles

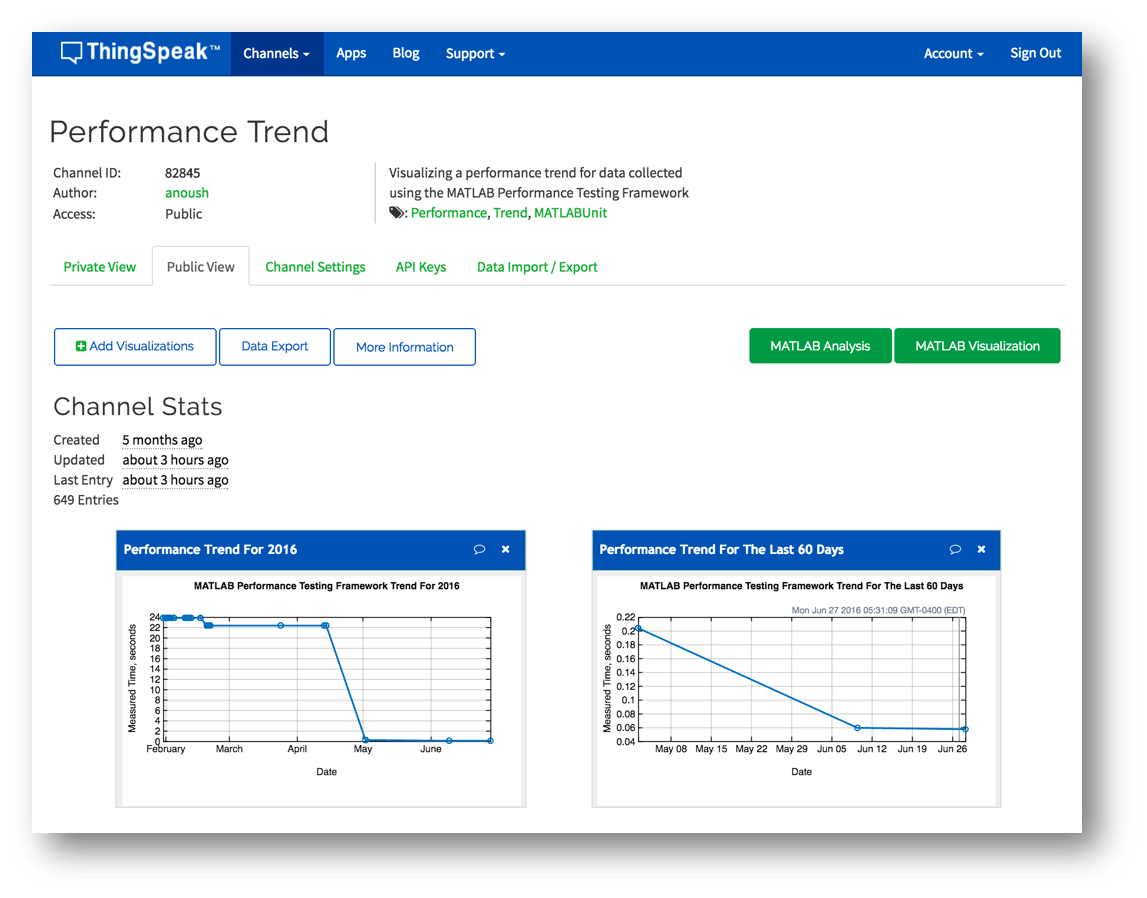

We saw last time how we can use the performance testing framework to easily see the runtime differences between multiple competing algorithms. This can be done without needing to learn about the philosophies and guiding principles of the framework. Indeed, you don't even need to know that you are using a performance test framework, or that the script you are writing is even considered a "test".

However (believe you me!), those principles and philosophies are there to be sure. I'd like to walk through some of these principles so that we can all be on the same page and have a good understanding of the design points behind the new framework.

Principle 1: Focus on Precision, Accuracy, and Robustness

Perhaps this should go without saying. You are building production-grade software here, and we have a production-grade framework to match. The first principle and underlying goal is that the performance measurement is as precise and accurate as possible.

This means, for example, that we include features such as "warming up" the code by executing it a few times first so that initialization effects don't skew the measurement. This gives a better idea of the typical execution time of the code. Features exist to measure the first-time performance as well. Also, the measurement boundary is as tightly scoped as possible in order to measure just the relevant code. For example, look at the following simple performance test:

    function tests = MyFirstMagicSquarePerfTest
    tests = functiontests(localfunctions);

    function setup(~)
    % Do something that takes half a second.
    pause(.5);

    function testCreatingMagicSquare(~)
    % Measure the performance of creating a large(ish) magic square.
    magic(1000);

Here I have added a pause to the setup function (the function-based equivalent of TestMethodSetup) to ensure that setup takes much longer than the code we'd like to measure. However, when we measure its execution time with runperf, we can see that the setup time is not included in the measurement:

    measResult = runperf('MyFirstMagicSquarePerfTest');
    samples = measResult.Samples

    Running MyFirstMagicSquarePerfTest
    ........
    Done MyFirstMagicSquarePerfTest
    __________

    samples =

    Name                                                  MeasuredTime    Timestamp               Host             Platform    Version                  RunIdentifier
    __________________________________________________    ____________    ____________________    _____________    ________    _____________________    ____________________________________

    MyFirstMagicSquarePerfTest/testCreatingMagicSquare    0.0050525       06-May-2016 17:31:56    MyMachineName    maci64      9.0.0.341360 (R2016a)    b96e12cd-18cf-4f1f-a03e-a0e4e7da8f2d
    MyFirstMagicSquarePerfTest/testCreatingMagicSquare    0.0048567       06-May-2016 17:31:56    MyMachineName    maci64      9.0.0.341360 (R2016a)    b96e12cd-18cf-4f1f-a03e-a0e4e7da8f2d
    MyFirstMagicSquarePerfTest/testCreatingMagicSquare    0.0050932       06-May-2016 17:31:57    MyMachineName    maci64      9.0.0.341360 (R2016a)    b96e12cd-18cf-4f1f-a03e-a0e4e7da8f2d
    MyFirstMagicSquarePerfTest/testCreatingMagicSquare    0.0047803       06-May-2016 17:31:57    MyMachineName    maci64      9.0.0.341360 (R2016a)    b96e12cd-18cf-4f1f-a03e-a0e4e7da8f2d

You can see we ran the test multiple times and each time stayed true to running the test correctly by running the code in setup to provide the fresh fixture. However, you'll notice the half second is not included in the measurement. This is because there is a specific measurement boundary. In functions and scripts this boundary is simply the test boundary (test function or test cell). In classes this boundary is the test method boundary by default but can also be directly specified. This is useful for the following test, which poses a problem:

    classdef MySecondMagicSquarePerfTest < matlab.perftest.TestCase

        methods(Test)
            function testCreatingMagicSquare(~)

                % What about some work I need to do in this method?
                pause(0.5);

                % Measure the performance of creating a large(ish) magic square.
                magic(1000);

            end
        end
    end

    measResult = runperf('MySecondMagicSquarePerfTest');
    samples = measResult.Samples

    Running MySecondMagicSquarePerfTest
    ........
    Done MySecondMagicSquarePerfTest
    __________

    samples =

    Name                                                   MeasuredTime    Timestamp               Host             Platform    Version                  RunIdentifier
    ___________________________________________________    ____________    ____________________    _____________    ________    _____________________    ____________________________________

    MySecondMagicSquarePerfTest/testCreatingMagicSquare    0.50935         06-May-2016 17:32:00    MyMachineName    maci64      9.0.0.341360 (R2016a)    99746631-7a34-482b-86b7-94e914ae2649
    MySecondMagicSquarePerfTest/testCreatingMagicSquare    0.50829         06-May-2016 17:32:00    MyMachineName    maci64      9.0.0.341360 (R2016a)    99746631-7a34-482b-86b7-94e914ae2649
    MySecondMagicSquarePerfTest/testCreatingMagicSquare    0.50571         06-May-2016 17:32:01    MyMachineName    maci64      9.0.0.341360 (R2016a)    99746631-7a34-482b-86b7-94e914ae2649
    MySecondMagicSquarePerfTest/testCreatingMagicSquare    0.5083          06-May-2016 17:32:01    MyMachineName    maci64      9.0.0.341360 (R2016a)    99746631-7a34-482b-86b7-94e914ae2649

Unfortunately, you can see the method boundary includes some extra work, and this prevents us from gathering our intended measurement. Never fear, however, we've got ya taken care of. In this case you can scope your measurement boundary to exactly the code you'd like to measure in the test method by using the startMeasuring and stopMeasuring methods to explicitly define your boundary:

    classdef MyThirdMagicSquarePerfTest < matlab.perftest.TestCase

        methods(Test)
            function testCreatingMagicSquare(testCase)

                % What about some work I need to do in this method?
                pause(0.5);

                % Measure the performance of creating a large(ish) magic square.
                testCase.startMeasuring;
                magic(1000);
                testCase.stopMeasuring;

            end
        end
    end

    measResult = runperf('MyThirdMagicSquarePerfTest');
    samples = measResult.Samples

    Running MyThirdMagicSquarePerfTest
    .......... .......... .......... ..
    Done MyThirdMagicSquarePerfTest
    __________

    samples =

    Name                                                  MeasuredTime    Timestamp               Host             Platform    Version                  RunIdentifier
    __________________________________________________    ____________    ____________________    _____________    ________    _____________________    ____________________________________

    MyThirdMagicSquarePerfTest/testCreatingMagicSquare    0.005019        06-May-2016 17:32:04    MyMachineName    maci64      9.0.0.341360 (R2016a)    7f8e4608-c8f6-4444-a4b5-4a4c8b2139e8
    MyThirdMagicSquarePerfTest/testCreatingMagicSquare    0.0091478       06-May-2016 17:32:05    MyMachineName    maci64      9.0.0.341360 (R2016a)    7f8e4608-c8f6-4444-a4b5-4a4c8b2139e8
    MyThirdMagicSquarePerfTest/testCreatingMagicSquare    0.0051195       06-May-2016 17:32:05    MyMachineName    maci64      9.0.0.341360 (R2016a)    7f8e4608-c8f6-4444-a4b5-4a4c8b2139e8
    MyThirdMagicSquarePerfTest/testCreatingMagicSquare    0.0049833       06-May-2016 17:32:06    MyMachineName    maci64      9.0.0.341360 (R2016a)    7f8e4608-c8f6-4444-a4b5-4a4c8b2139e8
    MyThirdMagicSquarePerfTest/testCreatingMagicSquare    0.0050415       06-May-2016 17:32:06    MyMachineName    maci64      9.0.0.341360 (R2016a)    7f8e4608-c8f6-4444-a4b5-4a4c8b2139e8
    MyThirdMagicSquarePerfTest/testCreatingMagicSquare    0.0050852       06-May-2016 17:32:07    MyMachineName    maci64      9.0.0.341360 (R2016a)    7f8e4608-c8f6-4444-a4b5-4a4c8b2139e8
    MyThirdMagicSquarePerfTest/testCreatingMagicSquare    0.0050112       06-May-2016 17:32:07    MyMachineName    maci64      9.0.0.341360 (R2016a)    7f8e4608-c8f6-4444-a4b5-4a4c8b2139e8
    MyThirdMagicSquarePerfTest/testCreatingMagicSquare    0.0051059       06-May-2016 17:32:08    MyMachineName    maci64      9.0.0.341360 (R2016a)    7f8e4608-c8f6-4444-a4b5-4a4c8b2139e8
    MyThirdMagicSquarePerfTest/testCreatingMagicSquare    0.0048692       06-May-2016 17:32:08    MyMachineName    maci64      9.0.0.341360 (R2016a)    7f8e4608-c8f6-4444-a4b5-4a4c8b2139e8
    MyThirdMagicSquarePerfTest/testCreatingMagicSquare    0.0051603       06-May-2016 17:32:09    MyMachineName    maci64      9.0.0.341360 (R2016a)    7f8e4608-c8f6-4444-a4b5-4a4c8b2139e8
    MyThirdMagicSquarePerfTest/testCreatingMagicSquare    0.004889        06-May-2016 17:32:10    MyMachineName    maci64      9.0.0.341360 (R2016a)    7f8e4608-c8f6-4444-a4b5-4a4c8b2139e8
    MyThirdMagicSquarePerfTest/testCreatingMagicSquare    0.004917        06-May-2016 17:32:10    MyMachineName    maci64      9.0.0.341360 (R2016a)    7f8e4608-c8f6-4444-a4b5-4a4c8b2139e8
    MyThirdMagicSquarePerfTest/testCreatingMagicSquare    0.0052781       06-May-2016 17:32:11    MyMachineName    maci64      9.0.0.341360 (R2016a)    7f8e4608-c8f6-4444-a4b5-4a4c8b2139e8
    MyThirdMagicSquarePerfTest/testCreatingMagicSquare    0.0049215       06-May-2016 17:32:11    MyMachineName    maci64      9.0.0.341360 (R2016a)    7f8e4608-c8f6-4444-a4b5-4a4c8b2139e8
    MyThirdMagicSquarePerfTest/testCreatingMagicSquare    0.0049284       06-May-2016 17:32:12    MyMachineName    maci64      9.0.0.341360 (R2016a)    7f8e4608-c8f6-4444-a4b5-4a4c8b2139e8
    MyThirdMagicSquarePerfTest/testCreatingMagicSquare    0.0048642       06-May-2016 17:32:12    MyMachineName    maci64      9.0.0.341360 (R2016a)    7f8e4608-c8f6-4444-a4b5-4a4c8b2139e8
    MyThirdMagicSquarePerfTest/testCreatingMagicSquare    0.0050289       06-May-2016 17:32:13    MyMachineName    maci64      9.0.0.341360 (R2016a)    7f8e4608-c8f6-4444-a4b5-4a4c8b2139e8
    MyThirdMagicSquarePerfTest/testCreatingMagicSquare    0.0048802       06-May-2016 17:32:13    MyMachineName    maci64      9.0.0.341360 (R2016a)    7f8e4608-c8f6-4444-a4b5-4a4c8b2139e8
    MyThirdMagicSquarePerfTest/testCreatingMagicSquare    0.005147        06-May-2016 17:32:14    MyMachineName    maci64      9.0.0.341360 (R2016a)    7f8e4608-c8f6-4444-a4b5-4a4c8b2139e8
    MyThirdMagicSquarePerfTest/testCreatingMagicSquare    0.0050095       06-May-2016 17:32:14    MyMachineName    maci64      9.0.0.341360 (R2016a)    7f8e4608-c8f6-4444-a4b5-4a4c8b2139e8
    MyThirdMagicSquarePerfTest/testCreatingMagicSquare    0.005138        06-May-2016 17:32:15    MyMachineName    maci64      9.0.0.341360 (R2016a)    7f8e4608-c8f6-4444-a4b5-4a4c8b2139e8
    MyThirdMagicSquarePerfTest/testCreatingMagicSquare    0.0050985       06-May-2016 17:32:15    MyMachineName    maci64      9.0.0.341360 (R2016a)    7f8e4608-c8f6-4444-a4b5-4a4c8b2139e8
    MyThirdMagicSquarePerfTest/testCreatingMagicSquare    0.0051649       06-May-2016 17:32:16    MyMachineName    maci64      9.0.0.341360 (R2016a)    7f8e4608-c8f6-4444-a4b5-4a4c8b2139e8
    MyThirdMagicSquarePerfTest/testCreatingMagicSquare    0.0050265       06-May-2016 17:32:16    MyMachineName    maci64      9.0.0.341360 (R2016a)    7f8e4608-c8f6-4444-a4b5-4a4c8b2139e8
    MyThirdMagicSquarePerfTest/testCreatingMagicSquare    0.0048852       06-May-2016 17:32:17    MyMachineName    maci64      9.0.0.341360 (R2016a)    7f8e4608-c8f6-4444-a4b5-4a4c8b2139e8
    MyThirdMagicSquarePerfTest/testCreatingMagicSquare    0.0051176       06-May-2016 17:32:17    MyMachineName    maci64      9.0.0.341360 (R2016a)    7f8e4608-c8f6-4444-a4b5-4a4c8b2139e8
    MyThirdMagicSquarePerfTest/testCreatingMagicSquare    0.0049506       06-May-2016 17:32:18    MyMachineName    maci64      9.0.0.341360 (R2016a)    7f8e4608-c8f6-4444-a4b5-4a4c8b2139e8
    MyThirdMagicSquarePerfTest/testCreatingMagicSquare    0.0051294       06-May-2016 17:32:18    MyMachineName    maci64      9.0.0.341360 (R2016a)    7f8e4608-c8f6-4444-a4b5-4a4c8b2139e8

Voila! Boundary scoped appropriately.
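To make the warm-up and measurement-boundary ideas concrete, here is a rough hand-rolled sketch using tic and toc. It is not how the framework is implemented. The variable names and the run counts (numWarmups, numSamples) are assumptions chosen purely for illustration, and the short pause stands in for the extra, non-measured work from the examples above.

```matlab
% Hand-rolled illustration only; runperf does this (and much more) for you.
numWarmups = 4;                       % assumed number of discarded warm-up runs
numSamples = 4;                       % assumed number of recorded runs
times = zeros(1, numSamples);

for k = 1:(numWarmups + numSamples)
    pause(0.01);                      % stand-in for work outside the boundary

    t = tic;                          % measurement boundary opens
    magic(1000);
    elapsed = toc(t);                 % measurement boundary closes

    % Warm-up runs execute the code but their timings are thrown away.
    if k > numWarmups
        times(k - numWarmups) = elapsed;
    end
end

disp(times)
```

Only the call to magic sits between tic and toc, so the stand-in work never shows up in times. That is the same effect startMeasuring and stopMeasuring give you inside a test method.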

However, even with the proper tight measurement scoping there is still some framework overhead, such as function call overhead and other overhead involved in actually executing the code and taking the measurement. This is where another feature of the framework comes in. The framework calibrates (or "tares", if you want to think of it that way) the measurement by actually measuring an empty test. This gives us a concrete measurement of the overhead involved, and this calibration measurement benefits us in two ways. Firstly, we can subtract the measurement overhead to get a more accurate result. Secondly, the calibration gives us an idea of the framework precision, allowing us to alert you when you are trying to measure something within the framework precision and shouldn't trust the results. While this may seem limiting when encountered, it is actually an important feature that prevents you from interpreting the result incorrectly. For example, let's look at a performance test you should never implement:

    classdef BadPerfTest < matlab.perftest.TestCase

        methods(Test)
            function pleaseDoNotTryToMeasureOnePlusOne(~)

                % One plus one is too fast to measure. Rather than giving you a
                % bunk result we will alert you and the result will be invalid.
                1+1;

            end
        end
    end

    measResult = runperf('BadPerfTest');
    samples = measResult.Samples

    Running BadPerfTest
    ..
    ================================================================================
    BadPerfTest/pleaseDoNotTryToMeasureOnePlusOne was filtered.
        Test Diagnostic: The MeasuredTime should not be too close to the precision of the framework.
    ================================================================================

    Done BadPerfTest
    __________

    Failure Summary:

        Name                                           Failed  Incomplete  Reason(s)
        ============================================================================================
        BadPerfTest/pleaseDoNotTryToMeasureOnePlusOne              X       Filtered by assumption.

    samples =

       empty 0-by-7 table

We don't even let you measure it. The test is filtered and the result you get back is invalid. To see what we would be trying to measure, we have to do this manually with the poor man's version:

    results = zeros(1,1e6);
    for idx=1:1e6
        t = tic;
        1+1;
        results(idx) = toc(t);
    end
    ax = axes;
    plot(ax, results)
    ax.YLim(2) = 3*std(results); % Cap the y limit to 3 standard deviations
    title(ax, 'The Garbage Results When Trying to Measure 1+1');
    ylabel(ax, 'Execution time up to 3 standard deviations.');

Garbage. Noise. Not what you want the framework to hand back, so we don't. We recognize a low confidence result and we let you know.
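The calibration idea can be imitated by hand as well. The sketch below times an empty tic/toc pair to estimate the overhead, subtracts it from a raw measurement, and flags measurements that sit too close to that overhead. The names and the factor of 10 used as a threshold are hypothetical. The framework's actual calibration and precision check are not spelled out in this post and are surely more careful than this.

```matlab
% Estimate the cost of taking a measurement by timing "nothing".
numCalibrationRuns = 1000;               % assumed count, for illustration
overhead = zeros(1, numCalibrationRuns);
for k = 1:numCalibrationRuns
    t = tic;
    overhead(k) = toc(t);                % empty measurement
end
tare = median(overhead);

% Take a raw measurement and subtract the overhead ("tare" it).
t = tic;
magic(1000);
raw = toc(t);
corrected = raw - tare;

% Hypothetical rule of thumb: distrust anything within 10x the overhead.
isTrustworthy = raw > 10 * tare;

fprintf('raw %.6f s, tare %.6f s, corrected %.6f s, trustworthy: %d\n', ...
    raw, tare, corrected, isTrustworthy);
```

Swapping magic(1000) for 1+1 in this sketch is a quick way to see a raw time that is indistinguishable from the tare, which is exactly the situation the framework refuses to report.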

Principle 2: A Performance Test is a Test

The next principle is that a performance test is simply a test. It is a test that uses the familiar script, function, and class based testing APIs, and as such can be run as normal tests to validate that the software is not broken. However, the same test content can also be run differently in order to measure the performance of the measured code. The behavior is driven by how it is run, not what is in the test!

If you have already used the unit test framework, there is remarkably little you need to learn when diving into performance testing! Please use the extra mental energy toward putting mankind on Mars or something amazing like that.

This also means that your performance tests can be checked into your CI system and will fail the build if a change somehow breaks the performance tests.

Look, here's the deal, I can make all my code go really fast if it doesn't have to do the right thing. To prevent this, we can put a spot check in to ensure the software is not only fast but also correct. Let's see this in action with another magic square test:

    classdef MyFourthMagicSquarePerfTest < matlab.perftest.TestCase

        methods(Test)
            function createMagicSquare(testCase)
                import matlab.unittest.constraints.IsEqualTo;
                import matlab.unittest.constraints.EveryElementOf;

                testCase.startMeasuring;
                m = magic(100);
                testCase.stopMeasuring;

                columnSum = sum(m);
                rowSum = sum(m,2);

                testCase.verifyThat(EveryElementOf(columnSum), IsEqualTo(columnSum(1)), ...
                    'All the values of column sum should be equal.');

                testCase.verifyThat(rowSum, IsEqualTo(columnSum), ...
                    'The column sum should equal the transpose of the row sum.');
            end
        end
    end

Now let's run it using runtests instead of runperf:

    result = runtests('MyFourthMagicSquarePerfTest')

    Running MyFourthMagicSquarePerfTest

    ================================================================================
    Verification failed in MyFourthMagicSquarePerfTest/createMagicSquare.

        ----------------
        Test Diagnostic:
        ----------------
        The column sum should equal the transpose of the row sum.

        ---------------------
        Framework Diagnostic:
        ---------------------
        IsEqualTo failed.
        --> NumericComparator failed.
            --> Size check failed.
                --> Sizes do not match.

                    Actual size:
                        100 1
                    Expected size:
                        1 100

        Actual double:
            100x1 double
        Expected double:
            1x100 double

        ------------------
        Stack Information:
        ------------------
        In /mathworks/inside/files/dev/tools/tia/mdd/performancePrinciples/MyFourthMagicSquarePerfTest.m (MyFourthMagicSquarePerfTest.createMagicSquare) at 18
    ================================================================================
    .
    Done MyFourthMagicSquarePerfTest
    __________

    Failure Summary:

        Name                                           Failed  Incomplete  Reason(s)
        ============================================================================================
        MyFourthMagicSquarePerfTest/createMagicSquare    X                 Failed by verification.

    result =

      TestResult with properties:

              Name: 'MyFourthMagicSquarePerfTest/createMagicSquare'
            Passed: 0
            Failed: 1
        Incomplete: 0
          Duration: 0.0238
           Details: [1x1 struct]

    Totals:
       0 Passed, 1 Failed, 0 Incomplete.
       0.023783 seconds testing time.

Look at that, we can run the performance test like a functional regression test and it fails! In this case the problem is that we should be verifying that the row sum is the transpose of the column sum, but the test is comparing them directly. Here the test itself is the source of the problem, not the code under test, but bugs in the source code would fail it just the same.
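The size mismatch reported in the diagnostic is easy to reproduce outside the test framework. The snippet below is a small standalone sketch that uses a 4-by-4 magic square and plain isequal rather than the IsEqualTo constraint; the variable names are mine.

```matlab
m = magic(4);
columnSum = sum(m);        % 1-by-4 row vector
rowSum    = sum(m, 2);     % 4-by-1 column vector

% Comparing directly fails because the shapes differ,
% even though every individual sum is the same number.
sameAsIs = isequal(rowSum, columnSum);

% Transposing the column sums lines the shapes up.
sameTransposed = isequal(rowSum, columnSum.');

fprintf('direct: %d, transposed: %d\n', sameAsIs, sameTransposed);
```

The values agree in both cases; only the orientation differs, which is why the framework diagnostic points at the size check rather than at the numbers.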

Principle 3: Report, Don't Qualify

With the example above we ran this test using runtests to ensure it was correct. Note that the result was indeed binary. The test fails or passes. In this case it failed, and you can throw that back to where it came from and fix it. However, when measuring performance we don't have such luxury. When observing performance we are running an experiment and gathering a measurement, and we need some interpretation of the results to determine whether they are good or bad. Oftentimes this interpretation is only possible in some context, such as whether the code was faster or slower than at the last check-in, or last month. This difference in how the results are to be interpreted affects the resulting output data structure. Let's take a look using the same buggy test but running it as a performance test instead:

    measResult = runperf('MyFourthMagicSquarePerfTest')

    Running MyFourthMagicSquarePerfTest

    ================================================================================
    Verification failed in MyFourthMagicSquarePerfTest/createMagicSquare.

        ----------------
        Test Diagnostic:
        ----------------
        The column sum should equal the transpose of the row sum.

        ---------------------
        Framework Diagnostic:
        ---------------------
        IsEqualTo failed.
        --> NumericComparator failed.
            --> Size check failed.
                --> Sizes do not match.

                    Actual size:
                        100 1
                    Expected size:
                        1 100

        Actual double:
            100x1 double
        Expected double:
            1x100 double

        ------------------
        Stack Information:
        ------------------
        In /mathworks/inside/files/dev/tools/tia/mdd/performancePrinciples/MyFourthMagicSquarePerfTest.m (MyFourthMagicSquarePerfTest.createMagicSquare) at 18
    ================================================================================
    .
    ================================================================================
    MyFourthMagicSquarePerfTest/createMagicSquare was filtered.
        Test Diagnostic: The MeasuredTime should not be too close to the precision of the framework.
    ================================================================================

    Done MyFourthMagicSquarePerfTest
    __________

    Failure Summary:

        Name                                           Failed  Incomplete  Reason(s)
        ============================================================================================
        MyFourthMagicSquarePerfTest/createMagicSquare    X         X       Filtered by assumption.
                                                                           Failed by verification.

    measResult =

      MeasurementResult with properties:

                Name: 'MyFourthMagicSquarePerfTest/createMagicSquare'
               Valid: 0
             Samples: [0x7 table]
        TestActivity: [1x12 table]

    Totals:
       0 Valid, 1 Invalid.

Note the absence of a Passed/Failed bit on the resulting data structure. Even for this test, which is clearly problematic, we are in the context of performance testing and the focus is on measurement and reporting rather than qualification. What we do say is whether the measurement result is Valid or not. The Valid property is simply a logical property that specifies whether you even want to begin analyzing the measurement results. The test failed? Invalid. The test was filtered? Invalid. In order to be valid, the test needs to complete and pass. That is the deterministic state that we can trust to provide us with the correct performance measurement.

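Let's fix the bug (and bump the magic square up to 1000 so the measurement is no longer filtered for being too close to the framework precision) and look a bit more: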

    classdef MyFifthMagicSquarePerfTest < matlab.perftest.TestCase

        methods(Test)
            function createMagicSquare(testCase)
                import matlab.unittest.constraints.IsEqualTo;
                import matlab.unittest.constraints.EveryElementOf;

                testCase.startMeasuring;
                m = magic(1000);
                testCase.stopMeasuring;

                columnSum = sum(m);
                rowSum = sum(m,2);

                testCase.verifyThat(EveryElementOf(columnSum), IsEqualTo(columnSum(1)), ...
                    'All the values of column sum should be equal.');

                testCase.verifyThat(rowSum, IsEqualTo(columnSum.'), ...
                    'The column sum should equal the transpose of the row sum.');
            end
        end
    end

    measResult = runperf('MyFifthMagicSquarePerfTest')

    Running MyFifthMagicSquarePerfTest
    ........
    Done MyFifthMagicSquarePerfTest
    __________

    measResult =

      MeasurementResult with properties:

                Name: 'MyFifthMagicSquarePerfTest/createMagicSquare'
               Valid: 1
             Samples: [4x7 table]
        TestActivity: [8x12 table]

    Totals:
       1 Valid, 0 Invalid.

Alright, we have a valid result. Now we can look at the Samples to see that everything is working normally:

    measResult.Samples

    ans =

    Name                                            MeasuredTime    Timestamp               Host             Platform    Version                  RunIdentifier
    ____________________________________________    ____________    ____________________    _____________    ________    _____________________    ____________________________________

    MyFifthMagicSquarePerfTest/createMagicSquare    0.0049167       06-May-2016 17:32:21    MyMachineName    maci64      9.0.0.341360 (R2016a)    b7b651ff-1c19-40a4-b323-e5afd9b0d77b
    MyFifthMagicSquarePerfTest/createMagicSquare    0.0049236       06-May-2016 17:32:21    MyMachineName    maci64      9.0.0.341360 (R2016a)    b7b651ff-1c19-40a4-b323-e5afd9b0d77b
    MyFifthMagicSquarePerfTest/createMagicSquare    0.0049038       06-May-2016 17:32:21    MyMachineName    maci64      9.0.0.341360 (R2016a)    b7b651ff-1c19-40a4-b323-e5afd9b0d77b
    MyFifthMagicSquarePerfTest/createMagicSquare    0.004911        06-May-2016 17:32:21    MyMachineName    maci64      9.0.0.341360 (R2016a)    b7b651ff-1c19-40a4-b323-e5afd9b0d77b

...and now we can apply whatever statistic we are interested in on the resulting data:

    median(measResult.Samples.MeasuredTime)

    ans =

        0.0049
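If you don't have the test handy, the same kind of analysis can be tried on the numbers themselves. The sketch below copies the four MeasuredTime values from the Samples table above into a plain vector (the variable names are mine) and computes a few common summary statistics.

```matlab
% The four MeasuredTime samples reported above, in seconds.
measuredTime = [0.0049167; 0.0049236; 0.0049038; 0.004911];

medianTime = median(measuredTime);   % robust to the occasional outlier
meanTime   = mean(measuredTime);
spread     = std(measuredTime);      % sample standard deviation

fprintf('median %.7f s, mean %.7f s, std %.2e s\n', ...
    medianTime, meanTime, spread);
```

With a real result you would pass measResult.Samples.MeasuredTime instead of the hard-coded vector.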

Note that you can also see all of the information, including the warmup runs and the actual TestResult data by looking at the TestActivity table.

    measResult.TestActivity

    ans =

    Name                                            Passed    Failed    Incomplete    MeasuredTime    Objective    Timestamp               Host             Platform    Version                  TestResult                          RunIdentifier
    ____________________________________________    ______    ______    __________    ____________    _________    ____________________    _____________    ________    _____________________    ________________________________    ____________________________________

    MyFifthMagicSquarePerfTest/createMagicSquare    true      false     false         0.0048825       warmup       06-May-2016 17:32:20    MyMachineName    maci64      9.0.0.341360 (R2016a)    [1x1 matlab.unittest.TestResult]    b7b651ff-1c19-40a4-b323-e5afd9b0d77b
    MyFifthMagicSquarePerfTest/createMagicSquare    true      false     false         0.0050761       warmup       06-May-2016 17:32:20    MyMachineName    maci64      9.0.0.341360 (R2016a)    [1x1 matlab.unittest.TestResult]    b7b651ff-1c19-40a4-b323-e5afd9b0d77b
    MyFifthMagicSquarePerfTest/createMagicSquare    true      false     false         0.0049342       warmup       06-May-2016 17:32:20    MyMachineName    maci64      9.0.0.341360 (R2016a)    [1x1 matlab.unittest.TestResult]    b7b651ff-1c19-40a4-b323-e5afd9b0d77b
    MyFifthMagicSquarePerfTest/createMagicSquare    true      false     false         0.0050149       warmup       06-May-2016 17:32:21    MyMachineName    maci64      9.0.0.341360 (R2016a)    [1x1 matlab.unittest.TestResult]    b7b651ff-1c19-40a4-b323-e5afd9b0d77b
    MyFifthMagicSquarePerfTest/createMagicSquare    true      false     false         0.0049167       sample       06-May-2016 17:32:21    MyMachineName    maci64      9.0.0.341360 (R2016a)    [1x1 matlab.unittest.TestResult]    b7b651ff-1c19-40a4-b323-e5afd9b0d77b
    MyFifthMagicSquarePerfTest/createMagicSquare    true      false     false         0.0049236       sample       06-May-2016 17:32:21    MyMachineName    maci64      9.0.0.341360 (R2016a)    [1x1 matlab.unittest.TestResult]    b7b651ff-1c19-40a4-b323-e5afd9b0d77b
    MyFifthMagicSquarePerfTest/createMagicSquare    true      false     false         0.0049038       sample       06-May-2016 17:32:21    MyMachineName    maci64      9.0.0.341360 (R2016a)    [1x1 matlab.unittest.TestResult]    b7b651ff-1c19-40a4-b323-e5afd9b0d77b
    MyFifthMagicSquarePerfTest/createMagicSquare    true      false     false         0.004911        sample       06-May-2016 17:32:21    MyMachineName    maci64      9.0.0.341360 (R2016a)    [1x1 matlab.unittest.TestResult]    b7b651ff-1c19-40a4-b323-e5afd9b0d77b

Principle 4: Multiple Observations

If you've made it this far I think you can already see that each test is run multiple times and multiple measurements were taken. Actually, a key piece of the technology that enabled the performance framework was extending the underlying test framework to support running each test repeatedly.

Repeated measurements are important of course because we are taking measurements in the presence of noise, and it follows that we need to measure a representative sample in order to arrive at the distribution and/or a reasonable deduction as to the performance of the code. The principle here is that we measure multiple executions and provide you with the data you need to analyze the behavior. Whether you use classical statistical measures, Bayesian approaches, or something else, the resulting data set is at your command.
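As a rough illustration of why repeated observations matter, the sketch below keeps sampling until the estimate of the mean looks stable enough. The stopping rule (relative standard error of the mean below 5%, with at least 4 and at most 32 samples) and all of the names are assumptions made for this example; it is not a description of how the framework decides when to stop.

```matlab
% Hypothetical sampling policy, for illustration only.
minSamples   = 4;
maxSamples   = 32;
targetRelErr = 0.05;

samples = zeros(1, maxSamples);
n = 0;
while n < maxSamples
    t = tic;
    magic(1000);
    elapsed = toc(t);

    n = n + 1;
    samples(n) = elapsed;

    if n >= minSamples
        % Relative standard error of the mean over the samples so far.
        relStdErr = std(samples(1:n)) / sqrt(n) / mean(samples(1:n));
        if relStdErr <= targetRelErr
            break
        end
    end
end
samples = samples(1:n);   % drop the unused preallocated slots

fprintf('%d samples, mean %.6f s, relative std error %.3f\n', ...
    n, mean(samples), relStdErr);
```

On a quiet machine this tends to stop early; on a noisy one it runs closer to the cap, which is the intuition behind collecting more observations when the data are less consistent.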

Your principles

So that's a solid overview of the principles and philosophies of the new MATLAB performance framework. Do you have any other principles you have encountered when measuring the performance of your code?
