A unique robot takes home big prize in the Amazon Robotics Challenge

Amazon is changing retail as we know it, and this change is built on efficiency. With more than 50 million items now eligible for free 2-day shipping through their Prime program, the pressure is on. The company is continually looking for ways to reduce the time from when the order is placed to when it’s delivered to the customer.

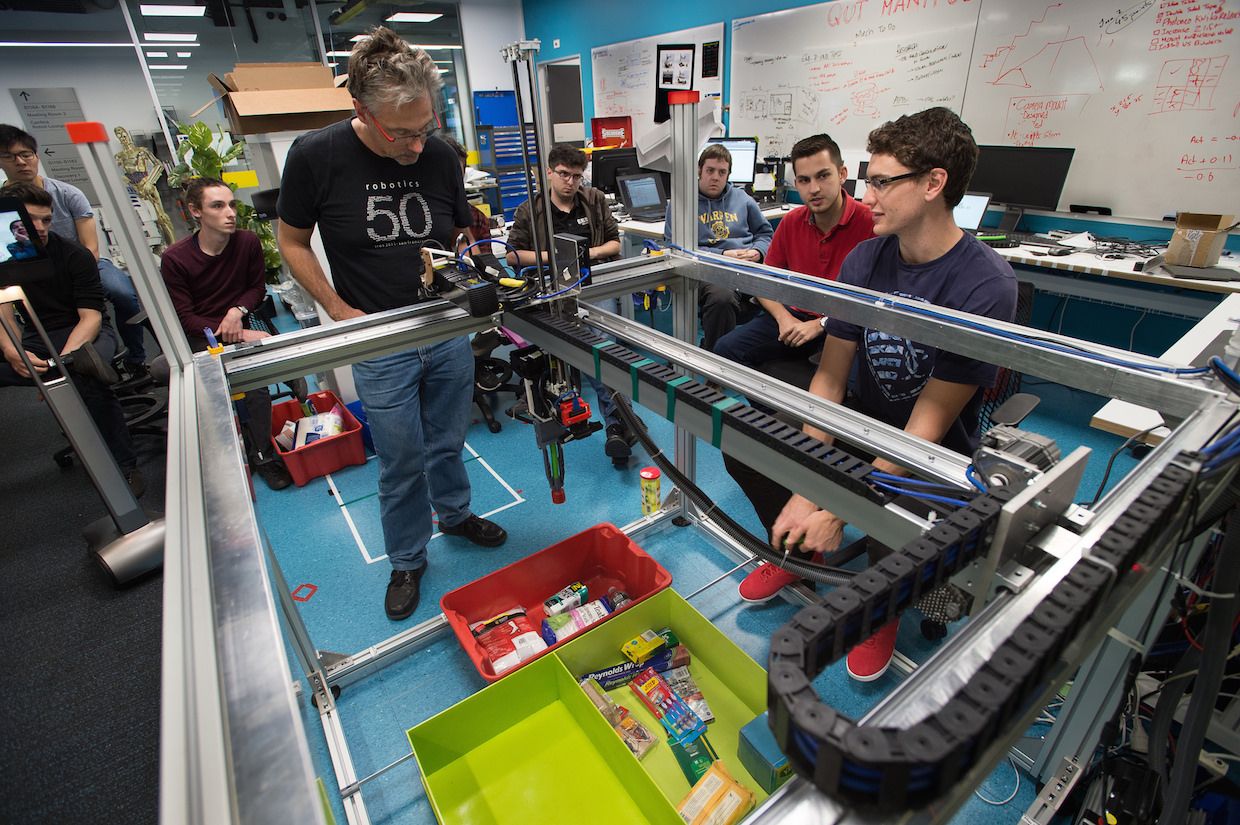

Robots at the Amazon Robotics research and production facility in North Reading, Massachusetts. Image credit: Ian MacLellan / The Seattle Times.

Technology is key to this quest, from Amazon’s first drone delivery earlier this year, to their 100,000 robots in their automated fulfillment centers. But there is one part of the process that has proven difficult to automate.

According to Bloomberg, “The company has a fleet of robots that drive around its facilities gathering items for orders. But it needs humans for the last step — picking up items of various shapes, then packing the right ones into the correct boxes for shipping. It’s a classic example of an activity that’s simple, almost mindless, for humans, but still unattainable for robots.”

Amazon Robotics Challenge

As one approach to solve this “pick and place” challenge, Amazon Robotics has sponsored a robotics competition for the past three years. Each team participating must design a robot that identifies objects, grasps them, and then safely packs them in boxes for shipment.

“Amazon’s automated warehouses are successful at removing much of the walking and searching for items within a warehouse. However, commercially viable automated picking in unstructured environments still remains a difficult challenge… In order to spur the advancement of these fundamental technologies, Amazon Robotics organizes the Amazon Robotics Challenge (ARC).”

The 2017 contest was designed to be more challenging than in years past. This year, the competing robots couldn’t be “pre-programmed” with all the items they’d need to select from. Amazon Robotics gave the teams 40 objects to train their systems, and then replaced 20 of them with new items 45 minutes before the actual competition. To match the increase in difficulty, Amazon Robotics increased the total prize money to $250,000.

The task required computer vision-based algorithms to identify objects and plan the correct grasp. Bloomberg explained how the teams accomplished this feat: “They now use neural networks, a form of artificial intelligence that helps robots learn to recognize objects with less human programming.”

The teams had to teach the robots how to see a collection of objects and correctly select the item on the “shopping list”, pick it up and place it in the box. It’s not as easy as it sounds: picking up a soft item such as a teddy bear requires a much different grasp than picking up a book. And the robot needs to know what to do if the teddy bear is buried under the other objects in the collection.

The 2017 ARC champions: Australian Centre for Robotic Vision

Many teams entered industrial arm robots in the contest, adding grippers to the arms. The teams taught them to pick up objects and pack them much like a human would. However, the Australian Centre for Robotic Vision (ACRV) won the competition with a robot that was dramatically different from past winners. They replaced the robotic arm with a Cartesian coordinate robot that looked more like a claw arcade game than a typical industrial arm robot. Their robot, Cartman, used suction cups and a two-fingered claw to grasp and manipulate the items.

Cartman came in first in the final challenge, which required the robot to first stow items, then to pick and pack selected items into boxes. The ACRV team, composed of engineers from Queensland University of Technology (QUT), the University of Adelaide and the Australian National University, took home the $80,000 grand prize.

In addition to earning the highest score, Cartman was also the least expensive robot entered in the competition. Its final cost was under $24,000, significantly less than most industrial robots cost to build. It was made of off-the-shelf products, and even made use of the engineer’s go-to construction aid: zip ties!

Cartman uses deep learning to ID items

“The first approach to the vision perception we tried was a two-stage approach: unsupervised segmentation followed by classification using deep features,” stated Trung Thanh Pham, ARC Postdoctoral Research Fellow at ACRV.

MATLAB and MatConvNet were used to test the idea. MatConvNet is a MATLAB toolbox used to implement Convolutional Neural Networks (CNNs) for computer vision applications. The Image Processing Toolbox was also used for I/O manipulations and visualizations.

“Our final vision system used a deep CNN called RefineNet, which performs pixel-wise semantic segmentation,” added Douglas Morrison, Ph.D. researcher at QUT. “One of our system’s main advantages was RefineNet’s ability to provide accurate results with a small amount of training data. Our base system was first trained on ~200 images of the known items in cluttered environments (10-20 items per image). At competition time, our training approach was a trade-off between data collection and training time. We found that by adding 7 images of each new item in different poses was sufficient to consistently identify that item while still leaving sufficient training time.”

The data collection process was automated to decrease the time required: the new items were placed two at a time into an empty tote, and background subtraction was used to automatically generate a new labelled dataset of images. The team was able to collect all of the new data in approximately 5-7 minutes, leaving the remainder of the time for fine-tuning the network on the new data.
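To make the background subtraction idea concrete, here is a minimal sketch in Python. It is not the ACRV team's code: the function name, the grayscale list-of-lists image format, the single-item simplification, and the threshold value are all assumptions chosen for illustration. The idea is simply that any pixel that differs enough from the image of the empty tote is assumed to belong to the newly placed item and is given that item's label.

```python
def label_by_background_subtraction(empty_tote, tote_with_item, class_id, threshold=30):
    """Build a per-pixel label map for one new item (hypothetical helper).

    Both images are grayscale, given as lists of rows of 0-255 intensities.
    Pixels that differ from the empty tote by more than `threshold`
    (an assumed value) are labelled `class_id`; everything else is 0.
    """
    labels = []
    for background_row, image_row in zip(empty_tote, tote_with_item):
        labels.append([
            class_id if abs(pixel - background) > threshold else 0
            for background, pixel in zip(background_row, image_row)
        ])
    return labels


# Synthetic 4x5 example: a uniform empty tote and a brighter 2x3 "item".
empty = [[100] * 5 for _ in range(4)]
with_item = [row[:] for row in empty]
for r in (1, 2):
    for c in (1, 2, 3):
        with_item[r][c] = 200

labels = label_by_background_subtraction(empty, with_item, class_id=7)
for row in labels:
    print(row)
```

A real pipeline would work on colour camera images with an array library and would need to cope with shadows, lighting changes, and two items in the tote at once, but the labelling principle is the same: the empty tote supplies the "background" class for free, so nobody has to annotate the images by hand.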

Cartman was the only robot to successfully complete the final stage

Cartman did suffer a setback in the second phase of the competition, slipping to fifth place when it dropped an item after taking it out of the tote. But the system’s overall ability to detect errors and adjust appropriately helped the team win the final phase. Throughout the competition, the team practiced continually and added improvements to the system.

“One such feature that we had added was the ability for the Cartman to search for items that he couldn’t see by moving other items out of the way and between parts of the storage system,” stated Morrison. “This feature ended up being crucial to our win in the finals task, as the final item was buried at the bottom of a storage bin, and all of the items on top had to be moved before it was visible. As a result, we were the only team to complete the pick phase of the finals task by placing all “ordered” items into the cardboard boxes.”

Congratulations, ACRV!
