Echolocation: helping the blind see with sound

Echolocation uses sound waves and echoes to determine where objects are located in space. It’s an ability shared by a select few mammals, such as bats, dolphins, and some whales. Did you know that humans are also capable of navigating by echolocation? Not all humans, but some incredibly gifted people have mastered the ability to use echolocation to “see” their environment.

Daniel Kish, often called the real-life Batman, is known for his echolocation skills. He generates clicking noises with his mouth. When his clicks bounce off an object and reflect back to him, his brain translates the returning sound wave into spatial information. He can “see” buildings in the distance and can describe the shape of an object in great detail, all by deciphering the reflected sound waves from his clicks.
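To make the idea of turning an echo into spatial information concrete, here is a minimal Python sketch of the basic range calculation: the distance to an object is the speed of sound times half the round-trip delay of the echo. The function names and the 343 m/s figure (dry air at roughly 20 °C) are assumptions chosen for illustration. They are not taken from the study, and this is not a model of how the brain actually processes echoes.

```python
# Illustrative sketch only: distance from echo delay, assuming sound
# travels at a constant speed and reflects straight back to the listener.

SPEED_OF_SOUND_M_PER_S = 343.0  # assumed value for dry air at about 20 °C


def echo_distance_m(round_trip_delay_s, speed_m_per_s=SPEED_OF_SOUND_M_PER_S):
    """Return the distance to a reflector given the click-to-echo delay."""
    if round_trip_delay_s < 0:
        raise ValueError("delay must be non-negative")
    # The sound covers the distance twice (out and back), so halve it.
    return speed_m_per_s * round_trip_delay_s / 2.0


def round_trip_delay_s(distance_m, speed_m_per_s=SPEED_OF_SOUND_M_PER_S):
    """Return the click-to-echo delay for a reflector at the given distance."""
    if distance_m < 0:
        raise ValueError("distance must be non-negative")
    return 2.0 * distance_m / speed_m_per_s


if __name__ == "__main__":
    for delay_ms in (1, 10, 100):
        d = echo_distance_m(delay_ms / 1000.0)
        print(f"echo after {delay_ms} ms -> object about {d:.2f} m away")
```

Under these assumptions, an echo arriving 10 milliseconds after the click corresponds to an object a little under 2 meters away, which gives a sense of how short the delays are that an echolocator has to work with.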

Illustration of an acoustic pattern of mouth clicks for human echolocation. Image Credit: Thaler et al.

Kish is the president of World Access for the Blind, which focuses on helping blind individuals achieve greater independence. He teaches others the technique of echolocation. He’s also actively helping researchers better understand echolocation.

Determining the acoustics of human echolocation

A study, published in PLOS Computational Biology, examined the acoustic characteristics of the mouth clicks made by Kish and two other blind individuals with remarkable echolocation skills. The researchers from Durham University, U.K., and Birmingham University, U.K., set out to determine the acoustic mechanisms behind human echolocation.

The researchers recorded the clicks made by the three echolocation experts by measuring sound waves in an echo-free (anechoic) room. The team placed tiny microphones, suspended by thin steel rods, at 10-degree intervals throughout the room. Both the microphones and the supporting rods had minimal physical profiles to reduce sound reflections from the equipment. The goal was to record how the mouth clicks traveled through the space.

Robbie Gonzalez from Wired asked Kish what standing in the anechoic room was like. Kish replied, “the space sounded like standing before a wire fence, in the middle of an infinitely vast field of grass.”

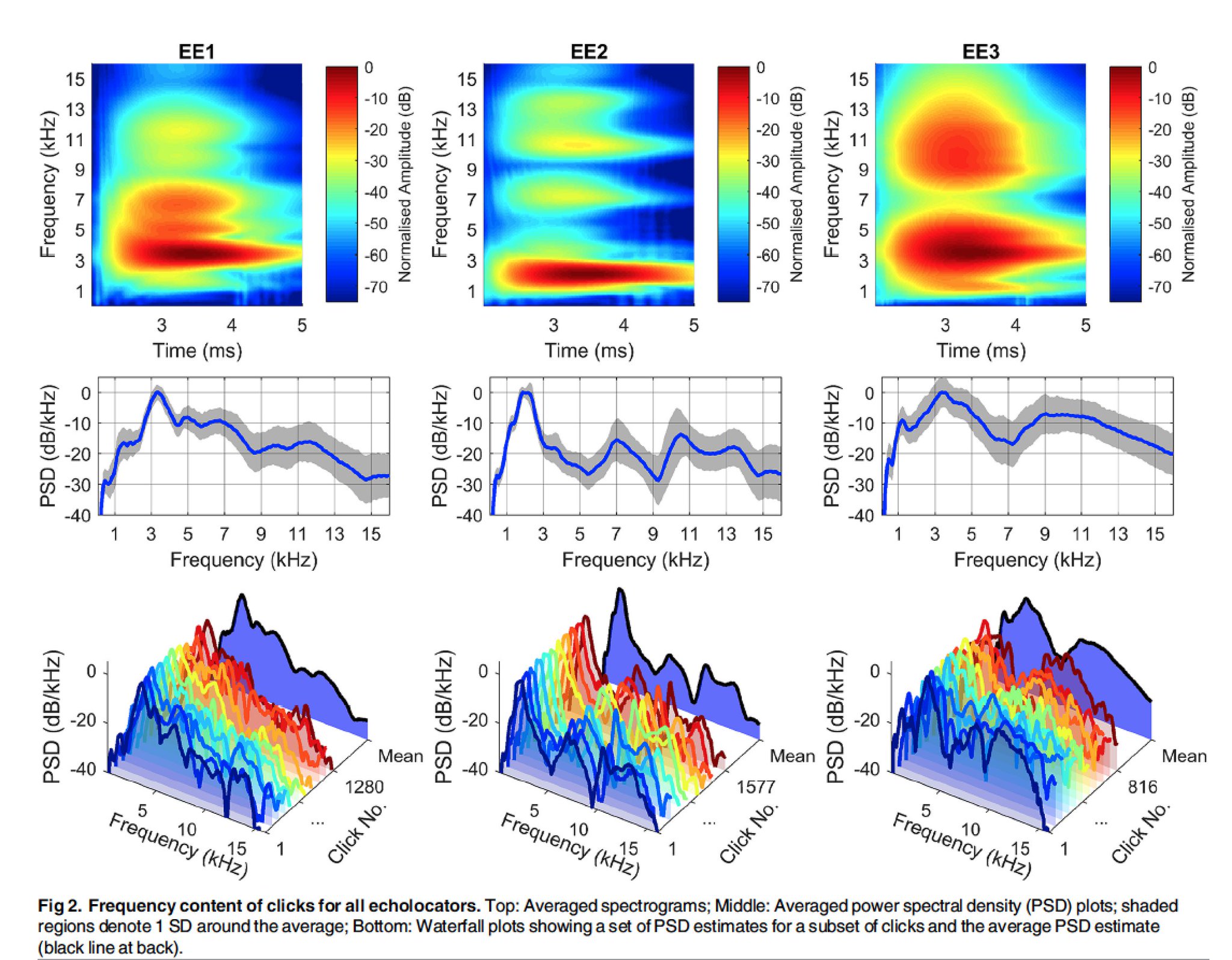

The researchers analyzed the sound waves with MATLAB and found that the acoustic properties of the three echolocators’ clicks were quite similar. The clicks were characterized as “bright (a pair of high-pitched frequencies at around 3 and 10 kilohertz) and brief.” They tapered off into silence after 3 milliseconds and were concentrated in a narrower beam than is typical for human speech.
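As a rough illustration of what “bright and brief” means in signal terms, the Python sketch below builds a click-like waveform from two tones at 3 and 10 kilohertz under an exponentially decaying envelope that has nearly vanished by 3 milliseconds. This is not the research team's MATLAB code or their fitted model. The function name, the 96 kHz sample rate, the equal tone amplitudes, and the 0.6-millisecond decay constant are all assumptions chosen for illustration.

```python
import math


def synthesize_click(sample_rate_hz=96000,           # assumed sample rate
                     duration_s=0.003,               # click length from the article
                     freqs_hz=(3000.0, 10000.0),     # the two reported peaks
                     decay_time_s=0.0006):           # assumed envelope time constant
    """Return a list of samples for a simple two-tone, decaying click."""
    n_samples = int(round(sample_rate_hz * duration_s))
    samples = []
    for i in range(n_samples):
        t = i / sample_rate_hz
        # Exponential decay makes the click taper off toward silence.
        envelope = math.exp(-t / decay_time_s)
        # Equal-amplitude sum of the two tones (an assumption, not measured).
        tone = sum(math.sin(2.0 * math.pi * f * t) for f in freqs_hz)
        samples.append(envelope * tone)
    # Normalize so the loudest sample has magnitude 1.
    peak = max(abs(s) for s in samples)
    return [s / peak for s in samples]


if __name__ == "__main__":
    click = synthesize_click()
    print(f"{len(click)} samples, final sample magnitude {abs(click[-1]):.4f}")
```

The samples could be written to a WAV file with Python's standard `wave` module to hear the result. A real mouth click differs in detail, for example in the relative strength of the two frequency peaks.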

Analysis of the acoustic patterns of mouth clicks for human echolocation. Image Credit: PLOS Computational Biology, Thaler et al.

“One way to think about the beam pattern of mouth clicks is to consider it analogous to the way the light distributes from a flashlight,” Lore Thaler, lead author of the study, told ScienceAlert. “The beam pattern of the click in this way is the ‘shape of the acoustic flashlight’ that echolocators use.”

Helping the blind learn to use echolocation

According to Kish, it takes children two to four days to pick up echolocation. Adults take one or two days longer. He says, “Everyone has different levels of aptitude but everyone can learn to some level of ability that is useful, that makes moving around easier. I don’t think I’ve encountered any blind child or adult who simply couldn’t learn.”

But he has encountered some resistance in the blind community. Some people are uncomfortable with the attention that the clicking noises draw. “In many instances, it’s discouraged,” states Kish. “I personally have worked with students who’ve come from schools for the blind, for whom clicking was actively discouraged.”

For those who are uncomfortable with generating the clicking noise, specially designed devices could provide a more discreet way to use echolocation. The research team hopes this study will help in building devices that make echolocation more broadly achievable. The team has made MATLAB code for synthesizing the clicks available as a downloadable ZIP file.

Echolocation in action

If you’d like to see human echolocation in action, watch this TED Talk by Daniel Kish, “How I use sonar to navigate the world.”

Dr. Tom Pey, chief executive of the Royal London Society for Blind People, told the BBC, “Not all of us will be able to do what Daniel does but he’s shown us that it is possible. I really tip my hat to him – long may he continue to be an inspiration for blind people.”
