Bug Brain Beats Machine Learning

Living organisms have long provided inspiration for technology. Biomimicry of birds helped us design our first aircraft, while the structure of seed burs was copied for Velcro. Today, biomimicry is being applied to advanced technology such as robotics and computer vision.

Another modern-day application of biomimicry is in artificial intelligence (AI). With AI, machines take on natural cognitive functions such as learning and problem-solving. Artificial neural networks (ANNs) take biomimicry a step further by creating computing systems inspired by the brains of living organisms.

But just how intelligent can a system be that is modeled after a relatively unsophisticated biological brain? It turns out, thanks to evolution, even relatively simple brains of living creatures can be very intelligent when it comes to a task that is necessary for their survival. For a moth, that means the sense of smell.

Sometimes, smaller is better

Even though a moth’s brain is the size of a pinhead, it is highly efficient at learning new odors. The moth relies on its sense of smell to find food and mates, both of which are critical to the survival of the species.

Researchers from the University of Washington developed a neural network, dubbed MothNet, based on the structure of a moth’s brain.

“The moth olfactory network is among the simplest biological neural systems that can learn,” the researchers, Charles B. Delahunt, Jeffrey Riffell, and J. Nathan Kutz, stated in their paper, Biological Mechanisms for Learning: A Computational Model of Olfactory Learning in the Manduca sexta Moth, with Applications to Neural Nets.

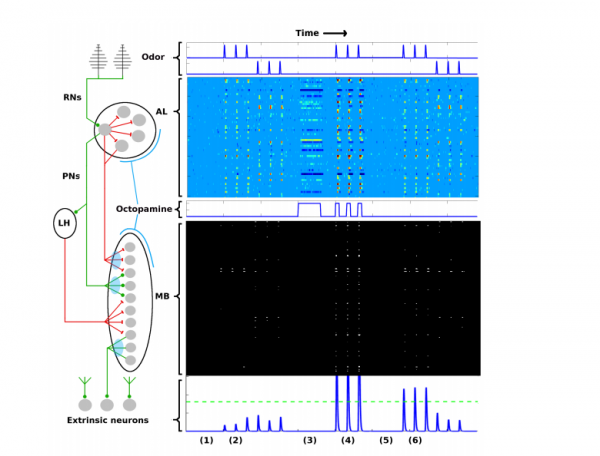

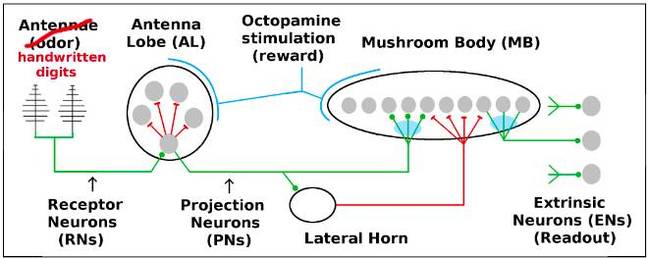

The MIT Technology Review article, Why even a moth’s brain is smarter than AI, described the biological system copied by MothNet: “The olfactory learning system in moths is relatively simple and well mapped by neuroscientists. It consists of five distinct networks that feed information forward from one to the next.”

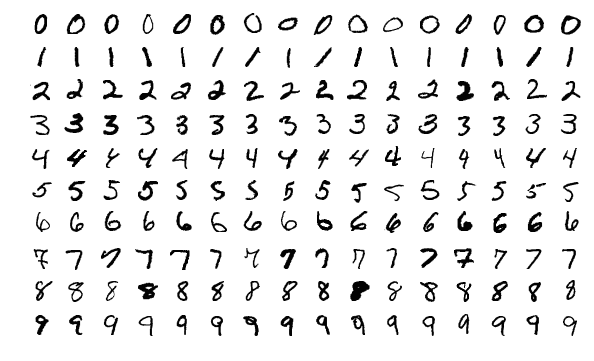

Instead of identifying scents, the researchers used supervised learning to train the ANN to recognize handwritten numbers with just 15 to 20 images of each digit from zero to nine. The training samples came from the MNIST (Modified National Institute of Standards and Technology) database of digits, which is commonly used for training and testing in the field of machine learning. Some examples from the MNIST database are shown below:

They found that MothNet could learn much faster than conventional machine learning systems. MothNet “learned” to recognize numbers from just a few training samples, reaching an accuracy of 75 to 85 percent. A typical convolutional neural network, by comparison, requires thousands of training examples to achieve 99 percent accuracy.
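To make the few-sample setup concrete, here is a minimal sketch of drawing a small, balanced training subset from a labeled dataset. It is not the researchers' MATLAB code; the function name `sample_few_per_class`, the fixed seed, and the toy label list standing in for MNIST labels are all assumptions made for illustration.

```python
import random
from collections import defaultdict


def sample_few_per_class(labels, per_class, seed=0):
    """Return sorted indices holding at most `per_class` samples of each label."""
    rng = random.Random(seed)  # fixed seed so the subset is reproducible
    by_label = defaultdict(list)
    for index, label in enumerate(labels):
        by_label[label].append(index)

    chosen = []
    for label in sorted(by_label):
        indices = by_label[label]
        rng.shuffle(indices)  # pick a random handful from this class
        chosen.extend(indices[:per_class])
    return sorted(chosen)


# Toy stand-in for a label vector: 100 samples of each digit 0-9.
labels = [digit for digit in range(10) for _ in range(100)]

# 15 images per digit, the low end of the range quoted in the article.
subset = sample_few_per_class(labels, per_class=15)
```

With 10 digits and 15 samples each, the subset holds 150 training indices, compared with the thousands a conventional network would typically see.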

Developing Better Machine Learning Algorithms

The researchers found that the moth’s biological system was efficient at learning due to three main characteristics which could aid in the development of new machine learning algorithms:

- First, it learned quickly by filtering information at each step and passing only the most critical information to the next phase in the system. While the first of the five distinct networks starts with nearly 30,000 receptors in the antenna, the second network consists of 4,000 cells. By the time the information reaches the last network in the system, the neurons number in the tens.

- Second, the filtering process had the added benefit of removing noise from the signals. The sparse layer between the first two networks acts as an effective noise filter, protecting the downstream neurons from the noisy signal received by the “antennas”.

- Lastly, the brain “rewarded” the successful identification of odors with the release of a chemical neurotransmitter called octopamine, which reinforces the successful connections in the neural wiring. The connections that are active for an assigned digit strengthen, while the rest wither away.
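The three characteristics above can be sketched in a few lines of code. This is a simplified illustration, not the MothNet implementation: the top-k filter, the plain Hebbian rule with multiplicative decay, and every name and number in it (`top_k_sparse`, `hebbian_update`, `rate`, `decay`, the toy signal) are assumptions chosen to show the idea.

```python
def top_k_sparse(values, k):
    """Keep the k largest activations and zero out the rest.

    This stands in for the sparse layer: weak, noisy inputs are dropped
    and only the strongest signals reach the next stage.
    """
    order = sorted(range(len(values)), key=lambda i: values[i], reverse=True)
    keep = set(order[:max(k, 0)])
    return [v if i in keep else 0.0 for i, v in enumerate(values)]


def hebbian_update(weights, pre, post, reward, rate=0.1, decay=0.01):
    """Return new weights after one reward-gated Hebbian step.

    weights[j][i] connects input unit i to readout unit j. When `reward`
    is True (the stand-in for octopamine), connections between co-active
    units are strengthened; all other connections decay slightly.
    """
    updated = []
    for j, row in enumerate(weights):
        new_row = []
        for i, w in enumerate(row):
            if reward and pre[i] > 0 and post[j] > 0:
                new_row.append(w + rate * pre[i] * post[j])
            else:
                new_row.append(w * (1 - decay))
        updated.append(new_row)
    return updated


# A toy input: two strong components buried in low-level noise.
signal = [0.9, 0.1, 0.05, 0.8, 0.12, 0.02, 0.07, 0.1]
sparse = top_k_sparse(signal, k=2)

# Two readout units; unit 0 is the one assigned to the class being trained.
weights = [[0.5] * len(signal) for _ in range(2)]
post = [1.0, 0.0]
updated = hebbian_update(weights, sparse, post, reward=True)
```

After one step, only the two connections that carried the strong inputs into the assigned readout unit have grown. Every other weight has shrunk slightly, mirroring the "strengthen or wither" behavior described in the list.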

The green lines highlight the pathways in MothNet, the artificial neural network, and the blue lines are the biological pathways. Image credit: Delahunt and Kutz.

“The results demonstrate that even very simple biological architectures hold novel and effective algorithmic tools applicable to [machine learning] tasks, in particular, tasks constrained by few training samples or the need to add new classes without full retraining,” the researchers stated in the paper.

All coding was done in MATLAB. The code for this paper can be found here.
