Mind-controlled robot that works without brain implants

Mind-controlled robots have long been featured in science fiction, but thanks to new research in brain-computer interfaces (BCIs), they are no longer fiction.

BCIs are communication pathways between the brain and an external device. They translate a person’s brain activity into signals that control the device, such as a robot, which makes thought-controlled devices possible.

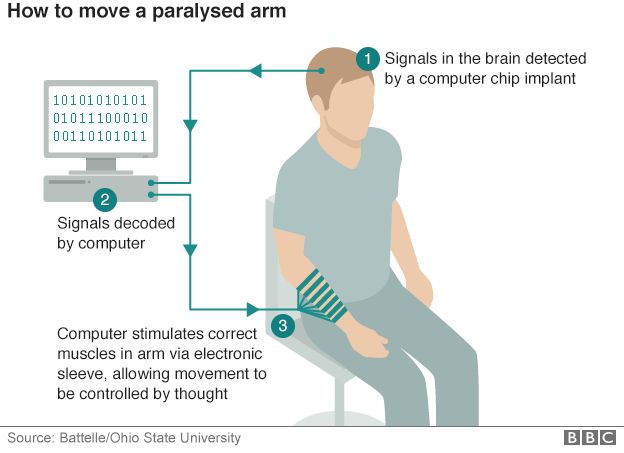

Researchers have been working on BCIs for quite some time, and they’ve delivered impressive results. In 2016, Battelle demonstrated the NeuroLife device, which used machine learning, a sleeve of electrodes to stimulate selected muscles in the arm, and a chip implanted in the brain to enable a paralyzed man to move his own arm. In 2014, researchers from the Johns Hopkins Applied Physics Laboratory created mind-controlled robotic prosthetics for a double amputee. In this case, targeted muscle reinnervation surgery reassigned nerves that once controlled the patient’s arm and hand, enabling the nerves to control the prosthetic devices.

These breakthroughs have the potential to greatly improve the lives of paralysis patients and amputees. But these systems are specialized for each patient, and the procedures are highly invasive. Both of these amazing feats of engineering and science required the patient to undergo surgery, and every surgery carries risks for the patient.

Steps are also being taken to reduce the surgical intervention required. Elon Musk’s company, Neuralink, is working on a less invasive BCI that replaces chips in the brain with “threads”. Musk shared some of the details behind the proposed system, including a sewing-machine-like robot that would insert the threads into the patient’s brain.

For now, surgery would still be required. According to The New York Times, “The company says surgeons would have to drill holes through the skull to implant the threads. But in the future, they hope to use a laser beam to pierce the skull with a series of tiny holes.”

Neuralink’s system would utilize a small computer behind the ear. The computer would be attached to small wires that extend into the brain. Image credit: Neuralink.

Non-invasive BCI

Non-invasive techniques have been developed, but they have provided only limited control of the external device, typically a robot. These systems couldn’t access enough of the brain’s signals to offer reliable operation. Now, researchers from Carnegie Mellon University (CMU) and the University of Minnesota have developed a non-invasive BCI system that smoothly controls a robotic arm in real time.

According to Design Engineering, “BCIs without brain implants are less complicated and dangerous to install but typically produce sloppier control. The Carnegie Mellon team says the enhanced accuracy and responsiveness of their non-invasive system could hold great promise for paralyzed patients or those with severe motor limitations.”

The team recently published their research in Science Robotics.

Smooth operator!

In order to achieve smooth operation of the robotic arm, the BCI must be able to reliably read (sense) and decode sufficient neural signals from the patient. The CMU team used electroencephalography (EEG) as a sensing method: The study participants wore a cap that monitored their EEG signals.

For the “brain” side of the BCI equation, they trained 33 able-bodied participants with the system for the study. For the “computer” side, the team utilized machine learning to account for differences in each participant’s skill level.

Decoding the EEG signals was key to the study’s success. The team used electrical source imaging (ESI), a technique that uses the electrical properties and geometry of each participant’s head to estimate where in the brain the signals recorded at the scalp originate. For ESI, the MATLAB-based Brainstorm toolbox used the MRI data from each participant along with the EEG electrode locations to improve the neural decoding. The signal processing was then completed with custom MATLAB scripts.
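To make the idea behind ESI more concrete, here is a minimal Python sketch of a regularized minimum-norm inverse, one common family of source-imaging methods. It is an illustration under stated assumptions, not the study's pipeline: the article does not say which inverse method the team used, and their work was done in Brainstorm and MATLAB. The lead field matrix (which in practice would be derived from each participant's MRI and electrode locations), the source values, the regularization value, and all function names below are invented for this example.

```python
# Illustrative sketch only: a regularized minimum-norm inverse.
# Assumption: sensor readings x relate to source amplitudes s by x = L s,
# where L is a "lead field" matrix. All numbers here are invented.


def transpose(a):
    return [list(row) for row in zip(*a)]


def matmul(a, b):
    # a is m x k, b is k x n; returns m x n.
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]


def solve(a, b):
    """Solve a @ x = b for square a using Gauss-Jordan elimination."""
    n = len(a)
    aug = [list(a[i]) + list(b[i]) for i in range(n)]
    for col in range(n):
        # Partial pivoting: move the largest remaining entry onto the diagonal.
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        p = aug[col][col]
        aug[col] = [v / p for v in aug[col]]
        for r in range(n):
            if r != col:
                f = aug[r][col]
                aug[r] = [vr - f * vc for vr, vc in zip(aug[r], aug[col])]
    return [row[n:] for row in aug]


def minimum_norm_estimate(lead_field, eeg, lam=1e-6):
    """Return s_hat = L^T (L L^T + lam * I)^-1 x for sensor readings x."""
    lt = transpose(lead_field)
    gram = matmul(lead_field, lt)
    for i in range(len(gram)):
        gram[i][i] += lam  # Regularization keeps the inversion stable.
    weights = solve(gram, [[v] for v in eeg])
    return [row[0] for row in matmul(lt, weights)]


# Hypothetical lead field: 3 electrodes (rows) x 4 cortical sources (columns).
LEAD_FIELD = [
    [0.9, 0.3, 0.1, 0.0],
    [0.2, 0.8, 0.3, 0.1],
    [0.0, 0.2, 0.7, 0.9],
]

true_sources = [1.0, 0.0, 0.0, 0.5]  # invented "ground truth" activity
eeg = [sum(l * s for l, s in zip(row, true_sources)) for row in LEAD_FIELD]

estimate = minimum_norm_estimate(LEAD_FIELD, eeg)
# Project the estimate back to the scalp to check that it explains the data.
reprojected = [sum(l * s for l, s in zip(row, estimate)) for row in LEAD_FIELD]
print("estimated sources:", [round(v, 3) for v in estimate])
print("reprojected EEG:  ", [round(v, 3) for v in reprojected])
```

With fewer electrodes than sources, many source patterns explain the same scalp recording, so the estimate will generally not equal `true_sources`. The minimum-norm solution simply picks the smallest-amplitude pattern that reproduces the measurements, which is why accurate head geometry matters so much for the result.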

According to the published study, “Dramatic improvements in offline neural decoding have been observed when using ESI compared with traditional sensor techniques.”

MATLAB was also used for the statistical analysis in the study. And the results were impressive! The team’s unique approach to solving this problem not only enhanced BCI learning by nearly 60% but also enhanced continuous tracking of the computer cursor by over 500%.

“Despite technical challenges using noninvasive signals, we are fully committed to bringing this safe and economic technology to people who can benefit from it,” Bin He, lead researcher for this study and head of the Biomedical Engineering Department at Carnegie Mellon, said. “This work represents an important step in noninvasive brain-computer interfaces, a technology that someday may become a pervasive assistive technology aiding everyone, like smartphones.”
