Computer vision algorithm removes the water from underwater images

Underwater photography is hard to get right. Special filters, artificial lights, and top-of-the-line underwater cameras can help, but there’s still a lot of water between the camera and the object in the photo. We’ve become accustomed to the blue-green tint of underwater photography.

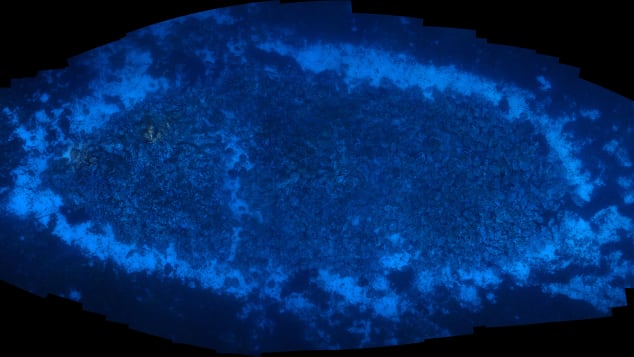

Before: A coral reef in the Red Sea, Israel. Image Credit: Matan Yuval, Marine Imaging Lab, University of Haifa

How would the ocean look without water? What are the true colors of a coral reef? Thanks to a new computer vision algorithm called Sea-thru, we can see what an underwater scene would look like if it were photographed through the air instead of water.

After: The same image as above, after processing with Sea-thru algorithm. Image Credit: Matan Yuval, Marine Imaging Lab, University of Haifa

Sea-thru removes the visual distortion that occurs as light travels through the water to produce a color-accurate image. Sea-thru was developed by Dr. Derya Akkaynak, an engineer and oceanographer, and Dr. Tali Treibitz, an electrical engineer. The results are not only stunning but also physically accurate.

According to Scientific American, “Sea-thru’s image analysis factors in the physics of light absorption and scattering in the atmosphere, compared with that in the ocean, where the particles that light interacts with are much larger. Then the algorithm effectively reverses image distortion from water pixel by pixel, restoring lost colors.”

Akkaynak developed Sea-thru as a post-doctoral fellow at the Marine Imaging Lab, run by Dr. Tali Treibitz, at the University of Haifa. Sea-thru is licensed to SeaErra Ltd.

The blue-green tint in underwater photos comes from the way light travels through deep water: blue and violet wavelengths are absorbed the least, while red, orange, and yellow wavelengths are absorbed the most. The more water the light travels through, the less red, yellow, and orange light reaches the object. In coastal waters, by contrast, blue and green light is absorbed faster, leaving relatively more red light and causing the dominant brown hue.
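To make the wavelength dependence concrete, here is a minimal sketch of exponential (Beer-Lambert) attenuation for three color channels. The coefficients in `ATTENUATION_PER_M` are hypothetical, illustrative values for clear water, not measurements from Sea-thru; the point is only that a channel with a larger coefficient fades much faster with distance.

```python
import math

# Hypothetical per-channel attenuation coefficients (1/m) for clear water.
# Illustrative values only; real coefficients vary with water type.
ATTENUATION_PER_M = {"red": 0.35, "green": 0.07, "blue": 0.02}


def fraction_remaining(coefficient: float, distance_m: float) -> float:
    """Fraction of light left after travelling distance_m through water
    (Beer-Lambert law: I / I0 = exp(-coefficient * distance))."""
    return math.exp(-coefficient * distance_m)


def surviving_light(distance_m: float) -> dict:
    """Fraction of red, green, and blue light left after distance_m."""
    return {
        channel: fraction_remaining(coef, distance_m)
        for channel, coef in ATTENUATION_PER_M.items()
    }


if __name__ == "__main__":
    # Red drops off far faster than green or blue as the path gets longer.
    for d in (1, 5, 10):
        fractions = surviving_light(d)
        print(d, {c: round(f, 3) for c, f in fractions.items()})
```

With these assumed numbers, only a few percent of the red light survives a 10 m path while most of the blue light does, which is why distant subjects look blue.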

There’s also the issue of small particles in the water, which scatter light toward the camera and create backscatter, or haze. The farther the photographer is from the object in the photo, the hazier the object becomes, much like looking at an object through a light fog.
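The growth of haze with distance is commonly modeled as a saturating exponential: backscatter starts at zero right in front of the lens and approaches a limiting "veiling light" value far away. The sketch below assumes that model; `veiling_light` and `beta_b` are hypothetical inputs, not values reported for Sea-thru.

```python
import math


def backscatter(distance_m: float, veiling_light: float, beta_b: float) -> float:
    """Backscatter (haze) added to a pixel, following the common model
    B(z) = B_inf * (1 - exp(-beta_b * z)).

    veiling_light (B_inf) is the value backscatter approaches far away;
    beta_b is a backscatter coefficient in 1/m. Both are hypothetical inputs.
    """
    return veiling_light * (1.0 - math.exp(-beta_b * distance_m))


if __name__ == "__main__":
    # Hypothetical values: haze grows quickly at first, then levels off.
    for z in (0.5, 2, 5, 10, 20):
        print(z, round(backscatter(z, veiling_light=0.4, beta_b=0.3), 3))
```

Because the haze term saturates, distant objects are not only darker but also washed out toward a single background color, much like the fog analogy above.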

How Sea-thru works

Sea-thru works on RAW images or videos taken with natural lighting, removing the need for expensive, difficult-to-set-up artificial underwater lighting. It also helps reconstruct the colors of objects that are farther from the light source, beyond the reach of underwater strobes.

Sea-thru is a physics-based color reconstruction algorithm designed for underwater RGB-D images, where D stands for the distance from the camera to the object. The algorithm requires the distance from the camera to the scene at every pixel, because almost all parameters governing the loss of color and contrast depend on distance in a nonlinear way.
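As we understand the published Sea-thru work, it builds on an underwater image formation model that, for each color channel c, adds an attenuated direct signal to a distance-dependent backscatter term. Here I_c is the observed pixel value, J_c the color the object would have without water, z the camera-to-object distance supplied by the D channel, B_c^∞ the veiling light, and β_c^D and β_c^B the attenuation and backscatter coefficients. Solving for J_c shows why the distance at every pixel is needed:

```latex
I_c = J_c\, e^{-\beta_c^{D} z} + B_c^{\infty}\left(1 - e^{-\beta_c^{B} z}\right), \qquad c \in \{R, G, B\}

J_c = \left[ I_c - B_c^{\infty}\left(1 - e^{-\beta_c^{B} z}\right) \right] e^{\beta_c^{D} z}
```

This is a simplified statement: in Sea-thru the two coefficients are not constants but depend on factors such as distance and the scene itself, so consult the paper for the exact form.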

For her research, Akkaynak created a distance (range) map for each scene by capturing multiple overlapping images of it with a single camera from slightly different angles. A color chart placed next to the object of interest is included in the images. Then, using the commercial photogrammetry software Agisoft Metashape Professional, she built a 3D reconstruction of each scene from these images and exported the distance maps once the reconstruction was complete.
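The article does not say whether the exported maps store the distance along each camera ray (range) or the distance along the optical axis (depth). If a tool exports depth, a minimal conversion to range under an ideal pinhole camera model looks like the sketch below. The intrinsics `fx`, `fy`, `cx`, `cy` are hypothetical inputs, and lens distortion is ignored.

```python
import numpy as np


def depth_to_range(depth: np.ndarray, fx: float, fy: float, cx: float, cy: float) -> np.ndarray:
    """Convert a depth map (distance along the optical axis) to a range map
    (straight-line distance from the camera center) for an ideal pinhole camera.

    fx, fy, cx, cy are camera intrinsics in pixels (hypothetical inputs here).
    """
    height, width = depth.shape
    # Pixel coordinate grids: u runs along columns, v along rows.
    u, v = np.meshgrid(np.arange(width), np.arange(height))
    # Direction of each pixel's ray, scaled so its z-component is 1.
    x = (u - cx) / fx
    y = (v - cy) / fy
    # Length of that ray per unit depth; multiply by depth to get range.
    return depth * np.sqrt(x**2 + y**2 + 1.0)


if __name__ == "__main__":
    depth = np.full((480, 640), 3.0)  # a flat wall 3 m in front of the camera
    ranges = depth_to_range(depth, fx=500.0, fy=500.0, cx=320.0, cy=240.0)
    print(ranges[240, 320], ranges[0, 0])  # center stays 3 m; corners are farther
```

The difference matters because light from an off-center pixel really does travel the longer, slanted path through the water.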

The darkest pixels used in the backscatter estimation are shown in red. Image credit: Akkaynak et al.

The range maps are grayscale .tiff files, which are read into MATLAB and combined with the RAW images, processed according to this guide. MATLAB is then used to estimate backscatter by searching the image for very dark or shadowed pixels: almost no light reflected from the object reaches the camera from these pixels, so their values are dominated by backscatter. In the image above, the darkest pixels used in backscatter estimation are shown in red. The estimated backscatter is then subtracted from the images in MATLAB.
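The dark-pixel idea can be sketched in a few lines: split the range map into distance bins, keep the darkest pixels in each bin, fit the saturating backscatter curve to them, and subtract the fit. This is a simplified illustration, not Sea-thru's MATLAB code. The bin count, the percentile, and the single-term model are our assumptions (the published method fits a richer model), and SciPy's `curve_fit` stands in for whatever solver the authors used.

```python
import numpy as np
from scipy.optimize import curve_fit


def backscatter_model(z, b_inf, beta_b):
    """Backscatter versus range: B(z) = B_inf * (1 - exp(-beta_b * z))."""
    return b_inf * (1.0 - np.exp(-beta_b * z))


def estimate_backscatter(channel, range_map, n_bins=10, percentile=1.0):
    """Fit the backscatter model to one color channel using its darkest pixels.

    channel: 2-D array of linear intensities; range_map: same shape, in meters.
    n_bins and percentile are illustrative choices, not values from the article.
    """
    values, ranges = channel.ravel(), range_map.ravel()
    edges = np.linspace(ranges.min(), ranges.max(), n_bins + 1)
    dark_z, dark_v = [], []
    for lo, hi in zip(edges[:-1], edges[1:]):
        in_bin = (ranges >= lo) & (ranges <= hi)
        if not np.any(in_bin):
            continue
        # The darkest pixels at a given range are assumed to hold (almost)
        # no light from the object, only backscatter.
        cutoff = np.percentile(values[in_bin], percentile)
        keep = in_bin & (values <= cutoff)
        dark_z.append(ranges[keep])
        dark_v.append(values[keep])
    dark_z, dark_v = np.concatenate(dark_z), np.concatenate(dark_v)
    (b_inf, beta_b), _cov = curve_fit(
        backscatter_model, dark_z, dark_v,
        p0=(dark_v.max(), 1.0), bounds=([0.0, 0.0], [np.inf, np.inf]),
    )
    return b_inf, beta_b


def remove_backscatter(channel, range_map, b_inf, beta_b):
    """Subtract the fitted backscatter, clipping negative results to zero."""
    return np.clip(channel - backscatter_model(range_map, b_inf, beta_b), 0.0, None)


if __name__ == "__main__":
    # Synthetic scene with hypothetical numbers: random reflectances, 5% of
    # pixels fully shadowed, true backscatter B_inf = 0.3 and beta_b = 0.4.
    rng = np.random.default_rng(0)
    z = rng.uniform(1.0, 12.0, size=(200, 300))
    reflectance = rng.uniform(0.0, 1.0, size=z.shape)
    reflectance[rng.random(z.shape) < 0.05] = 0.0
    observed = reflectance * np.exp(-0.2 * z) + backscatter_model(z, 0.3, 0.4)
    b_inf, beta_b = estimate_backscatter(observed, z)
    print(f"B_inf ~ {b_inf:.3f}, beta_b ~ {beta_b:.3f}")
    direct = remove_backscatter(observed, z, b_inf, beta_b)
```

Binning by distance matters: a single global "darkest pixel" would ignore the fact that the haze level itself changes with range.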

In the next step, Sea-thru computes the attenuation parameters for each wavelength of light using only the image pixels and the range map; this calculation is also done in MATLAB. With these parameters, Sea-thru inverts the attenuation pixel by pixel to reveal the true colors.
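As a rough illustration of "estimate the attenuation, then invert it", the sketch below swaps in a much simpler estimator than Sea-thru's: it assumes one constant coefficient per channel and assumes object colors are unrelated to distance, so the logarithm of the backscatter-free signal falls linearly with range. Both assumptions are ours, not the article's; Sea-thru treats the coefficient as varying with distance.

```python
import numpy as np


def estimate_attenuation(direct, range_map):
    """Estimate one constant attenuation coefficient (1/m) for a channel.

    Simplifying assumption (not Sea-thru's estimator): object colors are
    unrelated to distance, so log(direct) falls linearly with range and the
    negated slope of a least-squares line is the attenuation coefficient.
    """
    mask = direct > 0  # log is undefined for fully dark pixels
    slope, _intercept = np.polyfit(range_map[mask], np.log(direct[mask]), deg=1)
    return -slope


def invert_attenuation(direct, range_map, beta_d):
    """Undo exponential attenuation pixel by pixel: J = D * exp(beta_d * z)."""
    return direct * np.exp(beta_d * range_map)


if __name__ == "__main__":
    # Synthetic check with a hypothetical coefficient of 0.35 1/m.
    rng = np.random.default_rng(1)
    z = rng.uniform(1.0, 10.0, size=(100, 100))
    true_color = rng.uniform(0.3, 0.7, size=z.shape)
    direct = true_color * np.exp(-0.35 * z)
    beta_d = estimate_attenuation(direct, z)
    restored = invert_attenuation(direct, z, beta_d)
    print(f"beta_D ~ {beta_d:.3f}, max color error {np.abs(restored - true_color).max():.3f}")
```

In practice this would be run once per color channel on the backscatter-subtracted image; the inversion step grows exponentially with distance, so errors in the coefficient are amplified most for faraway pixels.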

A coral reef in the Red Sea, Israel. Image Credit: Matan Yuval, Marine Imaging Lab, University of Haifa

Sea-thru works on videos as well as still images. See a side-by-side video from Lake Tanganyika, Zambia below:

Video credit: Alex Jordan

Accurate Images Help Researchers

Climate researchers and oceanographers study underwater ecosystems such as coral reefs to better understand both their current state and how these systems change over time. These studies often rely on imaging and data technology to document and understand the impact of climate change on corals and other marine systems. Sea-thru can help these efforts by providing color-accurate image data. For example, accurate colors could make it easier for machine learning tools to identify species in the images.

Sea-thru differs from applications such as Photoshop, in which users can artificially enhance underwater images by uniformly boosting reds or yellows.
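A toy example shows why a uniform boost cannot do what a distance-aware correction does. The sketch assumes a single hypothetical red attenuation coefficient and ignores backscatter; the "uniform boost" is a caricature of the kind of global adjustment described above, not a model of any particular Photoshop tool.

```python
import math

BETA_RED = 0.35  # hypothetical red attenuation coefficient, 1/m


def observed_red(true_red, distance_m):
    """Red value reaching the camera after attenuation (backscatter ignored)."""
    return true_red * math.exp(-BETA_RED * distance_m)


def uniform_boost(value, gain):
    """Global correction: the same gain for every pixel."""
    return value * gain


def range_aware(value, distance_m):
    """Physics-based correction: the gain depends on each pixel's distance."""
    return value * math.exp(BETA_RED * distance_m)


if __name__ == "__main__":
    true_red = 0.6  # the same coral color seen at two distances
    near, far = observed_red(true_red, 2.0), observed_red(true_red, 8.0)
    gain = true_red / near  # gain tuned so the near coral looks right
    print(uniform_boost(near, gain), uniform_boost(far, gain))  # far one still too dark
    print(range_aware(near, 2.0), range_aware(far, 8.0))        # both close to 0.6
```

Any single gain that fixes the near coral leaves the far one too dark (or over-corrects the near one), because the color loss depends on how much water lies between each pixel and the camera.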

“What I like about this approach is that it’s really about obtaining true colors,” Pim Bongaerts, a coral biologist at the California Academy of Sciences, told Scientific American. “Getting true color could really help us get a lot more worth out of our current data sets.”

A coral reef in the Red Sea, Israel. Image Credit: Matan Yuval, Marine Imaging Lab, University of Haifa

“There are a lot of challenges associated with working underwater that put us well behind what researchers can do above water and on land,” says Nicole Pedersen, a researcher on the 100 Island Challenge, a project at the University of California, San Diego. For this project, scientists take up to 7,000 pictures per 100 square meters to assemble 3D models of reefs.

“Progress has been hindered by a lack of computer tools for processing these images,” Pedersen told Scientific American. “Sea-thru is a step in the right direction.”
