Integrated Design Projects at Cambridge Computer Science

By Dr Francesco Ciriello, Education Customer Success Engineer based in Cambridge, UK.

Between January and March of 2021, I mentored two student teams from the University of Cambridge on their Part IB Group Design Projects. Each team spent seven weeks developing a project based on an initial brief I outlined. Both teams displayed exceptional skills, creativity and work ethic. With little prior experience using MathWorks software, they were able to deliver complex integration projects that combined MATLAB, Simulink, Simscape Multibody, Unreal Engine 4 and a suite of other toolboxes. Connell and I were eager to get the teams to share their experiences. Here is the story they had to tell us.

Project #1: Consignment Tetris

By Connor Redfern, Marcus Alexander Karmi September, Ana Radu, Viktor Mirjanic, Mohammed Imtiyaz Miah, Joe O’Connor, Department of Computer Science & Technology, University of Cambridge, Cambridge, UK [Winner of Most Impressive Technical Achievement]

3D printing is cool, right? With a 3D printer and some know-how, you can make anything – people have made art, miniature cities, molecular models and even clothing! However, it can also be expensive, so there are many firms that will do the printing for you, and these firms face a problem: how can they automate their packing process when the things they produce have no standard shape or size? This is where our project comes in, a robot which determines an efficient way to pack items into a box for shipping.

Building a MATLAB Robot

An autonomous system can be roughly divided into four subsystems, which operate in a loop: sensing (taking input), planning (deciding what to do), controlling (figuring out how to carry out the plan) and actuating (enacting the plan). Our simulated robot has similar subsystems: an image processor, which scans the environment and outputs the position and size of objects on the conveyor; a process optimizer, which figures out where the objects should be placed; and a planning and control module, which handles robot movement. Of course, we also had to set up the environment the robot would be working in – conveyors, shipping boxes, items and the robot itself.

Diagram representation of data flow in our project

Delta Robot

A delta robot is a relatively common robot design used for “pick and place” operations: picking something up and placing it elsewhere. This, combined with its relative simplicity, made it a good candidate for our project. The design itself consists of a base, three arms and a head:

The base has three motors, which enable the arms to move independently. The design ensures that the “head” is always parallel to the base, so moving the arms will only move the head in 3D space; no rotation occurs.

Our robot is equipped with a small vacuum on the head, applying a suction force to nearby objects – this is what is used to pick up/put down objects. In a real-world scenario, the best head to use would be determined by the material and maximum dimensions of the prints, but a vacuum makes for a nice stand-in.

While not directly attached to the delta robot, our system also incorporates two conveyor belts, which are controlled by the same system as the robot. One of the conveyors is an input conveyor, where items to be packed arrive, and the other holds boxes that the robot then packs.

Image Processor

There are a variety of sensors in a real-world environment, and a variety of ways to simulate them in Simulink. We went with a relatively simple approach: making use of Simscape Multibody Transform Sensors. Transform Sensors can be used to measure a combination of 3D position and rotation between reference frames. PS-Simulink blocks can be used to convert sensor outputs into signals. In the figure below, you can see the transform sensors for the important objects in the setup – these dataflows are then marshalled by MATLAB function blocks before being fed into other subsystems, such as the control module and the process optimiser.

Process Optimizer

The process optimiser subsystem takes in the coordinates of all the objects currently in the packing box, together with the dimensions of their cuboid bounding boxes. It then needs to output a position where the item can fit while also considering where future items might be placed. To do this, we must solve two problems: How do we know what spaces of the packing box are empty? And in which of these empty spaces is it optimal to place the item?

By projecting a cross section of the items in the packing box, we get a 2D shadow of the occupied space at a particular height. We compute the empty space in this 2D plane, which is a more manageable problem compared to the 3D variant. By doing this for cross sections at several different heights in the packing box, we get a 3D image of the empty space in the entire packing box.

This results in a set of empty spaces in the packing box, and we place the item at the lowest height possible. If there are several candidate positions at the same height, we place the item as close to the corner as possible. Researchers have found this to be a good heuristic, so we expect this method to pack items densely, although, like any heuristic, it does not guarantee an optimal packing.
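As a rough illustration of the placement rule described above, here is a minimal Python sketch. It assumes the packing box is discretised into unit cells and that every item is an axis-aligned cuboid with integer dimensions; the names `make_box`, `fits`, `find_placement` and `place` are hypothetical and are not taken from the team's MATLAB code.

```python
from itertools import product

def make_box(nx, ny, nz):
    """Empty occupancy grid: occupied[x][y][z] is True where a cell is filled."""
    return [[[False] * nz for _ in range(ny)] for _ in range(nx)]

def fits(occupied, pos, size):
    """True if a cuboid of `size` at `pos` stays inside the box and overlaps nothing."""
    (x, y, z), (dx, dy, dz) = pos, size
    nx, ny, nz = len(occupied), len(occupied[0]), len(occupied[0][0])
    if x + dx > nx or y + dy > ny or z + dz > nz:
        return False
    return not any(occupied[i][j][k]
                   for i in range(x, x + dx)
                   for j in range(y, y + dy)
                   for k in range(z, z + dz))

def find_placement(occupied, size):
    """Scan cross sections from the bottom up; at the first height with any
    free spot, return the spot closest to the (0, 0) corner."""
    nx, ny, nz = len(occupied), len(occupied[0]), len(occupied[0][0])
    for z in range(nz):  # lowest height first
        candidates = [(x, y) for x, y in product(range(nx), range(ny))
                      if fits(occupied, (x, y, z), size)]
        if candidates:
            # Squared distance to the corner; ties broken by (x, y) order.
            x, y = min(candidates, key=lambda p: (p[0] ** 2 + p[1] ** 2, p))
            return (x, y, z)
    return None  # the item does not fit anywhere

def place(occupied, pos, size):
    """Mark the cells covered by the item as occupied."""
    (x, y, z), (dx, dy, dz) = pos, size
    for i, j, k in product(range(x, x + dx), range(y, y + dy), range(z, z + dz)):
        occupied[i][j][k] = True
```

A caller would alternate `find_placement` and `place` for each item arriving on the conveyor. The brute-force scan is only practical for small grids; it is meant to show the "lowest height, then nearest corner" ordering rather than an efficient empty-space computation.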

Planning and Control

The planning and control module is the heart of the autonomous system. It takes sensory input from the image processor, containing information about the next item to be packed, and a desired end position for the item, which is sent by the process optimiser. The planning and control module has, as the name implies, two phases: planning and control. When planning, it needs to produce a path – a sequence of positions from its current location to its desired location. Once a path has been calculated, it then moves into the control phase – calculating the precise torques (rotational forces) the motors need to apply to move the arms smoothly along the path.
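To make the idea of a path as "a sequence of positions" concrete, here is a small Python sketch of the planning phase only. The lift, traverse, lower shape of the path, the function name `plan_path` and the `clearance_z` parameter are assumptions made for illustration; the source does not describe the exact path shape the team used, and the control phase (computing motor torques) is not covered here.

```python
def plan_path(start, goal, clearance_z, steps_per_segment=5):
    """Return a list of (x, y, z) positions from start to goal.

    The head first rises to clearance_z, then moves horizontally,
    then descends onto the goal. Each leg is linearly interpolated.
    """
    corners = [
        start,
        (start[0], start[1], clearance_z),  # lift straight up
        (goal[0], goal[1], clearance_z),    # traverse at a safe height
        goal,                               # lower onto the target
    ]
    path = [start]
    for a, b in zip(corners, corners[1:]):
        for i in range(1, steps_per_segment + 1):
            t = i / steps_per_segment
            path.append(tuple(pa + t * (pb - pa) for pa, pb in zip(a, b)))
    return path
```

A controller would then track these positions one after another; finer interpolation gives smoother motion at the cost of more setpoints.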

Challenges

One of the key issues we were faced with throughout the project was simulation speed. The main culprits were contact forces between items and the shipping box. These forces had undamped micro-oscillations which drastically slowed down our simulation. Sometimes we were running it over 500x slower than real-time. The environment had to go through multiple revisions until the speed became manageable. This problem impacted the control subsystem as well. In order to compensate for slow simulation, we increased the step size limit of our solver. This really did speed things up, but now our delta robot was numerically unstable! Watching it chaotically dance around was mesmerizing, but we had to do something about it. Eventually, we found just the right path along which the robot movement is stable. But, if it were to move just a few centimeters higher, everything would break.
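The trade-off between step size and stability can be seen in a toy problem. The Python sketch below applies the explicit Euler method to the stiff decay equation x' = -k·x, which loosely stands in for a stiff contact force. This is an illustration under stated assumptions only: the source does not say which Simulink solver was used, and the function name and the numbers are hypothetical. For this equation, explicit Euler is stable only when the step is smaller than 2/k.

```python
def euler_decay(stiffness, step, n_steps, x0=1.0):
    """Integrate x' = -stiffness * x with explicit Euler and return the final x.

    Each step multiplies x by (1 - step * stiffness), so the result decays
    when |1 - step * stiffness| < 1 and blows up otherwise.
    """
    x = x0
    for _ in range(n_steps):
        x += step * (-stiffness * x)
    return x

# With stiffness 1000 the stability limit is step < 0.002.
small_step = euler_decay(stiffness=1000.0, step=0.0005, n_steps=100)  # decays towards 0
large_step = euler_decay(stiffness=1000.0, step=0.003, n_steps=100)   # oscillates and grows
```

The true solution always decays, so the growth seen with the larger step is purely numerical, which is the same kind of effect that makes a simulated mechanism appear to move chaotically.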

Conclusion

There is no need to waste time figuring out how to pack your 14 newly 3D printed dinosaurs. We have a system that figures this out, as well as doing it for you using a robotic arm! We enjoyed developing this system and learned a lot, both about autonomous systems and about tools like MATLAB, Simulink and Simscape that helped us realize such a system. Our code is available on GitHub and can be run on your own computer.

Project #2: West Cambridge Air Freight

By Naunidh Dua, Edward Weatherley, Tom Patterson, Tobi Adelena, Antonia Boca and Nikola Georgiev, Computer Science Department, University of Cambridge, Cambridge, UK (Runner up Most Impressive Professional Achievement)

Problem description

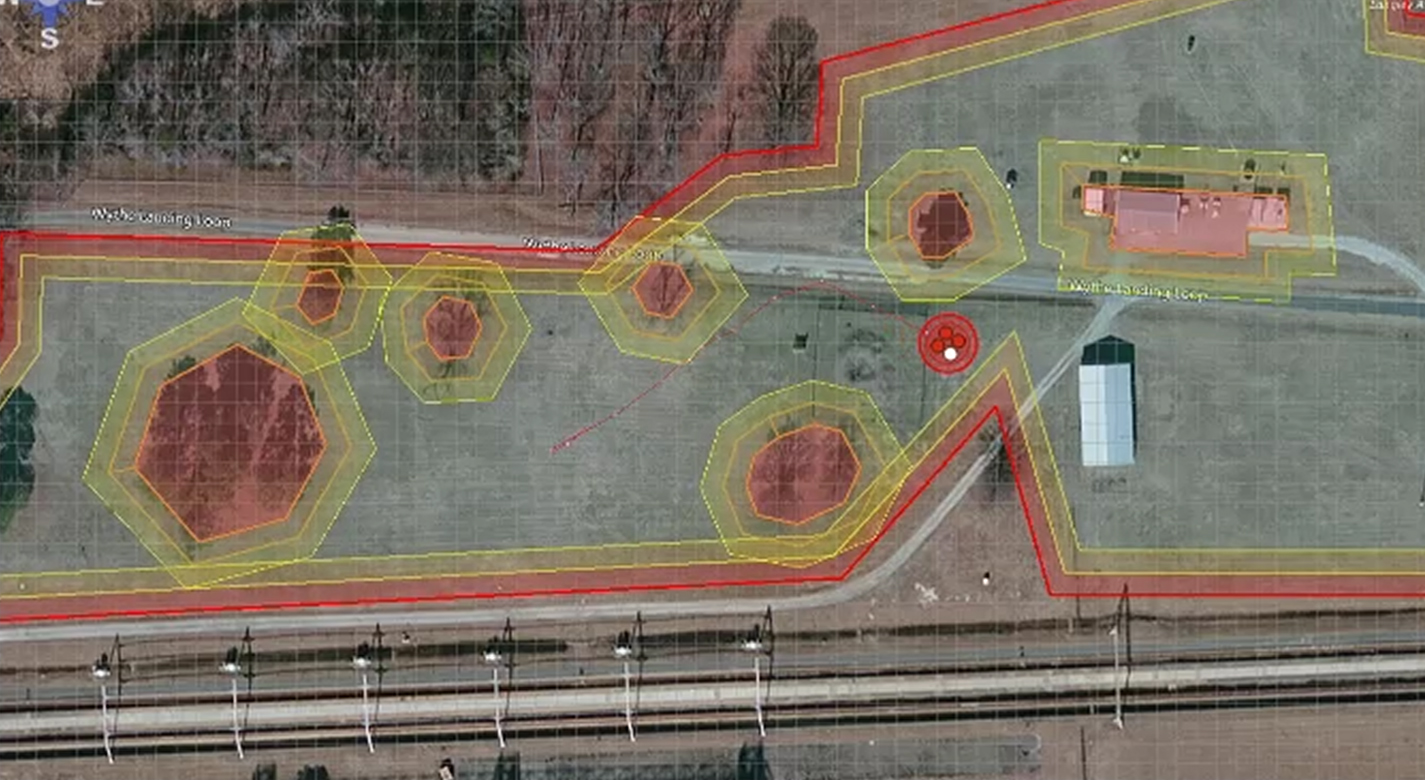

We were tasked with the development of an autonomous drone delivery system for light-weight package transportation around the West Cambridge site.

Implementation

We are presenting the project as an adaptation to an existing MathWorks example project, [UAV Package Delivery]. This project initially supported a drone flying according to specific instructions given as waypoints in QGroundControl, as well as obstacle avoidance on a path between two points. It was set in a photorealistic US City Block model taken from Chicago.

This system involves several components:

- A user interface allowing the specification of pickup and drop-off locations

- A 3D model of the West Cambridge site

- A routing algorithm calculating an efficient path to follow between the buildings

- A scheduling algorithm to decide the order in which delivery missions occur

- A MAVLink interface through which the ground control module could communicate with the drone

User Interface

We used MATLAB’s App Designer to create the new ground control UI necessary to input new missions for the drone. We found MATLAB’s geoaxes were ideal for plotting a map of West Cambridge, the drone and the different routes it follows. Our user interface is equipped with a set of named locations to choose from for pickup and delivery. It also allows users to specify their own locations in lat/long coordinates, which can be read off the axes.

As the main user-facing layer, the UI has to correctly take the user’s input and communicate it to the other components. We created callback functions that run when the drone lands and that update the drone’s position in the UI; these are triggered by logic in a Stateflow block in Simulink. A wrapper for the C++ scheduling algorithm (compiled into a MEX file) enables scheduling from the UI and caching of mission points and data. Once the scheduled deliveries are received, intermediate missions are added to fly the drone between deliveries, and the UI then sends an array of coordinates over a MAVLink interface.

West Cambridge Model

The model for West Cambridge was made in Blender using a GIS data plugin to import a ground texture, contours and basic building models. We then added some shaders and baked the building textures onto a single UV-mapped image that could be exported to Unreal. The Unreal project could then be packaged and inserted into the scenario block within the existing Simulink model, for use in the simulation.

Routing Algorithm

We chose the RRT* algorithm to calculate the routes between buildings. We could have instructed the drone to fly straight over the buildings; however, directing it around them provides a proof of concept for navigating a denser urban environment with much taller buildings. We chose RRT* because, in our tests, the A* algorithm took far too long to calculate a route for a delivery, since it searches for an optimal route. This made the drone seem unresponsive, as it paused for a long time before starting a mission. RRT*, on the other hand, computes a route much more quickly, and the extra distance the drone has to travel is not large enough to cancel out the time gained from the faster computation.
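For readers unfamiliar with sampling-based planners, here is a minimal Python sketch of a basic RRT in 2D. Note that this is plain RRT, not RRT*: it omits the rewiring step that lets RRT* shorten paths over time. The grid representation (a set of blocked cells), all function names and all parameter values are assumptions for illustration, not the team's implementation.

```python
import math
import random

def collision_free(a, b, blocked, samples=20):
    """Sample points along the segment a-b and reject it if any lies in a blocked cell."""
    for i in range(samples + 1):
        t = i / samples
        x = a[0] + t * (b[0] - a[0])
        y = a[1] + t * (b[1] - a[1])
        if (int(x), int(y)) in blocked:
            return False
    return True

def rrt(start, goal, blocked, size, step=1.0, max_iters=5000, goal_bias=0.1, seed=0):
    """Grow a tree from start by random sampling; return a path to goal or None."""
    rng = random.Random(seed)
    parent = {start: None}  # maps each tree node to its parent
    for _ in range(max_iters):
        # Occasionally aim straight at the goal to speed up convergence.
        if rng.random() < goal_bias:
            target = goal
        else:
            target = (rng.uniform(0, size), rng.uniform(0, size))
        nearest = min(parent, key=lambda n: math.dist(n, target))
        d = math.dist(nearest, target)
        if d == 0:
            continue
        # Step from the nearest node towards the sample, at most `step` far.
        scale = min(step, d) / d
        new = (nearest[0] + scale * (target[0] - nearest[0]),
               nearest[1] + scale * (target[1] - nearest[1]))
        if new in parent or not collision_free(nearest, new, blocked):
            continue
        parent[new] = nearest
        # Connect to the goal once a node is within one step of it.
        if math.dist(new, goal) <= step and collision_free(new, goal, blocked):
            if new != goal:
                parent[goal] = new
            path, node = [], goal
            while node is not None:
                path.append(node)
                node = parent[node]
            return path[::-1]
    return None

# A wall at x = 5 with a gap above y = 6 forces the route around the obstacle.
wall = {(5, y) for y in range(6)}
route = rrt((1.0, 1.0), (9.0, 1.0), wall, size=10)
```

The route returned is feasible but generally not the shortest, which matches the trade-off described above: a quick answer in exchange for some extra travel distance.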

We also needed to provide an occupancy map for the routing algorithm to work in, indicating where the buildings are. This was obtained using the Python API in Blender, allowing us to extract the vertices of the buildings, as well as sample the height of the ground at each point using ray-casting.
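One way to turn building vertices into an occupancy map is to rasterise each building footprint onto a grid. The Python sketch below does this with a standard ray-casting point-in-polygon test on cell centres. It is a standalone illustration that assumes the footprints have already been extracted as lists of (x, y) vertices; it does not use the Blender API, it ignores ground height, and the function names are hypothetical.

```python
def point_in_polygon(x, y, polygon):
    """Ray-casting test: count how many polygon edges a ray to the right crosses."""
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        if (y1 > y) != (y2 > y):  # edge straddles the horizontal line through y
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside

def occupancy_grid(footprints, width, height, cell=1.0):
    """Return grid[row][col] = True where the cell centre lies inside any footprint."""
    grid = [[False] * width for _ in range(height)]
    for row in range(height):
        for col in range(width):
            cx, cy = (col + 0.5) * cell, (row + 0.5) * cell
            grid[row][col] = any(point_in_polygon(cx, cy, p) for p in footprints)
    return grid

# One square building covering the middle of a 4 x 4 map.
building = [(1, 1), (3, 1), (3, 3), (1, 3)]
grid = occupancy_grid([building], width=4, height=4)
```

A smaller `cell` value gives a finer map at the cost of more cells for the routing algorithm to check.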

Scheduling Algorithm

Another important part of the project was designing an effective algorithm for scheduling multiple missions. There are multiple things to take into consideration, such as the battery life of a drone or whether we could design an algorithm for multiple drones.

After some research, we discovered that there exist some interesting libraries that tackle Vehicle Routing Problems which allow developers to add personalized constraints (one example is Google’s OR-Tools library). However, it turned out that integrating these within our MATLAB project was unfeasible, so we focused on finding an algorithm that would output good approximations of efficient routes.

The basic idea of the scheduling algorithm was to use the distances calculated by the routing algorithm mentioned above for each possible pair of pick-up points and delivery points given in the user’s interface. Of course, doing this in every call to the scheduler turns out to be inefficient, so we used caches to save some of these routes in case they are re-used.

Next, the algorithm tries a fixed number of random permutations of the list of missions and chooses the best arrangement. We defined the best arrangement to be the one that minimizes the total Euclidean distance calculated from the routes. The number of trials is a constant that can be modified – we set our constant to 100 (given the small scale of the project) but of course this could be tailored for larger sets of missions.

The decision to code this part of the project in C++ was mainly for convenience, and partly for speed. Data structures such as the C++ standard library vectors are easy to use for shuffling the order of missions.
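The scheduling approach described in this section can be sketched in a few lines. The version below is written in Python rather than the C++ the team used, and it makes several assumptions for illustration: missions are (pickup, drop-off) pairs, the drone starts from a hypothetical `depot` position, and `route_length` stands in for the routing algorithm's distance calculation. None of these names come from the team's code.

```python
import math
import random

def make_cached_distance(route_length):
    """Wrap an expensive route-length function so each pair is computed once."""
    cache = {}
    def distance(a, b):
        if (a, b) not in cache:
            cache[(a, b)] = route_length(a, b)
        return cache[(a, b)]
    return distance

def schedule(missions, depot, distance, trials=100, seed=0):
    """Try `trials` random orderings of the missions and keep the shortest one."""
    rng = random.Random(seed)

    def total(order):
        # Fly to each pickup, then to its drop-off, starting from the depot.
        pos, cost = depot, 0.0
        for pickup, dropoff in order:
            cost += distance(pos, pickup) + distance(pickup, dropoff)
            pos = dropoff
        return cost

    best = list(missions)
    best_cost = total(best)
    for _ in range(trials):
        candidate = list(missions)
        rng.shuffle(candidate)
        cost = total(candidate)
        if cost < best_cost:
            best, best_cost = candidate, cost
    return best, best_cost

# Straight-line distance stands in for the routed distance in this example.
distance = make_cached_distance(math.dist)
missions = [((0, 0), (10, 0)), ((10, 1), (0, 1)), ((0, 2), (10, 2))]
order, cost = schedule(missions, depot=(0, 0), distance=distance)
```

Random sampling does not guarantee the best ordering, but with only a handful of missions, 100 trials cover a large share of the possible permutations, which is consistent with the choice of constant described above.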

MAVLink interface

Communication between the drone and the ground control station was conducted using the MAVLink protocol. At the start of each mission, the ground control sends a sequence of instructions which contains the route of the drone that was produced by the routing algorithm and assigned to the drone through the scheduling algorithm. When the drone receives these mission instructions, it uses PID controllers to adjust its yaw, pitch and roll in order to move to the desired waypoint.
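For context, a PID controller computes a command from the current error, its accumulated integral and its rate of change. The Python sketch below shows a single-axis controller driving a very simple model in which the commanded rate is integrated into an angle. The gains, the plant model and the names `PID` and `simulate` are assumptions chosen for illustration; the team's controllers live in the Simulink model and are not reproduced here.

```python
class PID:
    """Textbook discrete PID controller for one axis."""

    def __init__(self, kp, ki, kd):
        self.kp, self.ki, self.kd = kp, ki, kd
        self.integral = 0.0
        self.prev_error = None

    def update(self, setpoint, measurement, dt):
        error = setpoint - measurement
        self.integral += error * dt
        # No derivative on the first call, since there is no previous error yet.
        if self.prev_error is None:
            derivative = 0.0
        else:
            derivative = (error - self.prev_error) / dt
        self.prev_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative

def simulate(setpoint=0.3, dt=0.01, steps=5000):
    """Drive a toy plant (angle' = commanded rate) towards the setpoint."""
    pid = PID(kp=2.0, ki=0.5, kd=0.05)  # hypothetical gains
    angle = 0.0
    for _ in range(steps):
        rate = pid.update(setpoint, angle, dt)
        angle += rate * dt
    return angle

final_angle = simulate()  # settles close to the 0.3 rad setpoint
```

A real drone would run one such loop per axis (yaw, pitch, roll), typically nested inside position and velocity loops, with gains tuned against the vehicle dynamics.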

When the drone lands at its destination, a land signal is sent back to the ground control station which triggers the scheduling algorithm to retrieve the next route and send it to the drone.

Testing

As with any project, it is necessary to ensure that each unit works on its own and that the units work together once integrated. We used MATLAB’s unit testing features to accomplish this. Testing showed us that the A* algorithm was too slow, and let us stress test the RRT* algorithm so that we could tune its parameters, increase the complexity of the routes it could find, and measure the speed increase from memoizing commonly used routes. We similarly tested and stress tested the other parts of the project, such as the scheduling algorithm and the UI.

Why Simulink?

Simulink was a vital tool for our project. It enabled us to make changes to the behaviour of the drone quickly using the provided toolbox blocks. In addition, we could iterate through the design process without worrying about risks that flying a real drone would introduce, such as during tests of our routing algorithm. Simulink also contains a variety of tools that we used to debug our design, such as the Data Inspector.

We used the Data Inspector to analyse the wire signals within the drone to ensure that the drone is always in a valid state.

