Numerical Analyst John D’Errico Takes a Stroll Through Cody

I think of the wise and generous John D’Errico as a computational philanthropist. After a long career as a numerical analyst at Kodak, he retired to what could have been complacent indifference to the outside world. But that’s not the kind of man that John D’Errico is. He wanted to volunteer his time to make other people more skillful in their computational careers. We here at MATLAB Central are lucky to have a man of his talents and motivations as a resident scholar and coach. After playing around with Cody recently, John sent us this note, which, with his permission, I am happy to publish here.

It’s fun to follow along behind an expert, because even with a seemingly simple problem like summing consecutive integers, there’s always more going on than you first realize. And there’s always a new approach to the problem that you would have never considered.

Thanks John!

-Ned.

Some Thoughts on Cody

by John D’Errico

As an occasional Project Euler solver who has solved several hundred problems (all but one of them in MATLAB; the odd one out I did by hand), I find Cody intriguing. So I spent a little time with Cody yesterday. Here are my thoughts, which perhaps you might want to pass along.

Really, mainly I looked at one problem, a rather arbitrary one from the top of the stack. I picked problem 189 to look at. The question was to compute the sum of the integers from 1 to 2^n.

Clearly the simple solution (in fact, one might argue, the classic MATLAB solution) is simply

y = sum(1:2^n);

Of course this is fast to compute, easy to read, and it is obvious what the code does. This is the solution I would almost always jump to, EXCEPT when n is large. For example, what happens if n is 25? 100? The classic solution above will fail to return a result for n=100 before the universe dies from heat death, as 2^100 is such a huge number that no modern computer could ever finish the computation. And simply trying to generate a vector of length 2^100 will exceed the RAM in any computer we could ever imagine. So the classic solution fails miserably for SOME n. On my machine, I get the following times:

    >> timeit(@() sum(1:2^5))
    ans = 1.2278e-06
    >> timeit(@() sum(1:2^10))
    ans = 4.911e-06
    >> timeit(@() sum(1:2^15))
    ans = 7.7062e-05
    >> timeit(@() sum(1:2^20))
    ans = 0.002765
    >> timeit(@() sum(1:2^25))
    ans = 0.1736

As you can see, when n starts to be large enough that the vectors are significantly large, the time also becomes significant, and does so quickly. I would not bother to try it for n=30. The point is that the classic solution fails because it uses an algorithm that is O(2^n). Of course algorithms that suffer from such exponential growth are problematic. This one is fine as long as n is never larger than around 15-20. But exponential run time hits a computational brick wall very quickly.
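The memory side of that brick wall is easy to estimate. The following is only a rough sketch, assuming the vector 1:2^n is stored as doubles at 8 bytes per element; the variable names are chosen here for illustration.

```matlab
% Rough memory estimate for the vector 1:2^n, assuming 8-byte doubles.
n   = [20 25 30 100];
gib = 8 * 2.^n / 2^30;     % bytes converted to GiB

% Print one line per value of n (fprintf cycles through the columns).
fprintf('n = %3d: about %.3g GiB\n', [n; gib]);
```

Under that assumption n = 25 already needs a quarter of a GiB for the vector alone, and n = 30 needs 8 GiB, which is consistent with not bothering to time that case.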

The solution might be to choose to look more closely at the problem. Is there a mathematical way to reduce the time needed? Clearly, the necessary sum is easy to compute IF one recognizes that for any positive integer n, one can write the integers in the set [1:2^n] as:

[1:2^(n-1) , 2^(n-1) + (1:2^(n-1))]

This merely reflects the binary expansion of these numbers. However it also gives us a nice way to write the desired sum in a recursive fashion. That is, we can write the solution as:

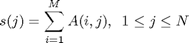

sum_int(n) = 2*sum_int(n-1) + (2^(n-1))^2
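To spell out where the recurrence comes from: write S(n) for the sum of the integers from 1 to 2^n (S is a name introduced here purely for illustration). Each entry in the second half of the split is 2^(n-1) plus the matching entry of the first half, so:

```latex
\begin{aligned}
S(n) &= \sum_{j=1}^{2^{n-1}} j \;+\; \sum_{j=1}^{2^{n-1}} \left(2^{n-1} + j\right) \\
     &= S(n-1) + 2^{n-1}\cdot 2^{n-1} + S(n-1) \\
     &= 2\,S(n-1) + \left(2^{n-1}\right)^{2}, \qquad S(0) = 1 .
\end{aligned}
```

As a quick check, S(1) = 2*1 + 1 = 3 and S(2) = 2*3 + 4 = 10, which match 1+2 and 1+2+3+4.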

So a better code, at least for purely non-negative integer n, might be something like this:

    function y = sum_int(x)
    % For non-negative integer input x, compute the sum of the integers 1:2^x
    if x == 0
        % special case to end the recursion
        y = 1;
    else
        y = 2*sum_int(x - 1) + 2^(2*x-2);
    end

As the timings below show, sum_int as written above is actually faster to run, even for n as small as 5, and the time seems to be linear in n. As one should recognize, sum_int is indeed an O(n) algorithm as written above.

    >> timeit(@() sum_int(5))
    ans = 7.5692e-07
    >> timeit(@() sum_int(10))
    ans = 1.7227e-06
    >> timeit(@() sum_int(15))
    ans = 2.6715e-06
    >> timeit(@() sum_int(20))
    ans = 3.7462e-06
    >> timeit(@() sum_int(25))
    ans = 4.6515e-06
    >> timeit(@() sum_int(100))
    ans = 1.772e-05

In fact, one can even use sum_int to compute the exact value for sym input. Of course sym slows it down, but even that takes only 1/2 second to compute.

    >> timeit(@() sum_int(sym(100)))
    ans = 0.54818
    >> sum_int(sym(100))
    ans = 803469022129495137770981046171215126561215611592144769253376
    >> sum_int(sym(1000))
    ans = 57406534763712726211641660058884099201115885104434760023882136841288313069618515692832974315825313495922298231949373138672355948043152766571296567808332659269564994572656140000344389574120022435714463495031743122390807731823194181973658513020233176985452498279081199404472314802811655824768082110985171698215120385828833994926435042413009836474525915918251720110295164670516628746730606637774712420949241272862285974797636825655630482650144583914557794004353013244164891007287546556882572164179254268468766736029354798734808092593495610026430676423398598185956607671495251600324809039074511028408549376

An interesting question is whether n should be an integer. Should the code produce an error when n is not an integer? Or should it merely produce a result for any non-negative floating point number? My point is that error checking is an important part of good code, as is good documentation that indicates the behavior of the code under all circumstances. As written, sum_int will fail when n is 2.5, or pi, or any other non-integer: the recursion steps past zero without ever hitting the x == 0 special case, so it stops only when MATLAB aborts the runaway recursion with an error.
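One possible answer to that question is to reject bad input up front. The following is only a sketch of that choice, not the way the original code handles it: the names sum_int_checked and recurse are hypothetical, and requiring a numeric scalar is an assumption that also rules out sym input.

```matlab
function y = sum_int_checked(x)
% Hypothetical variant of sum_int that validates its input before recursing.
% Assumption: only real, numeric, non-negative integer scalars are accepted,
% so sym input (which the original sum_int handles) is rejected here.
validateattributes(x, {'numeric'}, {'scalar', 'real', 'integer', 'nonnegative'});
y = recurse(x);
end

function y = recurse(x)
% Same recurrence as sum_int; x is known to be a non-negative integer here.
if x == 0
    y = 1;   % special case to end the recursion
else
    y = 2*recurse(x - 1) + 2^(2*x - 2);
end
end
```

With this check, a call such as sum_int_checked(2.5) or sum_int_checked(-1) raises an error immediately instead of recursing until MATLAB gives up. Whether to error out or to define a result for non-integer n is a design decision that the documentation should state either way.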

Next, a serious problem with SOME recursive schemes is that they can suffer from exponential growth themselves. E.g., using naive recursion to compute the n'th Fibonacci number is a classically poor way to solve the problem. A simple loop (thus O(n)) suffices for reasonably small Fibonacci numbers, although for huge n, there are better schemes to compute Fibonacci numbers that are only O(log2(n)) in complexity. Regardless, one can re-write the function sum_int with a for loop even though the recursive nature of the code is not truly an issue for this problem.

    function y = sum_int2(x)
    % For non-negative integer input x, compute the sum of the integers 1:2^x
    y = 1;
    for k = 1:x
        y = 2*y + 2^(2*k-2);
    end

This simpler, non-recursive code is as easy to read as the recursive code, and it will run more quickly yet, since it does not suffer from recursive function call overhead. However, it will not produce correct results for sym input. One could fix that problem too, with some effort.
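As an aside on the Fibonacci remark above, the simple O(n) loop can be sketched as follows. The function name fib_loop and the convention F(1) = F(2) = 1 are assumptions made here for illustration; they do not come from the original note.

```matlab
function f = fib_loop(n)
% Hypothetical helper: n'th Fibonacci number for integer n >= 1,
% using the convention F(1) = F(2) = 1. Runs in O(n) steps.
a = 0;   % F(0)
b = 1;   % F(1)
for k = 2:n
    % Advance one step: (F(k-1), F(k)) from (F(k-2), F(k-1)).
    [a, b] = deal(b, a + b);
end
f = b;
end
```

For example, fib_loop(10) returns 55. In double precision the result is exact only while it stays below 2^53, which is one reason the text speaks of "reasonably small" Fibonacci numbers.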

Okay, is this the end of such a discussion? Of course not. We can return to the famous solution by Gauss, that recognizes the natural symmetry in the problem. Thus if we write the sum of the integers

0,1,2, … 2^n-2, 2^n-1, 2^n

then we can compress the sum down by adding them in a different order.

(0 + 2^n) + (1 + (2^n - 1)) + (2 + (2^n - 2)) + …

So, the famous formula (attributed to Gauss) for the sum of the integers from 0 to k, can be written as

k*(k+1)/2
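The pairing argument can be written out in a couple of lines. This is just the standard derivation, with j used as a summation index introduced here:

```latex
\begin{aligned}
2\sum_{j=0}^{k} j &= \sum_{j=0}^{k} \bigl(j + (k - j)\bigr) = (k+1)\,k, \\
\sum_{j=0}^{k} j &= \frac{k\,(k+1)}{2}, \qquad
\text{so with } k = 2^{n}: \quad
\sum_{j=1}^{2^{n}} j = \frac{2^{n}\left(2^{n}+1\right)}{2} = 2^{2n-1} + 2^{n-1}.
\end{aligned}
```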

Therefore we can write a simpler version yet of this code:

sum_int3 = @(n) 2^n*(2^n+1)/2;

This works because the sum of the integers 0:k is the same as the sum of the integers 1:k. Of course, it produces the correct result, and it is quite fast. As well, it works for any class of input that supports the necessary arithmetic operations.
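A quick sanity check of the closed form against the brute-force sum might look like the sketch below. The variable names exact_at_52 and lost_at_53 are introduced here for illustration, and the second half shows a caveat that is an observation about double precision arithmetic rather than something the original note discusses.

```matlab
% Closed-form solution from the text.
sum_int3 = @(n) 2^n*(2^n+1)/2;

% Compare against the brute-force sum for sizes where 1:2^n is still cheap.
for n = 0:20
    assert(sum_int3(n) == sum(1:2^n));
end

% Caveat for double input: 2^n + 1 is no longer representable once 2^n
% reaches 2^53 (the value MATLAB returns from flintmax), so the "+1" is lost.
exact_at_52 = (2^52 + 1) ~= 2^52;   % true: the +1 survives
lost_at_53  = (2^53 + 1) == 2^53;   % true: the +1 is rounded away
```

So for double input the formula is fast but silently inexact for very large n, while sym input, as in the text, keeps the result exact.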

    >> sum_int3(sym(100))
    ans = 803469022129495137770981046171215126561215611592144769253376
    >> timeit(@() sum_int3(sym(100)))
    ans = 0.0031585

So any in-depth discussion of the problem should explain issues like this. I'd argue that virtually every problem in Cody could benefit from a discussion like this, where the reader can learn how to recognize when a simple algorithm that does quite well for some inputs may still fail miserably for other inputs.

Should Cody have some mechanism for expert solvers to explain issues like this? I guess that is up to you to decide, but I would argue that an earnest student could learn a great deal from such a discussion.

In summation, I realize the lessons in my comments are fairly classic:

When writing code, your goal at first should be to find a solution that is at least viable. Get it working FIRST. If there is a problem with time or memory, etc., only then do you consider optimizing your code. Programmers (me included) can often spend far too much time optimizing code that has no need for that.

Programmer time is generally far more valuable than CPU time. So avoid premature optimization of your code.

When you do look for a code optimization, there are many ways to solve any problem. Sometimes a vectorized solution will be right. Sometimes a looped solution is better. And sometimes it is right to look for mathematical insight to find a completely different solution.

John

