All about pixel colors: Scaled indexed images

In my last post on pixel colors, I described the truecolor and indexed image display models in MATLAB, and I promised to discuss an important variation on the indexed image model. That variation is the scaled indexed image, and the relevant MATLAB image display function is imagesc.

Suppose, for example, we use a small magic square:

A = magic(5)

A =

    17    24     1     8    15
    23     5     7    14    16
     4     6    13    20    22
    10    12    19    21     3
    11    18    25     2     9

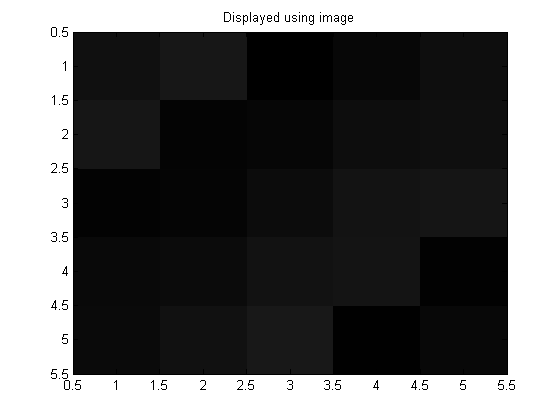

Now let's display A using image and a 256-color grayscale colormap:

map = gray(256);

image(A)

colormap(map)

title('Displayed using image')

The displayed image is very dark. That's because the element values of A vary between 1 and 25, so only the first 25 entries of the 256-entry colormap are being used. All of these entries are dark shades of gray.
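To put a number on "dark", here is a minimal sketch that looks up the gray levels image actually uses for this matrix. It assumes the usual layout of gray(256), in which row k holds the gray level (k-1)/255; the variable names are my own for illustration.

```matlab
% Sketch: which gray levels does image(A) use with a gray(256) colormap?
A = magic(5);
map = gray(256);                 % row k holds the gray level (k-1)/255

% With direct mapping, the values 1..25 index colormap rows 1..25.
usedRows = min(A(:)):max(A(:));
usedLevels = map(usedRows, 1);   % R, G, and B are equal in a gray map, so one column is enough

darkest = usedLevels(1);         % gray level of colormap row 1
brightest = usedLevels(end);     % gray level of colormap row 25
fprintf('Gray levels used: %.3f to %.3f (full range is 0 to 1)\n', darkest, brightest);
```

The brightest level in use is 24/255, or less than a tenth of the way from black to white.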

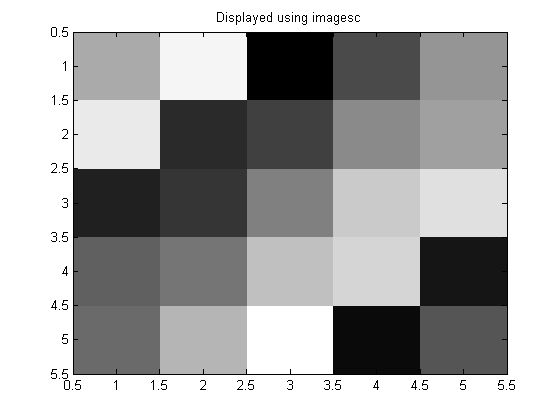

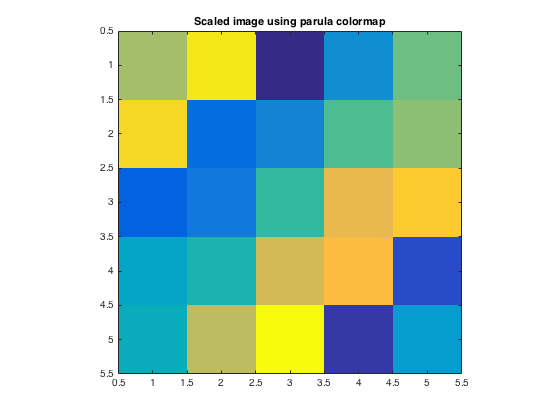

Compare that with using imagesc:

imagesc(A)

colormap(map)

title('Displayed using imagesc')

Here's what's going on. The lowest value of A, which is 1, is displayed using the first colormap color, which is black. The highest value of A, which is 25, is displayed using the last colormap color, which is white. All the other values between 1 and 25 are mapped linearly onto the colormap. For example, the value 12 is displayed using the 118th colormap color, which is an intermediate shade of gray.

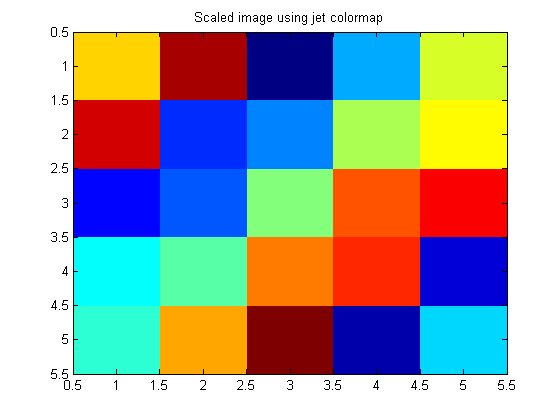

You can switch colormaps on the fly, and the values of A will be mapped onto the new colormap.

colormap(jet)

title('Scaled image using jet colormap')

Let's dig into the low-level Handle Graphics properties that are controlling these behaviors. Image objects have a property called CDataMapping.

close all

h = image(A);

get(h, 'CDataMapping')

close

ans = direct

You can see that its default value is 'direct'. This means that values of A are used directly as indices into the colormap. Compare that with using imagesc:

h = imagesc(A);

get(h, 'CDataMapping')

close

ans = scaled

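The imagesc function creates an image whose CDataMapping property is 'scaled'. Values of A are scaled to form indices into the colormap. The specific formula is:

$$k = \left\lfloor m \, \frac{C - c_{\min}}{c_{\max} - c_{\min}} \right\rfloor + 1$$

Here $k$ is the index into the colormap, $m$ is the number of colormap colors, $C$ is a value in A, and $c_{\min}$ and $c_{\max}$ come from the CLim property of the axes object. Values of $k$ that fall outside the range 1 to $m$ are clipped to that range.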
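Here is a minimal sketch of the scaled mapping as plain arithmetic. It follows the scaled-mapping formula as I understand it from the MATLAB documentation for image objects (scale, truncate with fix, add 1, then clip to the colormap length). The helper scaledIndex and the variable lims are hypothetical names used only for illustration; neither is a MATLAB function or property.

```matlab
% Sketch of scaled mapping; scaledIndex is a hypothetical helper, not a MATLAB function.
A = magic(5);
m = 256;                          % number of colormap rows, as in gray(256)
lims = [min(A(:)) max(A(:))];     % the color limits imagesc picks by default: [1 25]

% Scale each value into 1..m; results beyond either end are clipped.
scaledIndex = @(C) min(max(fix((C - lims(1)) ./ (lims(2) - lims(1)) .* m) + 1, 1), m);

idx = scaledIndex(A);             % colormap row for every element of A
disp(scaledIndex([1 12 25]))      % colormap rows for the values 1, 12, and 25
```

For the values 1, 12, and 25 this gives rows 1, 118, and 256, which matches the black, mid-gray, and white colors described earlier.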

h = imagesc(A);

get(gca, 'CLim')

close

ans =

1 25

It's not a coincidence that the CLim (color limits) vector contains the minimum and maximum values of A. The imagesc function does that by default. But you can also specify your own color limits using an optional second argument to imagesc:

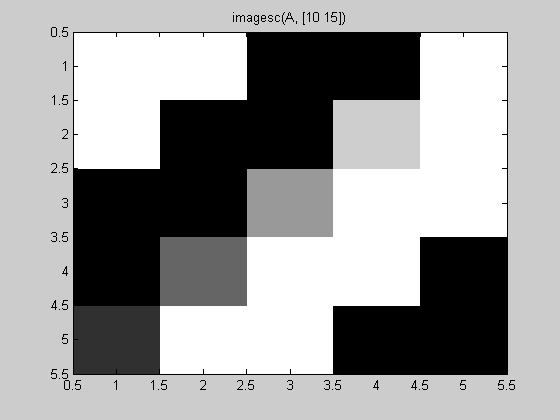

imagesc(A, [10 15])

colormap(gray)

title('imagesc(A, [10 15])')

The value 10 (and values below 10) is displayed as black. The value 15 (and values above 15) is displayed as white. Values between 10 and 15 are displayed as shades of gray.
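The same display can be built from the two low-level properties discussed above. This is a sketch of what I'd expect to be an equivalent setup, not a description of how imagesc is implemented internally: create the image with scaled mapping, then set the color limits on the axes yourself.

```matlab
% Sketch: reproduce imagesc(A, [10 15]) using image plus the two properties directly.
A = magic(5);
h = image(A, 'CDataMapping', 'scaled');   % use scaled rather than direct mapping
set(gca, 'CLim', [10 15])                 % color limits, playing the role of imagesc's second argument
colormap(gray(256))
title('image with scaled CDataMapping and CLim = [10 15]')
```

Because the color limits live on the axes rather than on the image, you can change CLim afterward to adjust the display without touching the data in A.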

Scaled image display is very important to engineering and scientific applications of image processing, because often what we are looking at isn't a "picture" in the ordinary sense. Instead, it's an array containing measurements in some sort of physical unit that's not related to light intensity. For example, I showed this image in my first "All about pixel colors" post:

This is a scaled-image display of a matrix containing terrain elevations in meters.
