Trip Report: SuperComputing 2015

SC15, the International Conference for High Performance Computing, Networking, Storage and Analysis, was held in Austin, Texas, last week, November 15 through 20. This is the largest trade show and conference that MathWorks participates in each year.

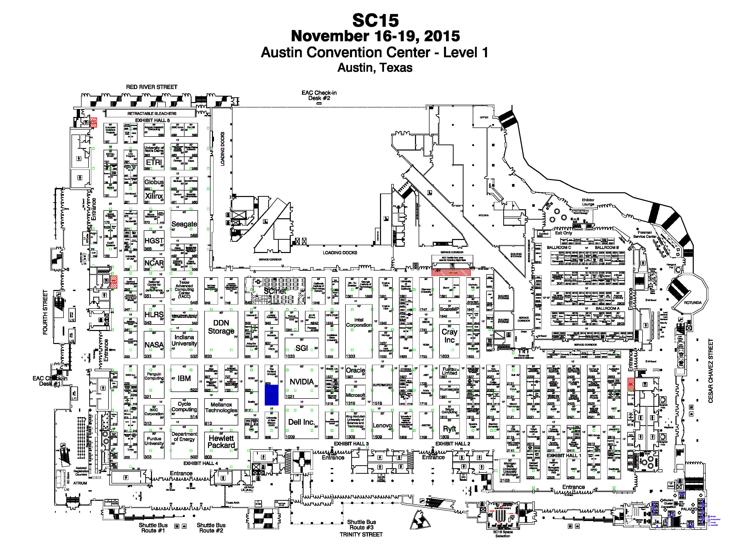

The blue rectangle is the location of the MathWorks booth.

Contents

Supercomputing Conferences

SC15 was the 27th annual SC conference. The first one was in Orlando in 1988. There were only a couple of hundred attendees. I went to the first two SC's because I worked for an ill-fated computer company named Ardent for a few years in the late 1980's. When Ardent failed I joined MathWorks full time and stopped going to SC because MathWorks was not in the supercomputer business.

I started going to SC's again eleven years ago when MathWorks announced the Parallel Computing Toolbox at SC04 and we began our annual participation. By then SC's had grown to be huge events. This year there were over 12,900 attendees, setting a record.

The conference combines a large trade show and a professional meeting with technical presentations, awards, tutorials and workshops. Here is a link to the conference web site. I don't know how long this link will continue to exist after the conference.

The MathWorks Booth

There were 352 exhibitors at the trade show. MathWorks had a 20x30 foot spot near the center of the exhibit hall. I have colored it blue in the map above. We were surrounded by Nvidia, Dell, University of New Mexico, Scality, San Diego Supercomputer Center, and The Portland Group. This gives you an idea of the variety of exhibitors. At 50x50 feet, Nvidia's booth was one of the largest. Here is a photo of our booth.

(Photo by Robin Nelson, MathWorks)

Sixteen MathWorkers attended the conference. Almost half of them were from our Cambridge, UK, office where most of the development of our high performance computing tools is done. Five of the attendees had never been to a trade show before. SC15 provided a formidable introduction.

Even though it was not supercomputing, one of our demos that drew lots of attention involved a Raspberry Pi doing live video edge detection.

Alan Alda

One of the highlights for me was the keynote address by Alan Alda. Alda first came to public attention for his role as Hawkeye Pierce in the hit TV series M*A*S*H that originally ran for eleven years in 1972-83 and then ran forever in reruns. He later had another eleven year stint as host of the TV series Scientific American Frontiers. He is now a visiting professor at and founding member of the Center for Communicating Science at Stony Brook University.

Alda's talk was about how scientists and engineers can better communicate about the work they are doing with the public at large, and with each other. He was engaging, funny, and inspiring.

Gordon Bell Prize

One of the most interesting technical talks that I heard was by Omar Ghattas, a professor in Geological Sciences at the University of Texas. The work is described in a paper available here by Ghattas and nine other authors, most of them his current and former grad students. The title of the paper is "An extreme-scale implicit solver for complex PDEs: highly heterogeneous flow in earth's mantle." Later in the conference it was announced that the work had won the prestigious Gordon Bell Prize.

Convection of the earth's mantle drives plate tectonics and continental drift and, in turn, controls earthquakes and volcanoes. Their model is a partial differential equation with a nonlinear viscosity term that varies over six orders of magnitude. The geometry is the mantle of the entire earth. A resolution of 0.5km is required in some regions, so a highly adaptive mesh is necessary. The simulation involves 600 billion nonlinear equations.

Computations were carried out on Sequoia, an IBM BlueGene/Q located at Lawrence Livermore National Laboratory. The machine has 96 racks, each with 1,024 nodes hosting 16 core POWER processor chips. That's a total of over 1.5 million cores. Quoting the abstract of their paper:

These features present enormous challenges for extreme scalability. We demonstrate that contrary to conventional wisdom algorithmically optimal implicit solvers can be designed that scale out to 1.5 million cores for severely nonlinear, ill-conditioned, heterogeneous, and anisotropic PDEs.

TOP 500

Twice a year, in June at ISC in Germany and in November at SCxx in the USA, the TOP500 organization announces the world's fastest supercomputers. The ranking is based on the LINPACK benchmark. The TOP500 web site lists the authors as:

- Erich Strohmaier, NERSC/Lawrence Berkeley National Laboratory

- Martin Meuer, Prometeus

- Jack Dongarra, University of Tennessee

- Horst Simon, NERSC/Lawrence Berkeley National Laboratory

Once again the list presented at SC15 did not change very much from earlier lists. The top five remained unchanged. For three years now the fastest computer in the world, according to this ranking, has been the Tianhe-2 in Guangzhou, China. This machine has over three million cores and a LINPACK rating of 33.8 petaflops.

Everybody agrees that the LINPACK benchmark is not representative of the kind of computations done in high performance computing today. But it is something to keep track of for its own intrinsic historic value. Strohmaier does a good job of this with the analysis he presents at the conferences and on the TOP500 web site.

- Category:

- History,

- People,

- Performance,

- Supercomputing,

- Travel

Comments

To leave a comment, please click here to sign in to your MathWorks Account or create a new one.