A Glimpse into Floating-Point Accuracy

There are frequent posts on the MATLAB newsgroup as well as lots of questions posed to Technical Support about the floating point accuracy of MATLAB. Many people think they have found a bug when some seemingly simple arithmetic doesn't give the intuitive answer. The issue that arises has to do with the finite number of bits that a computational device uses to store and operate on values.

Contents

Calculator Demo

Have you ever impatiently waited for an elevator and continued pushing the UP button in the hopes that the elevator will arrive faster? If so, you may also have impatiently pushed buttons repetitively on a calculator (assuming you know what a calculator is!). It's not just MATLAB that computes values in a way that seems to defy our expectations sometimes.

clear

format longHere's what I tried. I put the number 7 into my calculator. I think it calculates in single precision, but I'm not sure. It shows 7 digits after the decimal point when I take the square root, and doing it several times, I get

sqrts7inSingle = single([7 2.6457513 1.6265765 1.275373])

sqrts7inSingle = 7.0000000 2.6457512 1.6265765 1.2753730

Next, I take the final number and square it 3 times, yielding

squaringSqrt7inSingle = single([1.275373 1.6265762 2.6457501 6.99999935])

squaringSqrt7inSingle = 1.2753730 1.6265762 2.6457500 6.9999995

Compare the difference between the original and final values.

sqrts7inSingle(1)-squaringSqrt7inSingle(end)

ans = 4.7683716e-007

This number turns out to be very interesting in this context. Compare it to the distance between a single precision value of 7 and its next closest value, also know as the floating-point relative accuracy.

eps(single(7))

ans = 4.7683716e-007

We get the same value! So, we can demonstrate the effects of finite storage size for values on a handheld calculator. Let's try the equivalent in MATLAB now.

Accuracy in MATLAB

Let's do a similar experiment in MATLAB using single precision so we have a similar situation to the one when I used the calculator. In this case, I am going to keep taking the square root until the value reaches 1.

num = single(7); count = 0; tol = eps('single'); dnum = sqrt(num); while abs(dnum-1)>=tol count = count+1; dnum = sqrt(dnum); end count

count =

23

So, it took 23 iterations before we reached the number 1. But now we're in a funny situation. There is no way to square exactly 1 and ever reach our original value of 7.

Using Double Precision

Let's repeat the same experiment from the calculator in MATLAB using double precision.

num = 7; sqrtd = sqrt(num); sqrtd(2) = sqrt(sqrtd(1)); sqrtd(3) = sqrt(sqrtd(2)) prods(1) = sqrtd(end); prods(2) = prods(1)^2; prods(3) = prods(2)^2 finalNum = prods(3)^2

sqrtd = 2.64575131106459 1.62657656169779 1.27537310685845 prods = 1.27537310685845 1.62657656169779 2.64575131106459 finalNum = 7.00000000000001

Find the difference between finalNum and num and compare to the proper relative floating-point accuracy:

diffNum = finalNum-num acc = eps(num)*6 diffNum-acc

diffNum =

5.329070518200751e-015

acc =

5.329070518200751e-015

ans =

0

Why did I use 6 when I calculated acc? Because I performed 6 floating point operations to get the my final answer, 3 sqrt applications, followed by squaring the answers 3 times.

Typical MATLAB Pitfall

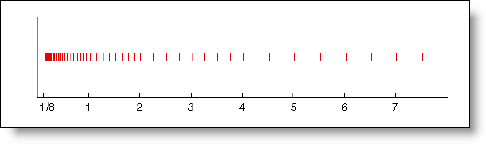

The most common MATLAB pitfall I run across is when users check equality of values generated from the : operator. The code starts innocently enough. I've actually turned the logic around here a bit, so we will print out values in the loop where the loop counter may not be exactly what the user expects.

format short for ind = 0:.1:1; if ind ~= fix(10*ind)/10 disp(ind - fix(10*ind)/10) end end

5.5511e-017 1.1102e-016 1.1102e-016

And then the question is, why do any of the values print out? It's because computers can't represent all numbers exactly given a fixed storage size such as double precision.

Conclusions

This was a very simplified explanation about floating point, but one, I hope, that is easily understood. Let me know.

References

Here are some pointers to more resources.

Published with MATLAB® 7.2

- Category:

- Common Errors

Comments

To leave a comment, please click here to sign in to your MathWorks Account or create a new one.