Text Mining Shakespeare with MATLAB

Have you ever wondered how Google provides the auto-complete feature in Google Suggest? Or sometimes you see results of hilarious or annoying auto-correct features on your smartphone? Today's guest blogger, Toshi Takeuchi, explains a natural language processing approach through a fun text mining example with Shakespeare.

Contents

Predictive Text Game

There is a simple but powerful natural language processing approach called n-gram-based language models which you can have a lot of fun with using MATLAB.

To see how it works, we will create a predictive text game that generates random Shakespearean text automatically. You can also specify the first word to generate a random sentence. Here are a couple of auto generated fake Shakespearan quotes:

didst thou kill my cousin romeo parting is such sweet sorrow that i ask again nurse commend me to your daughter borrow cupid s wings and soar with them o mischief thou art like one of these accidents love is a most sharp sauce

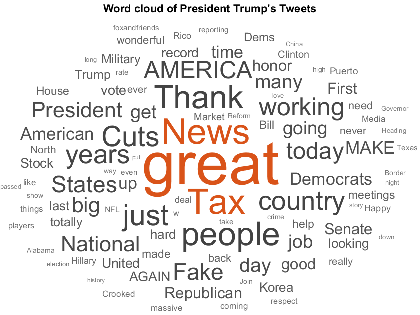

I happen to use Romeo and Juliet from Project Gutenberg for this example, but you can use any collection of text data. I almost thought about using comedian Amy Schumer quotes. If you have a collection of your own writing, such as emails, SMS and such, this can generate text that sounds like you (check out this XKCD cartoon). If you have collection of pirate talks, you can talk like them. That's going to be fun.

N-grams

Let's start with the basics. An N-gram is a sequence of words that appear together in a sentence. Commonly word tokens are used, and they are unigrams. You can also use a pair of words, and that's a bigram. Trigrams use three words... all the way to N-grams for N words. Let's try using this ngrams function.

ngrams('a b c d e', 1) % unigrams ngrams('a b c d e', 2) % bigrams ngrams('a b c d e', 3) % trigrams

ans =

'a' 'b' 'c' 'd' 'e'

ans =

'a b' 'b c' 'c d' 'd e'

ans =

'a b c' 'b c d' 'c d e'

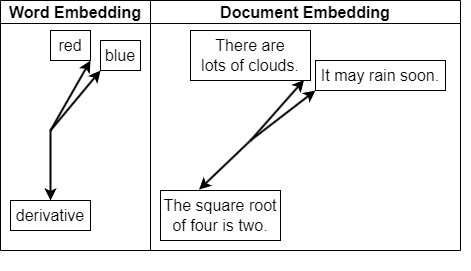

Language Model

N-grams are used to predict a sequence of words in a sentence based on chained conditional probabilities. These probabilities are estimated by mining a collection of text known as a corpus; we will use 'Romeo and Juliet' as our corpus. Language models are made up of such word sequence probabilities.

Here is a bigram-based example of how you would compute such a probability.

P(word2|word1) = c('word1 word2')/c(word1)P(word2|word1) is a conditional probability of word2 following word1, and you compute it by dividing the count of the igram 'word1 word2' by the count of word1. Here is an example for trigrams.

P(word3|'word1 word2') = c('word1 word2 word3')/c('word1 word2')Word sequences are not always determined by the previous words. This is a very simplistic approach (known as a Markov model). However, it is easy to model and works reasonably well. Wikipedia provides an example of how this can be useful in resolving ambiguity in speech recognition applications, where the phrases "recognize speech" and "wreck a nice beach" are pronounced almost the same in American English but mean very different things. You can probably guess that "recognize speech" would have a higher probability than "wreck a nice beach". A speech recognition application would adopt the higher probability option as the answer.

Reading and Preprocessing Shakespeare

The Project Gutenberg text file is in a plain vanilla ASCII file format with LFCR line breaks. It comes with a lot of extra header and footer text that we want to remove. I am assuming you have downloaded the text file to your current folder.

romeo = fileread('pg1513.txt'); % read file content romeo(1:13303) = []; % remove extra header text romeo(end-144:end) = []; % remove extra footer text disp(romeo(662:866)) % preview the text

ACT I. Scene I. A public place. [Enter Sampson and Gregory armed with swords and bucklers.] Sampson. Gregory, o' my word, we'll not carry coals. Gregory. No, for then we should be colliers.

You need to remove non-dialogue text, such as stage directions. You also need to add sentence markers at the beginning and end of each, such as <s> and </s>. We will use sentences with at least 3 words. This procedure is handled in the preprocess function.

processed = preprocess(romeo); % preprocess text disp([processed{6} char(10) processed{7}]) % preview the result processed = lower(processed); % lowercase text

<s> Gregory, o' my word, we'll not carry coals. </s> <s> No, for then we should be colliers. </s>

Building a Bigram Language Model

Let's use a simple bigram model with bigramClass to build the first Shakespeare text generator.

delimiters = {' ', '!', '''', ',', '-', '.',... % word boundary characters

':', ';', '?', '\r', '\n', '--', '&'};

biMdl = bigramClass(delimiters); % instantiate the class

biMdl.build(processed); % build the model

Generating bigrams... ......................... Building a bigram model... ................

Here is an example of how you use the bigram model to get the probability of 'thou art'. Rows represents the first word in a bigram, and columns the second.

row = strcmp(biMdl.unigrams, 'thou'); % select row for 'thou' col= strcmp(biMdl.unigrams, 'art'); % select col for 'art' biMdl.mdl(row,col) % probability of 'thou art'

ans =

0.10145

Generating Bigram Shakespeare Text

Using this bigram language model, you can now generate random text that hopefully sounds Shakespearean. This works by first randomly selecting a bigram that starts with <s> based on its probability, and then randomly selecting another bigram based on its probability, starting with the second word in the first bigram, and so forth, until we encounter </s>. This is implemented in the functions textGen and nextWord.

rng(1) % for reproducibility textGen(biMdl) % generate random text

ans =

'blister d further than groaning for me'

'this fatal points and every day that shot through all will consents'

'conceit more most sharp ground of all the wind you and jocund day of t...'

'alas the measure and put to take thou wast the break at leisure serves...'

'cast me and said an alderman drawn among these my master and scorn the...'

Generating Trigram Shakespeare Text

Bigram sentences sound a bit like Shakespeare but they don't make a lot of sense. Would we do better with a trigram model? Let's try it with trigramClass.

triMdl = trigramClass(delimiters); % generate trigrams triMdl.build(processed, biMdl); % build a trigram model rng(2) % for reproducibility textGen(triMdl, 'thou') % start with 'thou'

Generating trigrams...

.........................

Building a trigram model...

......................

ans =

'thou hither tell me good my friend'

'thou canst not teach me how i love'

'thou know st me oft for loving rosaline'

'thou hither tell me how i love thy wit that ornament to shape and love...'

'thou cutt st my lodging'

Create a Smartphone App

If you liked this XKCD cartoon which shows an example of predictive text smartphone app, you may want to create your own. If so, check out this webinar that shows you how to turn MATLAB code into a mobile app through C code generation MATLAB to iPhone and Android Made Easy

Summary

You saw that the trigram model worked better than the bigram model, but William Shakespeare would have had nothing to fear about such models taking over his playwright job. We talked about practical uses like auto-completion, auto-correction, speech recognition, etc. We also discusssed how you could go from MATLAB code to a mobile app using C code generation.

In practical natural language processing applications, such as resolving ambiguity between "recognize speech" vs. "wreck a nice beach" in speech recognition, the model requires further refinements.

- To score a sentence, you use the chain rule to compute a product of a bunch of conditional probabilities. Since they are small numbers, you get even a smaller number by multiplying them, causing arithmetic underflow. We should use log probabilities instead.

- How do you deal with new sequences or a new word not seen in the corpus? We need to use smoothing or backoff to account for unseen data.

To learn what you can do with text in MATLAB, check out this awesome introductory book Text Mining with MATLAB.

For a casual predictive text game just for fun, you can play with the simple models I used in this post. Try out the code examples here, and building your own random text generator from any corpus of your interest. Or try to implement the score method that incorporates the suggested refinements using the code provided here.

If you have an interesting use of language models, please share in the comments here.

Comments

To leave a comment, please click here to sign in to your MathWorks Account or create a new one.