Computational Geometry in MATLAB R2009a, Part I

I'm pleased to introduce Damian Sheehy as this week's guest blogger. Damian is a geometry developer at The MathWorks, his background is in the area of geometric modeling and mesh generation.

Contents

A Creature Called Epsilon

When I set out to write this I reviewed some of Loren's past blogs to refresh my sense of what goes. When I read her blog on A Glimpse into Floating-Point Accuracy, Another Lesson in Floating Point, and Collinearity, I knew I was in familiar territory. The chances are, you too have had a brush with epsilon at some point in your programming past.

I had my first encounter with ulp (unit in the last place) and his accomplice eps when I was a graduate student in the early 90s. My advisor and I were working on an algorithm that was based on a Delaunay triangulation. The triangulation algorithm would often "fall over" due to numerical problems. I wasted effort trying to "fine tune" the tolerances to get our collection of datasets to triangulate successfully. As you know, this is like trying to push a wrinkle out of a fully-fitted carpet. Sure enough, before long my advisor would present a new dataset from a research collaborator and I was back in business. The frustrating part was the lack of information on how to deal with these failures, because they were rarely addressed in texts or technical publications. When I was finishing up at graduate school and thereafter things began to change for the better. Technical journals and conferences dealing with geometric computing began to solicit research papers on robustness. The papers that appeared through the years were encouraging and I had a reassuring sense that help was on the way.

Why Geometric Computing is Susceptible to Robustness Issues

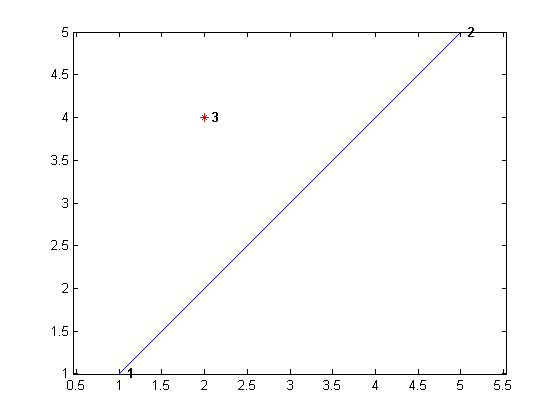

In Loren's blog on Collinearity, we realized the collinearity test for three points could viewed as computing the area of a triangle formed by the points and then checking for zero area. This reduced the geometric test to computing a determinant. In fact, this geometric test can also be used to tell whether a query point lies to the left or the right of an oriented line defined by two points. Here, give it a try . . .

p1 = [1 1];

p2 = [5 5];

p3 = [2 4];

plotPointLine(p1,p2,p3);

axis equal;

pointLineOrientation(p1,p2,p3)ans = LEFT

q1 = [1 1]; q2 = [5 5]; q3 = [4 2]; pointLineOrientation(q1,q2,q3)

ans = RIGHT

dbtype pointLineOrientation1 function orient = pointLineOrientation(p1,p2,p3) 2 mat = [p1(1)-p3(1) p1(2)-p3(2); ... 3 p2(1)-p3(1) p2(2)-p3(2)]; 4 detmat = det(mat); 5 if(detmat == 0) 6 orient = 'ON'; 7 else if(detmat < 0) 8 orient = 'RIGHT'; 9 else orient = 'LEFT'; 10 end 11 end 12

dbtype plotPointLine

1 function plotPointLine(p1,p2,p3)

2 P = [p1;p2;p3];

3 plot(P(1:2,1),P(1:2,2));

4 hold on;

5 plot(p3(1),p3(2),'*r')

6 ptlabels = arrayfun(@(n) {sprintf(' %d', n)}, (1:3)');

7 Hpl = text(P(:,1),P(:,2),ptlabels, 'FontWeight', 'bold');

If the point lies to the left of the line, the sign of the determinant will be positive – corresponding to a triangle of positive area. Conversely if the point lies on the other side. As we saw before, if the query point is on the line all three points are collinear and the determinant is zero. Many problems in geometric computing reduce to evaluating the sign of a determinant. The construction of a 2D Delaunay triangulation is based on two geometric tests. The point-line orientation test which we just saw, and a point-in-circle test which is used to decide if a query point is within the circumcircle defined by the three vertices of a triangle. It is surprising that you only need two simple geometric tests that you learned in high-school and some programming knowledge to write a 2D Delaunay triangulation algorithm. However, if you were to try this for real you would find things can sometimes go wrong – very wrong.

Robust Delaunay Triangulations

The integrity of the Delaunay triangulation algorithm hinges on the correct evaluation of the determinants used in the geometric tests. Problems arise when the determinant is ambiguously close to zero. Inconsistencies creep in due to the presence of numerical roundoff, orientation tests can return an incorrect outcome, and the algorithm can subsequently fail.

The Qhull computational geometry library, which was developed in the early 1990s, identified these numerical precision problems during the computation. Qhull communicated the problems to the user through a warning or error message, and provided some helpful interactive options to try to work around the issues.

For example, if we compute the Delaunay triangulation of the corner points of a unit square using the Qhull-based delaunayn method, a numerical precision warning is issued; this is because all four points are co-circular. Qhull provides an option to "workaround" these problems; the 'QJ' option adds noise to the points to avoid this degenerate scenario.

X = [0 0; 1 0; 1 1; 0 1]

tri = delaunayn(X, {'QJ'})X =

0 0

1 0

1 1

0 1

Warning: qhull precision warning:

The initial hull is narrow (cosine of min. angle is 1.0000000000000000).

A coplanar point may lead to a wide facet. Options 'QbB' (scale to unit box)

or 'Qbb' (scale last coordinate) may remove this warning. Use 'Pp' to skip

this warning. See 'Limitations' in http://www.qhull.org/html/qh-impre.htm

tri =

1 2 4

4 2 3

While this workaround is helpful, is not guaranteed to be robust and failure can still arise in practice. Fortunately, technology has progressed since the early 1990s.

In Loren's earlier blog on Collinearity, follow-up comments cited numerical inaccuracies associated with the computation of a determinant, and recommended the use of rand/SVD as being more numerically reliable. But, reducing numerical roundoff does not guarantee the correct outcome of the geometric test either. The implementation is still vulnerable to numerical failure. In the past decade researchers have focused attention on robustness issues like these and on robustly computing Delaunay triangulations in particular. Regarding Delaunay triangulations, the generally accepted solution is based on the notion of Exact Geometric Computing (EGC).

One reply posted on Loren's Collinearity blog highlighted this solution, but it didn't catch focus. The idea is based on evaluating the determinant using exact arithmetic. Since this is computationally expensive, a two-step process is applied; the determinant is computed using floating point arithmetic in the usual way and a corresponding error bound is worked out along with this computation. The error bound is used to filter out the ambiguous cases, and EGC is then applied to these cases to ensure a correct outcome.

In R2009a we adopted 2D and 3D Delaunay triangulations from the Computational Geometry Algorithms Library (CGAL) to provide more robust, faster, and memory-efficient solutions in MATLAB. CGAL employs EGC and floating point filters to guarantee numerical robustness. Let's see how EGC performs for our collinear test. We will use the new class DelaunayTri, which is based on CGAL, to experiment a little.

Collinear Test Revisited

Let's take a look at Loren's collinear test again, first choose 3 points we know are collinear. If we attempt to construct a Delaunay triangulation using DelaunayTri, then a triangle should not be formed because the three points are collinear.

format long

p1 = [1 1]; p2 = [7500 7500]; p3 = [750000 750000];Here's the test based on the determinant computation

collinear = (det([p2-p1 ; p3-p1]) == 0)

collinear =

1

and the test based on rank computation.

collinear = (rank ([p1 ; p2 ; p3]) < 2)

collinear =

1

P = [p1;p2;p3];

Here's the test based on EGC.

dt = DelaunayTri(P)

dt =

DelaunayTri

Properties:

Constraints: []

X: [3x2 double]

Triangulation: []

The Triangulation is empty [] since the points are collinear, this is what we expect. Now move point p1 by a small amount to make the three points non-collinear.

p1 = [1 1+eps(5)];

Here's the test based on the determinant computation

collinear = (det([p2-p1 ; p3-p1]) == 0) % Gives incorrect resultcollinear =

1

and the test based on rank computation.

collinear = (rank ([p1 ; p2 ; p3]) < 2) % Gives incorrect resultcollinear =

1

Finally, here's the EGC-based test.

P = [p1;p2;p3];

dt = DelaunayTri(P);

collinear = isempty(dt(:,:)) % Gives the correct resultcollinear =

0

In this instance a triangle was constructed which indicates the three points are not considered to be collinear. We know this is the correct assessment.

What Conclusions Can We Draw From This?

Well, EGC plays a very important role in the robust implementation of algorithms like Delaunay triangulations, Voronoi diagrams, and convex hulls. These algorithms have a long history of being notoriously susceptible to numerical precision issues.

This raises an interesting question; should we adopt exact geometric tests for general algorithm development within MATLAB? For example; should we be using exact collinear tests and exact point-line orientation tests and the like?

In general we should not, because the environment we are working in has finite precision. If we used EGC extensively we would realize that while it is numerically correct, it may fail to capture our programming intent. In the flow of an algorithm, we take the output from one step of the computation and pass it as input to the next.

What happens if we pass inexact input to an exact geometric test? Suppose we begin our computation with three collinear points, and next we apply a matrix of rotation to reorient the points in a particular direction. In doing so we introduce inaccuracies; will the exact geometric test right those inaccuracies? No, it will not; see for yourself.

p1 = [1 1]; p2 = [7500 7500]; p3 = [750000 750000]; P = [p1;p2;p3]; T = [cos(pi/3) sin(pi/3); -sin(pi/3) cos(pi/3)]; P2 = P*T; dt = DelaunayTri(P2); collinear = isempty(dt(:,:))

collinear =

0

A triangle was constructed from points {p1, p2, p3}, so in terms of EGC the three points are considered to be noncollinear.

While the outcome is technically correct it's probably not what you expected or hoped for. In the context of Delaunay triangulations and their applications, the consequences of imprecise input are relatively benign. EGC is a powerful tool for addressing a long-standing robustness problem in this domain.

In our programming world of finite precision a judicious choice of tolerance is generally sufficient to capture the correct behavior. Now, that just begs the question; what's a judicious choice of tolerance? What would be a suitable tolerance for use in the collinearity test, for example? Well, in this context, a good tolerance in one that captures the loss in the computation of the determinant in a dynamic or relative sense. It should work when the coordinates of the points are small and it should work when they are large. A predefined fixed value will not work over a wide range of coordinate values. When I compute a tolerance-sensitive determinant to be used in a geometric test, I examine the products that are produced during the expansion. I then choose the product with the largest magnitude and use it to scale eps.

For example, when computing the determinant of matrix [a b; c d], I choose the magnitude,

mag = max(abs(a*d), abs(b*c))

and then compute the relative tolerance,

relativeTol = eps(mag);

and I apply the tolerance as follows.

collinear = (abs(det([p2-p1 ; p3-p1]) <= relativeTol))

(For simplicity, the products a*d and b*c are not reused to compute det.)

The Bottom Line?

So, what's the point of telling you all about Exact Geometric Computing? Ohhh, I am hoping this overview gives you some insight into numerical issues in geometric computing. But the important point for you, the user, is you no longer need to worry about numerical robustness issues when constructing 2D/3D Delaunay triangulations, Voronoi diagrams or Convex hulls in MATLAB. Since other applications like nearest neighbor queries and scattered data interpolation are built on these features, you can expect robstness, performance improvements, and memory efficiency there as well. If you would like to learn more, check out the video and the Delaunay triangulation demo highlighting the new computational geometry features in R2009a. Follow these links for the reference pages for the new features:

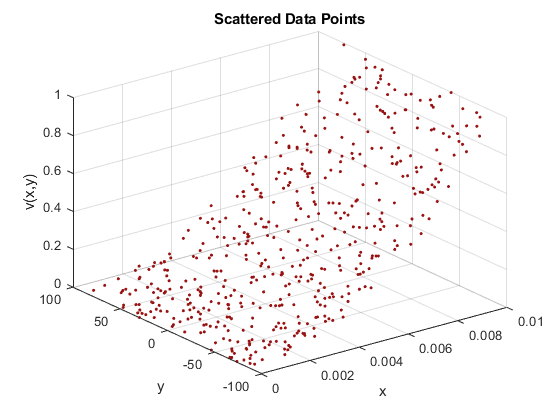

Coming up Next

Next week I will talk about an important application area of Delaunay triangulations in MATLAB, and that's Scattered Data Interpolation. We introduced new interpolation features in R2009a that improve usability, robustness, and performance, so stay tuned for more insight into that. In the meantime, please let me know your views, thoughts, and opinions here.

- Category:

- Computational Geometry,

- New Feature,

- Robustness

Comments

To leave a comment, please click here to sign in to your MathWorks Account or create a new one.